
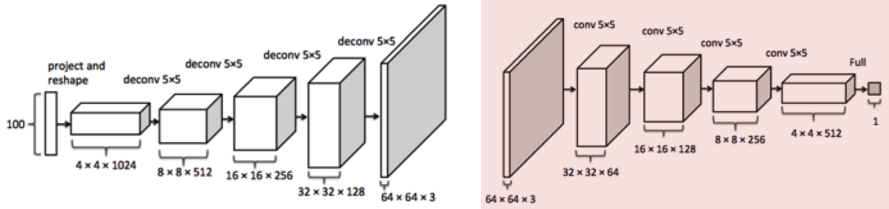

Deep Convolutional GAN

- Generator
- Discriminator
    - Contractive
    - Cross-entropy loss
    - Conv and leaky ReLU layers only (see Activation Functions#ReLu)
    - Normalised output via sigmoid (see Activation Functions#Sigmoid)
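The discriminator described above (contractive, conv and leaky ReLU layers only, sigmoid output) can be sketched in plain Python. This is a minimal illustration, not the DCGAN reference implementation: it assumes 1D signals instead of images, random untrained weights, and a leaky slope of 0.2. The helper names (`strided_conv1d`, `discriminator`) are hypothetical.

```python
import math
import random

def leaky_relu(v, slope=0.2):
    # Pass positive values through; scale negative values by a small slope
    return v if v > 0 else slope * v

def sigmoid(v):
    # Numerically stable logistic function mapping a logit to (0, 1)
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)

def strided_conv1d(signal, kernel, stride=2):
    # 'Valid' 1D cross-correlation; stride 2 roughly halves the length (contractive)
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(0, len(signal) - k + 1, stride)]

def discriminator(signal, hidden_kernels, final_kernel):
    # Hidden layers: strided conv followed by leaky ReLU, no pooling or dense layers
    h = signal
    for kernel in hidden_kernels:
        h = [leaky_relu(v) for v in strided_conv1d(h, kernel)]
    # Final conv spans the remaining length, giving a single logit
    if len(final_kernel) != len(h):
        raise ValueError("final kernel must cover the remaining feature length")
    (logit,) = strided_conv1d(h, final_kernel)
    # Sigmoid normalises the score to a probability that the input is real
    return sigmoid(logit)

# Usage with random (untrained, hypothetical) weights on a length-16 signal
random.seed(0)
x = [random.gauss(0, 1) for _ in range(16)]
hidden = [[random.gauss(0, 0.5) for _ in range(4)] for _ in range(2)]  # 16 -> 7 -> 2
final = [random.gauss(0, 0.5) for _ in range(2)]
p = discriminator(x, hidden, final)
print(p)
```

Replacing pooling with strided convolutions keeps the downsampling learnable, and ending on a convolution whose kernel covers the remaining features keeps the network conv-only up to the sigmoid.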

Loss Function (see Deep Learning#Loss Function)

$$D(S,L)=-\sum_iL_i\log(S_i)$$

- $S=(0.1, 0.9)^T$: score generated by the discriminator
- $L=(1, 0)^T$: one-hot label vector
    - Step 1: depends on the choice of real/fake
    - Step 2: one-hot fake vector
- $\sum_i$: sum over all images in the mini-batch
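A worked version of the cross-entropy loss $D(S,L)$ using the example score $S=(0.1, 0.9)^T$ and label $L=(1, 0)^T$. This sketch assumes the inner sum runs over the components of the score and label vectors, and that an outer sum adds up the per-image losses across the mini-batch; the function names are hypothetical.

```python
import math

def cross_entropy(scores, labels):
    # D(S, L) = -sum_i L_i * log(S_i); terms with L_i = 0 contribute nothing,
    # so they are skipped (this also avoids log(0) when a score is exactly 0)
    return -sum(l * math.log(s) for s, l in zip(scores, labels) if l != 0)

def batch_cross_entropy(batch_scores, batch_labels):
    # Sum the per-image loss over every image in the mini-batch
    return sum(cross_entropy(s, l) for s, l in zip(batch_scores, batch_labels))

# Example from the notes: S = (0.1, 0.9)^T, L = (1, 0)^T
loss = cross_entropy([0.1, 0.9], [1, 0])
print(round(loss, 3))  # 2.303, i.e. -log(0.1): the score disagrees with the label

# If the score agreed with the label, the loss would be much smaller
print(round(cross_entropy([0.9, 0.1], [1, 0]), 3))  # 0.105, i.e. -log(0.9)
```

Because $L$ is one-hot, only the score at the hot position matters: the loss is simply $-\log$ of the probability the discriminator assigned to the correct class.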

| Noise | Image |
|---|---|
| $z$ | $x$ |

- Generator wants $D(G(z))=1$
    - Wants to fool the discriminator
- Discriminator wants $D(G(z))=0$
    - Wants to correctly catch the generator
- For real data, the discriminator wants $D(x)=1$

$$J^{(D)}=-\frac 1 2 \mathbb E_{x\sim p_{data}}\log D(x)-\frac 1 2 \mathbb E_z\log (1-D(G(z)))$$

$$J^{(G)}=-J^{(D)}$$

- First term for real images
- Second term for fake images
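The discriminator cost $J^{(D)}$ and the zero-sum generator cost $J^{(G)}=-J^{(D)}$ can be estimated from a mini-batch of discriminator outputs. This is a minimal sketch assuming the expectations are replaced by batch means and that every output lies strictly inside $(0, 1)$; the function names are hypothetical.

```python
import math

def discriminator_loss(d_real, d_fake):
    # J^(D) = -1/2 E_x[log D(x)] - 1/2 E_z[log(1 - D(G(z)))]
    # Each expectation is estimated by a mean over a mini-batch of D outputs,
    # assumed to lie strictly in (0, 1) so both logs are defined
    real_term = sum(math.log(d) for d in d_real) / len(d_real)        # real images
    fake_term = sum(math.log(1.0 - d) for d in d_fake) / len(d_fake)  # fake images
    return -0.5 * real_term - 0.5 * fake_term

def generator_loss(d_real, d_fake):
    # Zero-sum game: J^(G) = -J^(D)
    return -discriminator_loss(d_real, d_fake)

# D outputs on real images x and on generated images G(z)
confident = discriminator_loss([0.99, 0.98], [0.01, 0.02])  # D catches the generator
confused = discriminator_loss([0.5, 0.5], [0.5, 0.5])       # D cannot tell them apart
fooled = discriminator_loss([0.5, 0.5], [0.99, 0.98])       # G fools D
print(confident < confused < fooled)  # True
```

The discriminator minimises $J^{(D)}$ by pushing $D(x)$ towards 1 and $D(G(z))$ towards 0. The generator minimises $J^{(G)}$, which means maximising $J^{(D)}$, achieved as $D(G(z))$ approaches 1. When the discriminator outputs 0.5 everywhere, $J^{(D)}=\log 2$.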

Mode Collapse

- Generator finds an easy solution
    - Learns one image, produced for most noise inputs, that fools the discriminator
- Mitigate with minibatch discrimination
    - Match the distribution of $G(z)$ to that of $x$
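The minibatch mitigation above can be sketched with minibatch discrimination as described by Salimans et al. (2016). The discriminator compares each sample's features against the rest of the batch, so a mode-collapsed batch of near-identical fakes becomes easy to spot. This is a pure-Python sketch under stated assumptions: the tensor `T` would be learned in practice but is random here, the feature vectors are made up, and the helper names are hypothetical.

```python
import math
import random

def project(f, T):
    # M_i = f(x_i) T: contract a length-A feature vector with an A x B x C tensor
    A, B, C = len(T), len(T[0]), len(T[0][0])
    return [[sum(f[a] * T[a][b][c] for a in range(A)) for c in range(C)]
            for b in range(B)]

def minibatch_features(batch, T):
    # For each sample i and kernel b: o(x_i)_b = sum_j exp(-||M_{i,b} - M_{j,b}||_1)
    Ms = [project(f, T) for f in batch]
    B = len(T[0])
    return [[sum(math.exp(-sum(abs(p - q) for p, q in zip(Mi[b], Mj[b])))
                 for Mj in Ms)
             for b in range(B)]
            for Mi in Ms]

def augment(batch, T):
    # Concatenate f(x_i) with o(x_i) before the discriminator's next layer
    return [f + o for f, o in zip(batch, minibatch_features(batch, T))]

random.seed(1)
A, B, C = 4, 3, 2
T = [[[random.gauss(0, 1) for _ in range(C)] for _ in range(B)] for _ in range(A)]
collapsed = [[0.5, -0.2, 0.1, 0.3] for _ in range(5)]  # mode-collapsed fakes
diverse = [[random.gauss(0, 1) for _ in range(A)] for _ in range(5)]
print(minibatch_features(collapsed, T)[0])  # [5.0, 5.0, 5.0]: every sample matches
print(minibatch_features(diverse, T)[0])    # smaller values: samples differ
```

Each similarity term is at most 1 (reached when two projections are identical), so a batch of $N$ identical samples produces the maximum value $N$ for every entry. The discriminator can learn to flag such batches as fake, which pushes the generator to spread $G(z)$ over more of the data distribution.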

What is Learnt?

- Encoding texture/patch detail from the training set
    - Similar to FCN
- Reproducing texture at a high level
    - Cues triggered by the code vector
- Input random noise
    - Iteratively improves visual feasibility
    - Different to FCN
- Discriminator is a task-specific classifier
- Difficult to train over diverse footage
    - Mixing concepts doesn't work
    - Single category/class