
Before 2010s

- Data hungry

    - Need lots of training data

- Processing power

- Niche

    - Few people knew or cared about CNNs

After

- ImageNet

    - ~14M images; the ILSVRC benchmark subset uses 1000 classes

- GPUs

    - General-purpose GPU computing

    - CUDA

- NIPS/ECCV 2012 (AlexNet)

    - Double-digit % gain in ImageNet accuracy

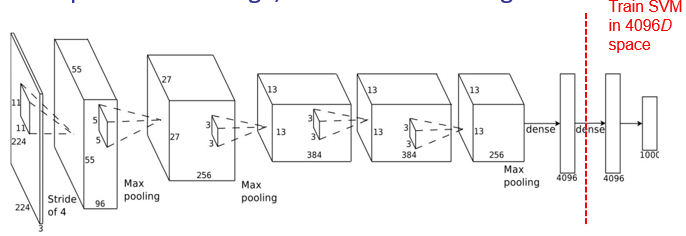

Fully Connected

- Move from convolutional feature maps towards a vector output

- Stochastic dropout

    - Randomly sub-sample channels during training, so only some connect to the dense layers
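A minimal sketch of the fully connected stage in plain Python, assuming feature maps are stored as nested lists (channels x rows x columns). It flattens the maps into one vector, applies inverted dropout (the common formulation that rescales surviving units by 1/(1-p) during training) and then a dense layer. The helper names, sizes and weights are hypothetical, and this version drops individual units rather than whole channels.

```python
import random


def flatten(feature_maps):
    """Flatten C channels, each an H x W grid, into a single vector."""
    return [v for channel in feature_maps for row in channel for v in row]


def dropout(x, p, training, rng):
    """Inverted dropout: during training, zero each unit with probability p and
    scale the survivors by 1/(1-p) so the expected activation is unchanged.
    At test time the input passes through untouched."""
    if not training or p == 0.0:
        return list(x)
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in x]


def dense(x, weights, bias):
    """Fully connected layer: out[j] = sum_i weights[j][i] * x[i] + bias[j]."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


# Hypothetical sizes: 2 channels of 2x2 features -> 3 output scores.
rng = random.Random(0)
maps = [[[1.0, 2.0], [3.0, 4.0]], [[0.5, 0.5], [0.5, 0.5]]]
x = flatten(maps)  # 8-dimensional vector
W = [[0.1] * 8, [0.0] * 8, [-0.1] * 8]
b = [0.0, 1.0, 0.0]

train_scores = dense(dropout(x, 0.5, True, rng), W, b)   # random mask applied
test_scores = dense(dropout(x, 0.5, False, rng), W, b)   # dropout switched off
```

At test time dropout is disabled, so `test_scores` is deterministic; during training each call draws a fresh random mask.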

As a Descriptor

- Most powerful as a deeply learned feature extractor

- The dense classifier at the end isn't fantastic

- Instead, use an SVM to classify the penultimate-layer activations

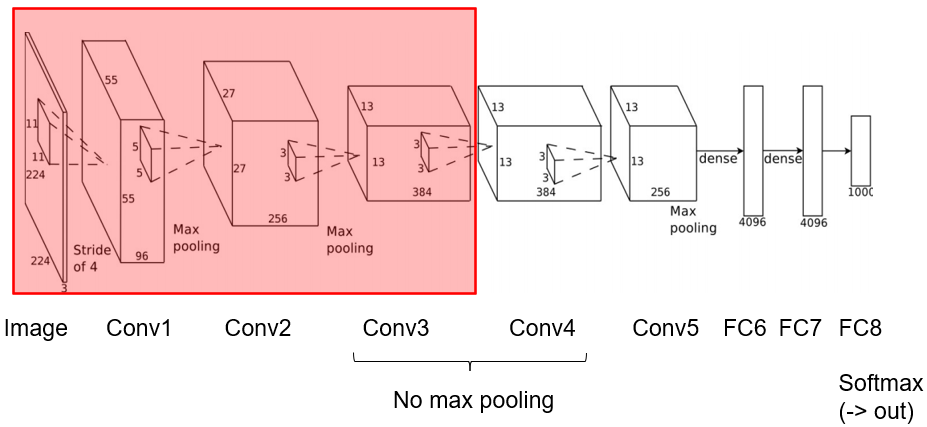
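A minimal sketch of the descriptor approach, assuming the penultimate-layer activations have already been extracted into plain feature vectors. The toy 2-D vectors below are made up for illustration (real activations usually have thousands of dimensions), and the SVM is a simple binary linear SVM trained by subgradient descent on the regularised hinge loss, not any particular library's implementation.

```python
def train_linear_svm(features, labels, lam=0.01, epochs=200, lr=0.1):
    """Binary linear SVM (labels in {-1, +1}) trained by full-batch subgradient
    descent on: lam/2 * ||w||^2 + mean(max(0, 1 - y * (w.x + b)))."""
    dim = len(features[0])
    n = len(features)
    w = [0.0] * dim
    b = 0.0
    for _ in range(epochs):
        grad_w = [lam * wi for wi in w]  # gradient of the L2 regulariser
        grad_b = 0.0
        for x, y in zip(features, labels):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            if margin < 1.0:  # inside the margin or misclassified: hinge is active
                for i in range(dim):
                    grad_w[i] -= y * x[i] / n
                grad_b -= y / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict(w, b, x):
    """Return +1 or -1 depending on which side of the hyperplane x lies."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0.0 else -1


# Hypothetical "penultimate-layer" features for two classes.
features = [[2.0, 2.0], [3.0, 2.0], [2.0, 3.0],
            [-2.0, -2.0], [-3.0, -2.0], [-2.0, -3.0]]
labels = [1, 1, 1, -1, -1, -1]
w, b = train_linear_svm(features, labels)
```

For more than two classes, one common option is to train one such SVM per class (one-vs-rest) on the same features.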

Fine-tuning

- Observations

    - Most CNNs have similar weights in conv1

    - Most useful CNNs have several conv layers

        - Many weights

        - Need lots of training data

    - Training data is hard to get

        - Labelling

- Reuse weights from another network

    - Freeze weights in the first 3-5 conv layers

        - Learning rate = 0

    - For the remaining layers, either randomly initialise them or continue training from the existing weights
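A minimal sketch of freezing via a zero learning rate, assuming each layer's weights are a flat list and one learning rate is kept per layer. The network shape, the `init_layer` helper and the placeholder gradients are hypothetical; a real framework would compute gradients by backpropagation. Here only the final layer is randomly re-initialised, while the unfrozen middle layers continue from the pretrained weights.

```python
import random


def init_layer(n_weights, rng):
    """Hypothetical helper: small random initialisation for a layer's weights."""
    return [rng.gauss(0.0, 0.01) for _ in range(n_weights)]


def sgd_step(layers, grads, layer_lrs):
    """One SGD update with a per-layer learning rate; a rate of 0 freezes the layer."""
    return [
        [w - lr * g for w, g in zip(weights, layer_grads)]
        for weights, layer_grads, lr in zip(layers, grads, layer_lrs)
    ]


rng = random.Random(42)

# Hypothetical pretrained network: 5 conv layers + 2 dense layers, 4 weights each.
pretrained = [[0.5] * 4 for _ in range(7)]
n_frozen = 3  # freeze the first 3 conv layers (the notes suggest 3-5)

# Reuse pretrained weights everywhere except the final, task-specific layer.
layers = [list(w) for w in pretrained[:-1]] + [init_layer(4, rng)]

# Learning rate 0 for frozen layers, a small rate for everything else.
layer_lrs = [0.0] * n_frozen + [0.01] * (len(layers) - n_frozen)

fake_grads = [[1.0] * 4 for _ in layers]  # placeholder gradients for illustration
updated = sgd_step(layers, fake_grads, layer_lrs)
```

Setting the rate to exactly 0 leaves the early layers untouched, which keeps their generic conv1-style filters while the later layers adapt to the new task.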