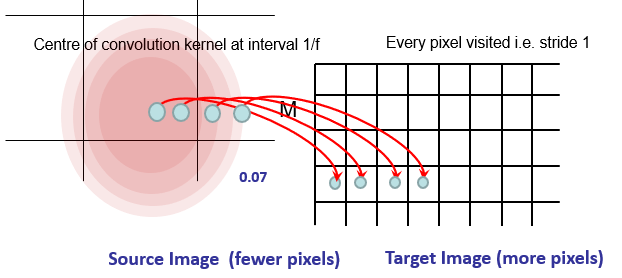

- Fractionally strided convolution

- Transposed Convolution

- Like a deep interpolation

- Convolution with a fractional input stride

- Up-sampling is convolution 'in reverse'

- Not an actual inverse convolution

- For scaling up by a factor of f

- Consider as a Convolution of stride 1/f

- Could specify kernel

- Or learn

- Can have multiple upconv layers

- Separated by ReLU

- For non-linear up-sampling conv

- Interpolation is linear
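The "stride 1/f" view above can be sketched in 1D: insert f - 1 zeros between input samples, then run an ordinary stride-1 convolution. This is a minimal pure-Python illustration, not a framework implementation. The name `upsample_1d` and the triangular kernel `[0.5, 1.0, 0.5]` are assumptions chosen for the example; with that specified kernel the result is plain linear interpolation, whereas a learned kernel would replace those weights.

```python
def upsample_1d(x, kernel, f):
    """Up-sample x by factor f (hypothetical helper for illustration).

    Inserts f - 1 zeros between samples (the fractional stride of 1/f),
    then applies a stride-1 convolution with zero padding.
    """
    # Zero-stuffing: spread the input samples f positions apart.
    stuffed = []
    for i, v in enumerate(x):
        stuffed.append(v)
        if i < len(x) - 1:
            stuffed.extend([0.0] * (f - 1))

    # Zero-pad so the output has the same length as the stuffed signal.
    pad = len(kernel) // 2
    padded = [0.0] * pad + stuffed + [0.0] * pad

    # Stride-1 sliding dot product (cross-correlation form).
    return [
        sum(k * padded[i + j] for j, k in enumerate(kernel))
        for i in range(len(stuffed))
    ]


x = [1.0, 3.0, 2.0]
linear_kernel = [0.5, 1.0, 0.5]  # specified, not learned
y = upsample_1d(x, linear_kernel, f=2)
print(y)  # [1.0, 2.0, 3.0, 2.5, 2.0]
```

The original samples survive unchanged and the new in-between values are midpoints, which is why a single such layer only interpolates linearly; stacking layers with a non-linearity between them is what allows non-linear up-sampling.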

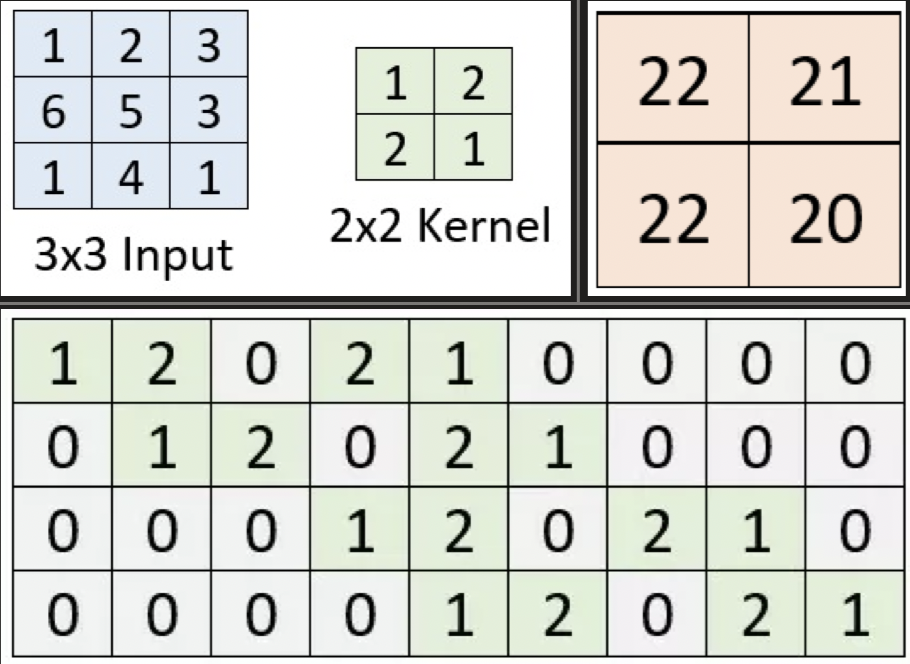

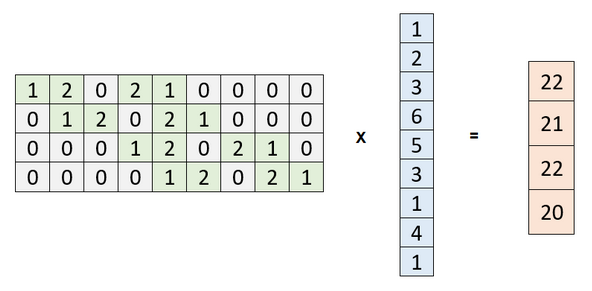

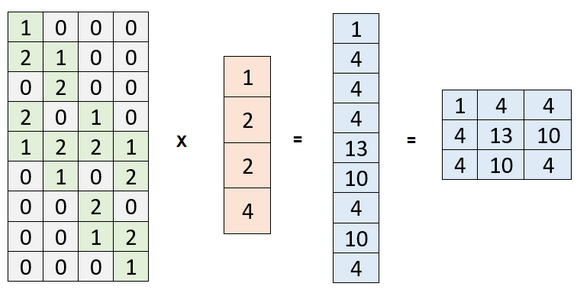

Convolution Matrix

Normal

- Equivalent operation with a flattened input

- Row per kernel location

- Many-to-one operation
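As a rough sketch of the convolution matrix, assuming a 4x4 input, a 3x3 kernel, stride 1 and no padding: each of the 4 kernel locations becomes one row of a 4x16 matrix, and multiplying that matrix by the flattened input reproduces the sliding-window result. The helper names `conv_matrix` and `matvec` and the example values are made up for illustration.

```python
def conv_matrix(kernel, in_h, in_w):
    """Build C so that C @ flatten(image) == flatten(conv(image)).

    Stride 1, no padding; one row per kernel location.
    """
    k_h, k_w = len(kernel), len(kernel[0])
    out_h, out_w = in_h - k_h + 1, in_w - k_w + 1
    rows = []
    for oy in range(out_h):
        for ox in range(out_w):
            row = [0] * (in_h * in_w)
            # Place the kernel weights at the input pixels this location covers.
            for ky in range(k_h):
                for kx in range(k_w):
                    row[(oy + ky) * in_w + (ox + kx)] = kernel[ky][kx]
            rows.append(row)
    return rows


def matvec(m, v):
    """Plain matrix-vector product on nested lists."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


kernel = [[1, 0, -1],
          [2, 0, -2],
          [1, 0, -1]]
image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
flat = [p for row in image for p in row]  # 16 values

C = conv_matrix(kernel, 4, 4)             # 4 rows x 16 columns
out = matvec(C, flat)                     # 4 values: many-to-one

# Direct sliding-window computation for comparison.
direct = [
    sum(kernel[ky][kx] * image[oy + ky][ox + kx]
        for ky in range(3) for kx in range(3))
    for oy in range(2) for ox in range(2)
]

print(len(C), len(C[0]))  # 4 16
print(C[0])               # [1, 0, -1, 0, 2, 0, -2, 0, 1, 0, -1, 0, 0, 0, 0, 0]
print(out == direct)      # True
```

Each output value depends on 9 of the 16 input pixels, which is the many-to-one relationship noted above.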

Understanding transposed convolutions

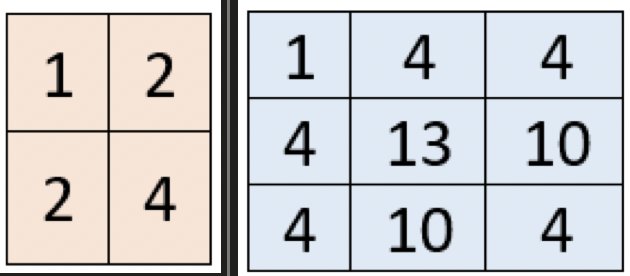

Transposed

- One-to-many
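The one-to-many behaviour can be illustrated by multiplying with the transpose of the convolution matrix. This is a sketch under the same assumed setup as a 3x3 kernel over a 4x4 grid with stride 1 and no padding; `conv_matrix`, `matvec` and `transpose` are hypothetical helpers and the numbers are arbitrary. A 2x2 input with a single non-zero value stamps a full copy of the kernel onto the 4x4 output.

```python
def conv_matrix(kernel, in_h, in_w):
    """Convolution matrix for stride 1, no padding (one row per kernel location)."""
    k_h, k_w = len(kernel), len(kernel[0])
    rows = []
    for oy in range(in_h - k_h + 1):
        for ox in range(in_w - k_w + 1):
            row = [0] * (in_h * in_w)
            for ky in range(k_h):
                for kx in range(k_w):
                    row[(oy + ky) * in_w + (ox + kx)] = kernel[ky][kx]
            rows.append(row)
    return rows


def matvec(m, v):
    """Plain matrix-vector product on nested lists."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def transpose(m):
    """Swap rows and columns of a nested-list matrix."""
    return [list(col) for col in zip(*m)]


kernel = [[1, 2, 3],
          [4, 5, 6],
          [7, 8, 9]]
C = conv_matrix(kernel, 4, 4)  # 4 x 16: maps 16 values to 4
Ct = transpose(C)              # 16 x 4: maps 4 values to 16

small = [1, 0, 0, 0]           # flattened 2x2 input, one non-zero value
up = matvec(Ct, small)
grid = [up[r * 4:(r + 1) * 4] for r in range(4)]
for row in grid:
    print(row)
# [1, 2, 3, 0]
# [4, 5, 6, 0]
# [7, 8, 9, 0]
# [0, 0, 0, 0]

# The transpose restores the shape, not the values: it is not an inverse.
flat = list(range(1, 17))
restored = matvec(Ct, matvec(C, flat))
print(len(restored), restored == flat)  # 16 False
```

One input value influences 9 output pixels, and overlapping stamps from neighbouring inputs are summed. The final check shows that applying the matrix and then its transpose returns the original size but not the original image.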