

- Attention

- Weights the significance of each part of the input

- Including the model's own earlier output, which is fed back in as input

- Similar to RNNs

- Process sequential data

- Translation & text summarisation

- Differences

- Process input all at once

- Have largely replaced LSTMs and gated recurrent units (GRUs), which were often augmented with attention mechanisms

- No recurrent structure
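
The weighting idea above can be sketched as scaled dot-product attention. This is a minimal illustration rather than a full implementation: the toy vectors are made up, and the learned query/key/value projections and multiple heads used in real models are left out.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    Returns (outputs, weights); weights[i][j] is how strongly
    position i attends to position j.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        w = softmax(scores)
        # Output is the weighted average of the value vectors.
        out = [sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))]
        weights.append(w)
        outputs.append(out)
    return outputs, weights

# Toy input (assumed values): three token vectors.
# Self-attention uses the same vectors as queries, keys and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
```

Every position is compared with every other position in one pass, which is why the whole input can be processed at once without a recurrent structure.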

Examples

- BERT

- Bidirectional Encoder Representations from Transformers

- Original GPT

Transformers Explained Visually (Part 1): Overview of Functionality

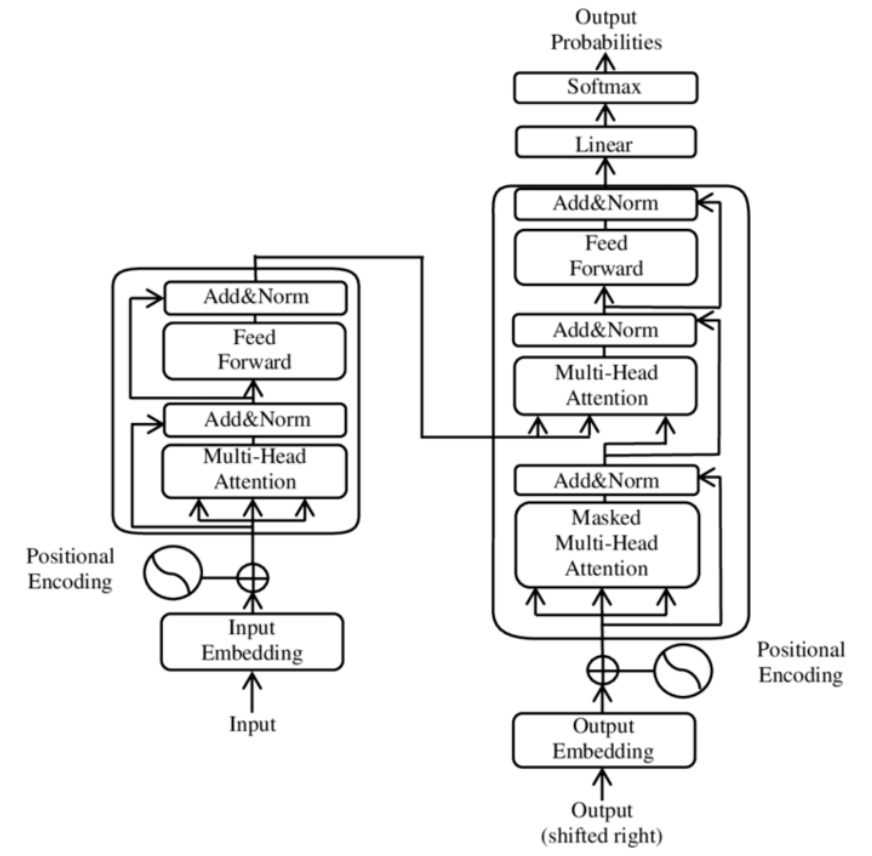

Architecture

Input

- Byte-pair encoding tokeniser splits the text into tokens

- Each token is mapped to a vector via a word embedding

- Positional information is added to each vector
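
A rough sketch of the input steps above. The vocabulary, the embedding values and `D_MODEL` are hypothetical placeholders (a real model learns them), the text is assumed to be already split into subword tokens, and the sinusoidal positional encoding is one common choice rather than the only way to add positional information.

```python
import math

# Hypothetical toy vocabulary of subword tokens; a real byte-pair encoding
# tokeniser learns its merges and vocabulary from a corpus.
VOCAB = {"trans": 0, "form": 1, "er": 2, "s": 3}
D_MODEL = 4

# Hypothetical embedding table: one D_MODEL-sized vector per token id.
# In a trained model these values are learned, not hand-written.
EMBEDDINGS = [
    [0.1, 0.2, 0.3, 0.4],
    [0.5, 0.6, 0.7, 0.8],
    [0.9, 1.0, 1.1, 1.2],
    [1.3, 1.4, 1.5, 1.6],
]

def positional_encoding(position, d_model):
    """Sinusoidal encoding: sine on even dimensions, cosine on odd ones."""
    encoding = []
    for i in range(d_model):
        # Each sine/cosine pair shares one frequency.
        angle = position / (10000 ** ((i - i % 2) / d_model))
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding

def embed(tokens):
    """Map subword tokens to vectors and add positional information."""
    vectors = []
    for position, token in enumerate(tokens):
        word_vector = EMBEDDINGS[VOCAB[token]]
        pos_vector = positional_encoding(position, D_MODEL)
        vectors.append([w + p for w, p in zip(word_vector, pos_vector)])
    return vectors

model_input = embed(["trans", "form", "er", "s"])
```

Because the position is added to the embedding, the same token at two different positions produces two different input vectors, which gives the model access to word order even though it has no recurrence.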

Encoder/Decoder

- Similar to seq2seq models

- Create internal representation

- Encoder layers

- Create encodings that contain information about which parts of input are relevant to each other

- Each subsequent encoder layer receives the previous encoder layer's output

- Decoder layers

- Take the encodings and do the opposite: turn them back into an output sequence

- Use the contextual information incorporated in the encodings to produce the output

- Each decoder layer first attends to the output of previous decoders, then draws information from the encoders

- Both use Attention

- Both use MLP layers for additional processing of outputs

- Both contain residual connections and layer normalisation steps
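
A minimal sketch of one encoder layer built from the pieces listed above: attention, an MLP, residual connections and layer normalisation. Several things here are assumptions for illustration: the weights `W1` and `W2` are hand-written, the attention has no learned projections or multiple heads, and normalisation is applied after each residual addition (other orderings exist). A decoder layer would add masking and a second attention step over the encoder output, which is not shown.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(xs):
    """Unprojected self-attention: each vector attends to all vectors."""
    d = len(xs[0])
    out = []
    for q in xs:
        w = softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs])
        out.append([sum(wj * v[c] for wj, v in zip(w, xs)) for c in range(d)])
    return out

def layer_norm(x, eps=1e-5):
    """Normalise one vector to zero mean and (roughly) unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def mlp(x, w1, w2):
    """Two-layer MLP with a ReLU; each weight row produces one output unit."""
    hidden = [max(0.0, sum(xi * wi for xi, wi in zip(x, row))) for row in w1]
    return [sum(hi * wi for hi, wi in zip(hidden, row)) for row in w2]

def add(a, b):
    """Element-wise sum, used for the residual connections."""
    return [x + y for x, y in zip(a, b)]

def encoder_layer(xs, w1, w2):
    # Sub-layer 1: attention, then residual connection and layer norm.
    attended = self_attention(xs)
    xs = [layer_norm(add(x, a)) for x, a in zip(xs, attended)]
    # Sub-layer 2: MLP applied to each position, again with residual and norm.
    return [layer_norm(add(x, mlp(x, w1, w2))) for x in xs]

# Hypothetical weights: W1 maps 4 -> 3 hidden units, W2 maps 3 -> 4.
W1 = [[0.5, -0.5, 0.25, 0.0], [0.25, 0.75, -0.5, 0.5], [-1.0, 1.0, 0.0, 0.5]]
W2 = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5], [0.0, -0.5, 1.0], [0.25, 0.0, 0.75]]

# Toy input vectors (assumed values), passed through two stacked layers:
# each layer receives the previous layer's output.
encodings = [[1.0, 0.0, 0.5, -0.5], [0.0, 1.0, -0.5, 0.5], [1.0, 1.0, 0.0, 0.0]]
for _ in range(2):
    encodings = encoder_layer(encodings, W1, W2)
```

The residual additions keep each sub-layer's input available alongside its output, and the normalisation keeps the vectors on a comparable scale from layer to layer.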