adding data, writing, added LR range for exp2

|

Before

(image error) Size: 110 KiB After

(image error) Size: 115 KiB

|

BIN

graphs/exp1-test2-10-error-rate-curves.png

Normal file

|

After

(image error) Size: 86 KiB |

BIN

graphs/exp1-test2-10-error-rate-std.png

Normal file

|

After

(image error) Size: 91 KiB |

BIN

graphs/exp1-test2-10-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 124 KiB |

BIN

graphs/exp1-test2-10-test-train-error-rate.png

Normal file

|

After

(image error) Size: 104 KiB |

BIN

graphs/exp1-test2-11-error-rate-curves.png

Normal file

|

After

(image error) Size: 48 KiB |

BIN

graphs/exp1-test2-11-error-rate-std.png

Normal file

|

After

(image error) Size: 56 KiB |

BIN

graphs/exp1-test2-11-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 92 KiB |

BIN

graphs/exp1-test2-11-test-train-error-rate.png

Normal file

|

After

(image error) Size: 79 KiB |

BIN

graphs/exp1-test2-12-error-rate-curves.png

Normal file

|

After

(image error) Size: 86 KiB |

BIN

graphs/exp1-test2-12-error-rate-std.png

Normal file

|

After

(image error) Size: 103 KiB |

BIN

graphs/exp1-test2-12-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 126 KiB |

BIN

graphs/exp1-test2-12-test-train-error-rate.png

Normal file

|

After

(image error) Size: 105 KiB |

BIN

graphs/exp1-test2-13-error-rate-curves.png

Normal file

|

After

(image error) Size: 66 KiB |

BIN

graphs/exp1-test2-13-error-rate-std.png

Normal file

|

After

(image error) Size: 85 KiB |

BIN

graphs/exp1-test2-13-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 109 KiB |

BIN

graphs/exp1-test2-13-test-train-error-rate.png

Normal file

|

After

(image error) Size: 97 KiB |

BIN

graphs/exp1-test2-14-error-rate-curves.png

Normal file

|

After

(image error) Size: 89 KiB |

BIN

graphs/exp1-test2-14-error-rate-std.png

Normal file

|

After

(image error) Size: 98 KiB |

BIN

graphs/exp1-test2-14-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 122 KiB |

BIN

graphs/exp1-test2-14-test-train-error-rate.png

Normal file

|

After

(image error) Size: 107 KiB |

BIN

graphs/exp1-test2-4-error-rate-curves.png

Normal file

|

After

(image error) Size: 71 KiB |

BIN

graphs/exp1-test2-4-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 107 KiB |

BIN

graphs/exp1-test2-4-test-train-error-rate.png

Normal file

|

After

(image error) Size: 96 KiB |

BIN

graphs/exp1-test2-5-error-rate-curves.png

Normal file

|

After

(image error) Size: 108 KiB |

BIN

graphs/exp1-test2-5-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 133 KiB |

BIN

graphs/exp1-test2-5-test-train-error-rate.png

Normal file

|

After

(image error) Size: 109 KiB |

BIN

graphs/exp1-test2-6-error-rate-curves.png

Normal file

|

After

(image error) Size: 60 KiB |

BIN

graphs/exp1-test2-6-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 100 KiB |

BIN

graphs/exp1-test2-6-test-train-error-rate.png

Normal file

|

After

(image error) Size: 87 KiB |

BIN

graphs/exp1-test2-7-error-rate-curves.png

Normal file

|

After

(image error) Size: 84 KiB |

BIN

graphs/exp1-test2-7-error-rate-std.png

Normal file

|

After

(image error) Size: 93 KiB |

BIN

graphs/exp1-test2-7-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 128 KiB |

BIN

graphs/exp1-test2-7-test-train-error-rate.png

Normal file

|

After

(image error) Size: 112 KiB |

BIN

graphs/exp1-test2-8-error-rate-curves.png

Normal file

|

After

(image error) Size: 54 KiB |

BIN

graphs/exp1-test2-8-error-rate-std.png

Normal file

|

After

(image error) Size: 62 KiB |

BIN

graphs/exp1-test2-8-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 104 KiB |

BIN

graphs/exp1-test2-8-test-train-error-rate.png

Normal file

|

After

(image error) Size: 80 KiB |

BIN

graphs/exp1-test2-9-error-rate-curves.png

Normal file

|

After

(image error) Size: 48 KiB |

BIN

graphs/exp1-test2-9-error-rate-std.png

Normal file

|

After

(image error) Size: 53 KiB |

BIN

graphs/exp1-test2-9-test-train-error-rate-std.png

Normal file

|

After

(image error) Size: 95 KiB |

BIN

graphs/exp1-test2-9-test-train-error-rate.png

Normal file

|

After

(image error) Size: 74 KiB |

|

Before

(image error) Size: 90 KiB After

(image error) Size: 94 KiB

|

|

Before

(image error) Size: 22 KiB After

(image error) Size: 67 KiB

|

|

Before

(image error) Size: 56 KiB After

(image error) Size: 50 KiB

|

|

Before

(image error) Size: 27 KiB After

(image error) Size: 86 KiB

|

|

Before

(image error) Size: 28 KiB After

(image error) Size: 83 KiB

|

|

Before

(image error) Size: 30 KiB After

(image error) Size: 95 KiB

|

|

Before

(image error) Size: 15 KiB After

(image error) Size: 50 KiB

|

|

Before

(image error) Size: 19 KiB After

(image error) Size: 59 KiB

|

|

Before

(image error) Size: 68 KiB After

(image error) Size: 68 KiB

|

|

Before

(image error) Size: 26 KiB After

(image error) Size: 68 KiB

|

195

nncw.ipynb

353

nncw.py

@ -1,7 +1,7 @@

|

||||

#!/usr/bin/env python

|

||||

# coding: utf-8

|

||||

|

||||

# In[1]:

|

||||

# In[2]:

|

||||

|

||||

|

||||

import numpy as np

|

||||

@ -32,7 +32,7 @@ fig_dpi = 70

|

||||

#

|

||||

# Read CSVs dumped from MatLab and parse into Pandas DataFrames

|

||||

|

||||

# In[2]:

|

||||

# In[3]:

|

||||

|

||||

|

||||

data = pd.read_csv('features.csv', header=None).T

|

||||

@ -62,17 +62,19 @@ labels.astype(bool).sum(axis=0)

|

||||

#

|

||||

# Using a 50/50 split

|

||||

|

||||

# In[3]:

|

||||

# In[4]:

|

||||

|

||||

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5, stratify=labels)

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5

|

||||

# , stratify=labels

|

||||

)

|

||||

|

||||

|

||||

# ## Generate & Retrieve Model

|

||||

#

|

||||

# Get a shallow model with a single hidden layer of varying nodes

|

||||

|

||||

# In[4]:

|

||||

# In[5]:

|

||||

|

||||

|

||||

def get_model(hidden_nodes=9, activation=lambda: 'sigmoid', weight_init=lambda: 'glorot_uniform'):

|

||||

@ -86,12 +88,13 @@ def get_model(hidden_nodes=9, activation=lambda: 'sigmoid', weight_init=lambda:

|

||||

|

||||

# Get a Keras Tensorboard callback for dumping data for later analysis

|

||||

|

||||

# In[5]:

|

||||

# In[6]:

|

||||

|

||||

|

||||

def tensorboard_callback(path='tensorboard-logs', prefix=''):

|

||||

return tf.keras.callbacks.TensorBoard(log_dir=os.path.normpath(os.path.join(path, prefix + datetime.datetime.now().strftime("%Y%m%d-%H%M%S"))),

|

||||

histogram_freq=1)

|

||||

return tf.keras.callbacks.TensorBoard(

|

||||

log_dir=os.path.normpath(os.path.join(path, prefix + datetime.datetime.now().strftime("%Y%m%d-%H%M%S"))), histogram_freq=1

|

||||

)

|

||||

|

||||

|

||||

# # Example Training

|

||||

@ -144,7 +147,7 @@ model.metrics[1].result()

|

||||

# (Hint2: as epochs increases you can expect the test error rate to reach a minimum and then start increasing, you may need to set the stopping criteria to achieve the desired number of epochs - Hint 3: to find classification error rates for train and test set, you need to check the code from E2, to determine how you may obtain the train and test set patterns)

|

||||

#

|

||||

|

||||

# In[16]:

|

||||

# In[194]:

|

||||

|

||||

|

||||

# hidden_nodes = [2, 8, 16, 24, 32]

|

||||

@ -156,6 +159,7 @@ def evaluate_parameters(hidden_nodes=hidden_nodes,

|

||||

epochs=epochs,

|

||||

batch_size=128,

|

||||

optimizer=lambda: 'sgd',

|

||||

weight_init=lambda: 'glorot_uniform',

|

||||

loss=lambda: 'categorical_crossentropy',

|

||||

metrics=['accuracy'],

|

||||

callbacks=None,

|

||||

@ -176,7 +180,7 @@ def evaluate_parameters(hidden_nodes=hidden_nodes,

|

||||

if print_params:

|

||||

print(f"Nodes: {hn}, Epochs: {e}")

|

||||

|

||||

model = get_model(hn)

|

||||

model = get_model(hn, weight_init=weight_init)

|

||||

model.compile(

|

||||

optimizer=optimizer(),

|

||||

loss=loss(),

|

||||

@ -266,7 +270,7 @@ ax.set_title("Training vs Validation Accuracy")

|

||||

ax.plot(single_history['accuracy'], label="train", lw=2)

|

||||

ax.plot(single_history['val_accuracy'], label="validation", lw=2, c=(1,0,0))

|

||||

ax.set_xlabel("Epochs")

|

||||

ax.set_ylim(0, 1)

|

||||

# ax.set_ylim(0, 1)

|

||||

ax.grid()

|

||||

ax.legend()

|

||||

|

||||

@ -296,7 +300,7 @@ ax.set_xlabel('Epochs')

|

||||

ax.set_ylabel('Hidden Nodes')

|

||||

ax.set_zlabel('Accuracy')

|

||||

ax.view_init(30, -110)

|

||||

ax.set_zlim([0, 1])

|

||||

# ax.set_zlim([0, 1])

|

||||

fig.colorbar(surf, shrink=0.3, aspect=6)

|

||||

|

||||

plt.tight_layout()

|

||||

@ -348,31 +352,42 @@ plt.show()

|

||||

#

|

||||

# ### Pickle Results

|

||||

#

|

||||

# | test | learning rate | momentum | batch size | hidden nodes | epochs |

|

||||

# | --- | --- | --- | --- | --- | --- |

|

||||

# |1|0.01|0|128|2, 8, 12, 16, 24, 32, 64, 128, 256|1, 2, 4, 8, 16, 32, 64, 100, 150, 200|

|

||||

# |2|0.5|0.1|128|2, 8, 12, 16, 24, 32, 64, 128|1, 2, 4, 8, 16, 32, 64, 100|

|

||||

# |3|1|0.3|20|2, 8, 12, 16, 24, 32, 64, 128|1, 2, 4, 8, 16, 32, 64, 100|

|

||||

# |4|0.6|0.1|20|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200|

|

||||

# |5|0.05|0.01|20|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200|

|

||||

# |6|1.5|0.5|20|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200|

|

||||

# | test | learning rate | momentum | batch size | hidden nodes | epochs | statified |

|

||||

# | --- | --- | --- | --- | --- | --- | --- |

|

||||

# |1|0.01|0|128|2, 8, 12, 16, 24, 32, 64, 128, 256|1, 2, 4, 8, 16, 32, 64, 100, 150, 200| |

|

||||

# |2|0.5|0.1|128|2, 8, 12, 16, 24, 32, 64, 128|1, 2, 4, 8, 16, 32, 64, 100| |

|

||||

# |3|1|0.3|20|2, 8, 12, 16, 24, 32, 64, 128|1, 2, 4, 8, 16, 32, 64, 100| |

|

||||

# |4|0.6|0.1|20|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200| |

|

||||

# |5|0.05|0.01|20|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200| |

|

||||

# |6|1.5|0.5|20|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200| |

|

||||

# |2-1|0.01|0|35|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200| n |

|

||||

# |2-2|0.1|0|35|2, 16, 32|1, 2, 4, 8, 16, 32, 64, 100| n |

|

||||

# |2-3|0.15|0|35|2, 16, 32|1, 2, 4, 8, 16, 32, 64, 100| n |

|

||||

# |2-4|0.08|0.9|35|1, 2, 8, 16, 32, 64|1, 2, 4, 8, 16, 32, 64, 100| n |

|

||||

# |2-5|0.08|0.2|35|1, 2, 8, 16, 32, 64|1, 2, 4, 8, 16, 32, 64, 100| n |

|

||||

# |2-6|0.01|0.1|35|2, 8, 16, 24, 32|1, 2, 4, 8, 16, 32, 64, 100, 150, 200| n |

|

||||

|

||||

# In[30]:

|

||||

# In[214]:

|

||||

|

||||

|

||||

multi_param_results = list()

|

||||

multi_iterations = 30

|

||||

for i in range(multi_iterations):

|

||||

print(f"Iteration {i+1}/{multi_iterations}")

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5, stratify=labels)

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5

|

||||

# , stratify=labels

|

||||

)

|

||||

multi_param_results.append(list(evaluate_parameters(dtrain=data_train,

|

||||

dtest=data_test,

|

||||

ltrain=labels_train,

|

||||

ltest=labels_test,

|

||||

optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=1.5, momentum=0.5),

|

||||

hidden_nodes=[2, 16, 32],

|

||||

epochs=[1, 2, 4, 8, 16, 32, 64, 100],

|

||||

optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=0.15, momentum=0.0),

|

||||

weight_init=lambda: 'random_uniform',

|

||||

return_model=False,

|

||||

print_params=False,

|

||||

batch_size=20)))

|

||||

batch_size=35)))

|

||||

|

||||

|

||||

# ### Accuracy Tensor

|

||||

@ -381,7 +396,7 @@ for i in range(multi_iterations):

|

||||

#

|

||||

# (Iterations x [Test/Train] x Number of nodes x Number of epochs)

|

||||

|

||||

# In[173]:

|

||||

# In[301]:

|

||||

|

||||

|

||||

multi_param_epochs = sorted(list({i["epochs"] for i in multi_param_results[0]}))

|

||||

@ -405,20 +420,32 @@ print(f'Epochs: {multi_param_epochs}')

|

||||

print()

|

||||

print(f'Loss: {multi_param_results[0][0]["loss"]}')

|

||||

print(f'LR: {multi_param_results[0][0]["optimizer"]["learning_rate"]:.3}')

|

||||

print(f'Momentum: {multi_param_results[0][0]["optimizer"]["momentum"]:.3}')

|

||||

print(f'Momentum: {multi_param_results[0][0]["optimizer"]["momentum"]:}')

|

||||

|

||||

|

||||

# #### Export/Import Test Sets

|

||||

#

|

||||

# Export mean and standard deviations for retrieval and visualisation

|

||||

pickle.dump(multi_param_results, open("result.p", "wb"))exp1_testname = 'exp1-test5'

|

||||

multi_param_results = pickle.load(open(f"results/{exp1_testname}.p", "rb"))np.savetxt("exp1-mean.csv", mean_param_accuracy, delimiter=',')

|

||||

|

||||

# In[215]:

|

||||

|

||||

|

||||

pickle.dump(multi_param_results, open("results/exp1-test2-3.p", "wb"))

|

||||

|

||||

|

||||

# In[300]:

|

||||

|

||||

|

||||

exp1_testname = 'exp1-test1'

|

||||

multi_param_results = pickle.load(open(f"results/{exp1_testname}.p", "rb"))

|

||||

|

||||

np.savetxt("exp1-mean.csv", mean_param_accuracy, delimiter=',')

|

||||

np.savetxt("exp1-std.csv", std_param_accuracy, delimiter=',')mean_param_accuracy = np.loadtxt("results/test1-exp1-mean.csv", delimiter=',')

|

||||

std_param_accuracy = np.loadtxt("results/test1-exp1-std.csv", delimiter=',')

|

||||

# multi_iterations = 30

|

||||

# ### Best Results

|

||||

|

||||

# In[141]:

|

||||

# In[302]:

|

||||

|

||||

|

||||

best_param_accuracy_idx = np.unravel_index(np.argmax(mean_param_accuracy[0, :, :]), mean_param_accuracy.shape)

|

||||

@ -431,7 +458,7 @@ print(f'Nodes: {best_param_accuracy_nodes}, Epochs: {best_param_accuracy_epochs}

|

||||

|

||||

# ### Test Accuracy Surface

|

||||

|

||||

# In[174]:

|

||||

# In[303]:

|

||||

|

||||

|

||||

X, Y = np.meshgrid(multi_param_epochs, multi_param_nodes)

|

||||

@ -447,7 +474,7 @@ ax.set_xlabel('Epochs')

|

||||

ax.set_ylabel('Hidden Nodes')

|

||||

ax.set_zlabel('Accuracy')

|

||||

ax.view_init(30, -110)

|

||||

ax.set_zlim([0, 1])

|

||||

# ax.set_zlim([0, 1])

|

||||

fig.colorbar(surf, shrink=0.3, aspect=6)

|

||||

|

||||

plt.tight_layout()

|

||||

@ -457,15 +484,15 @@ plt.show()

|

||||

|

||||

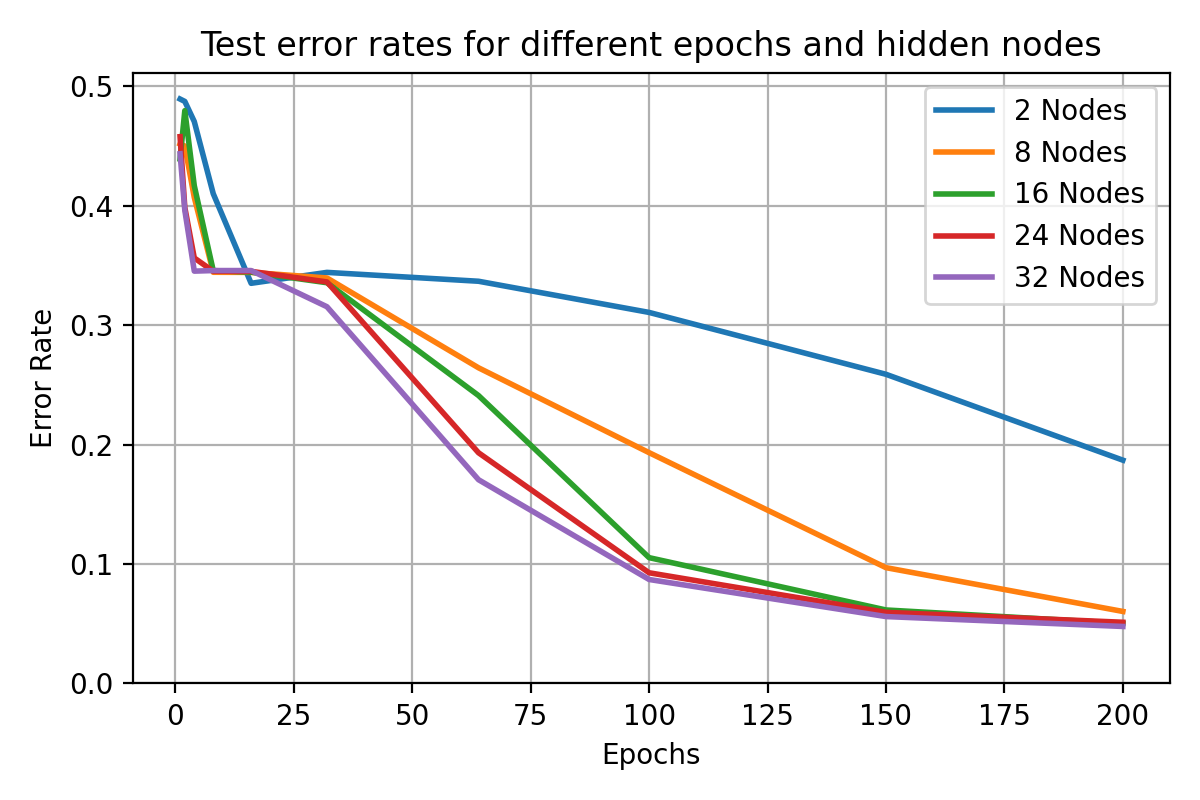

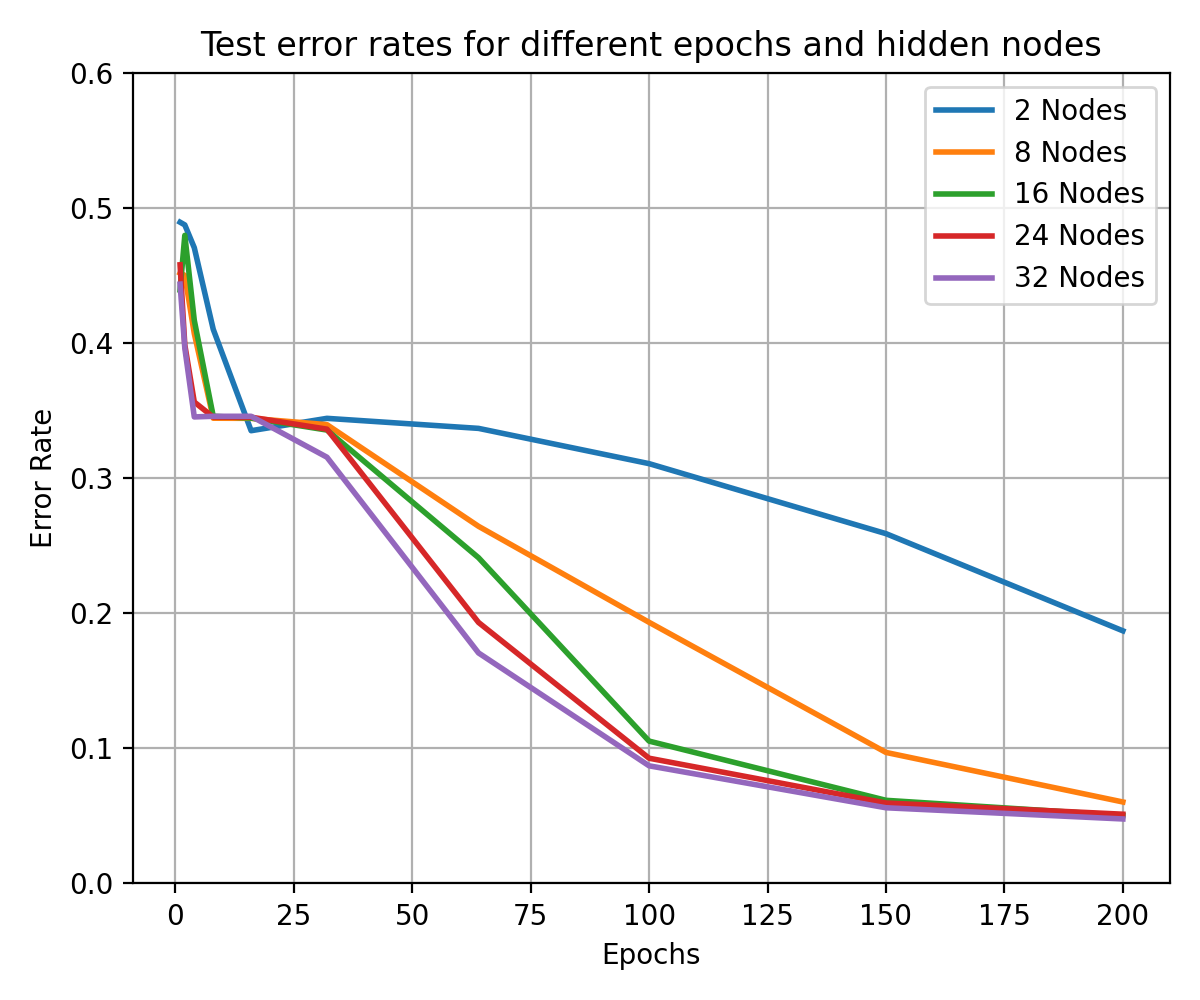

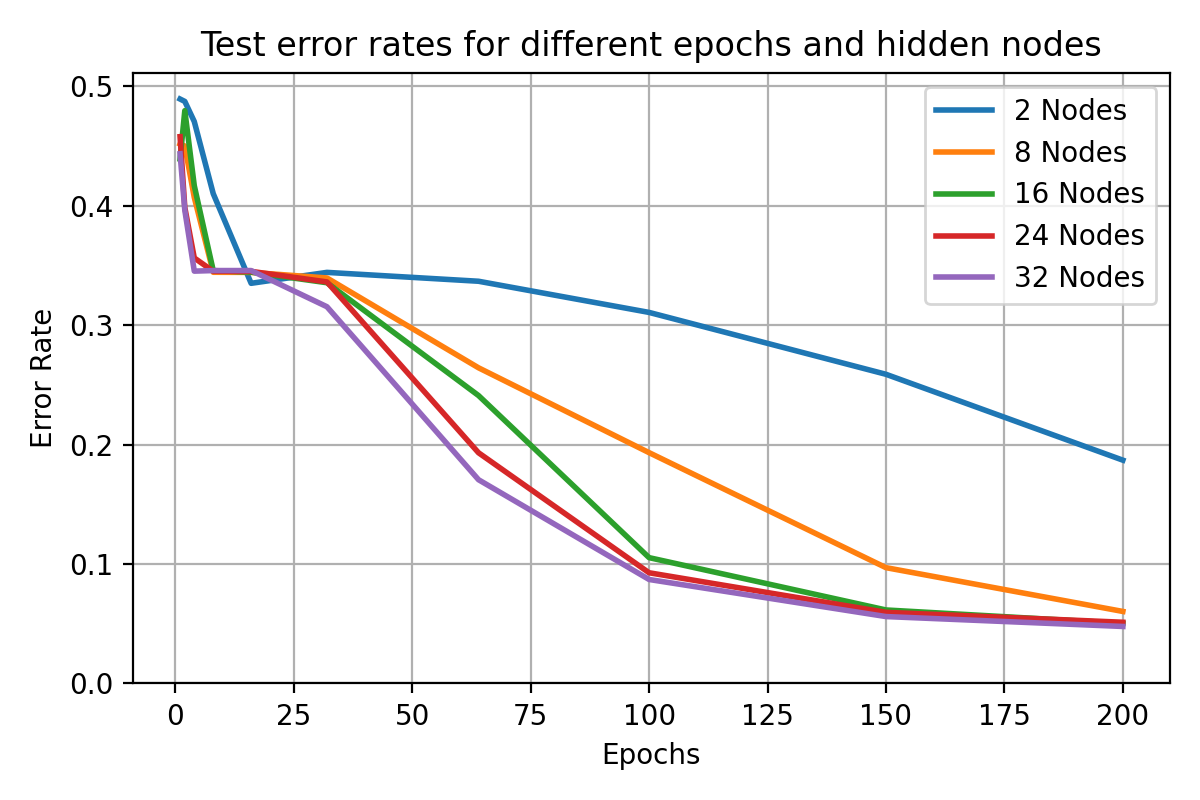

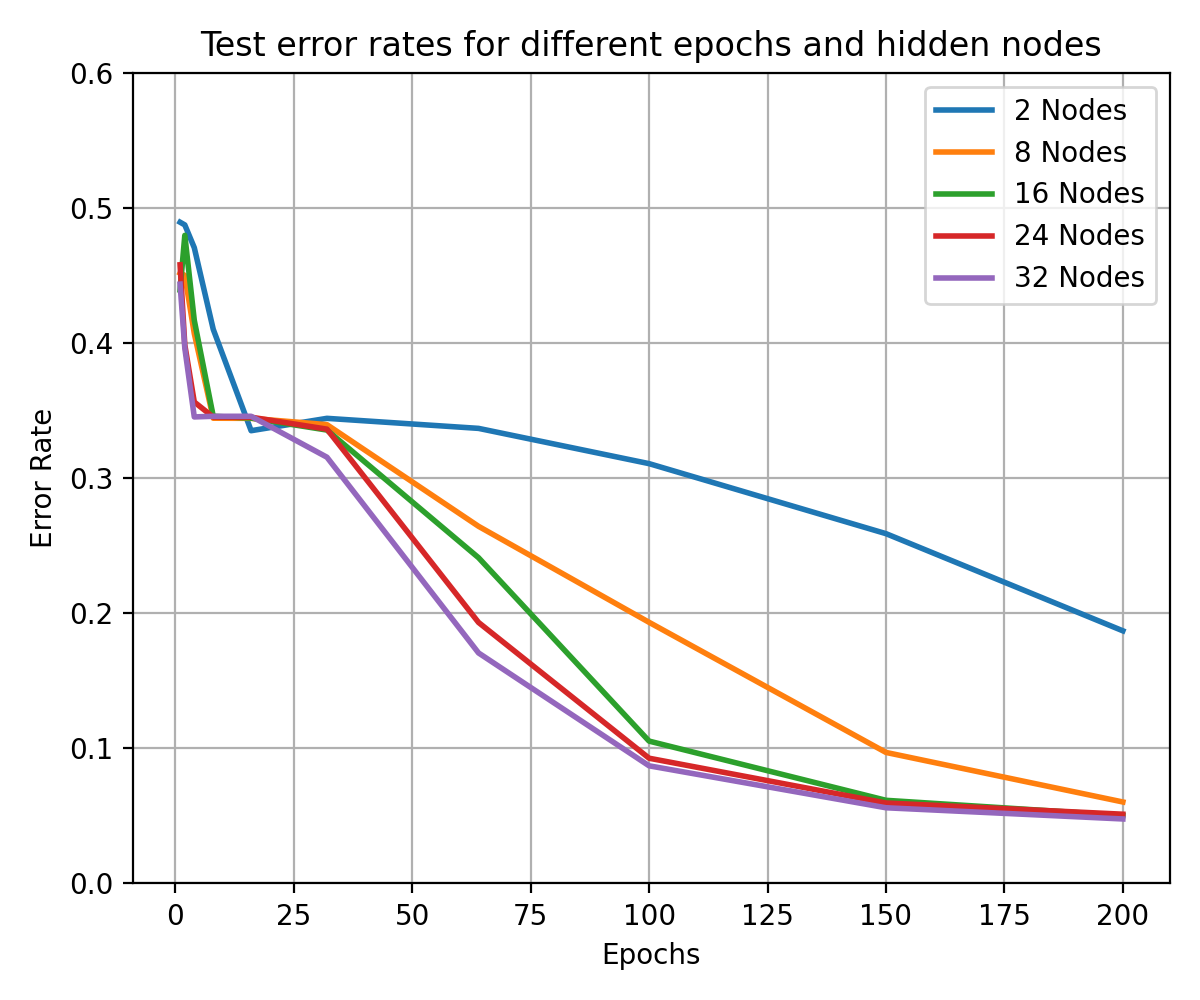

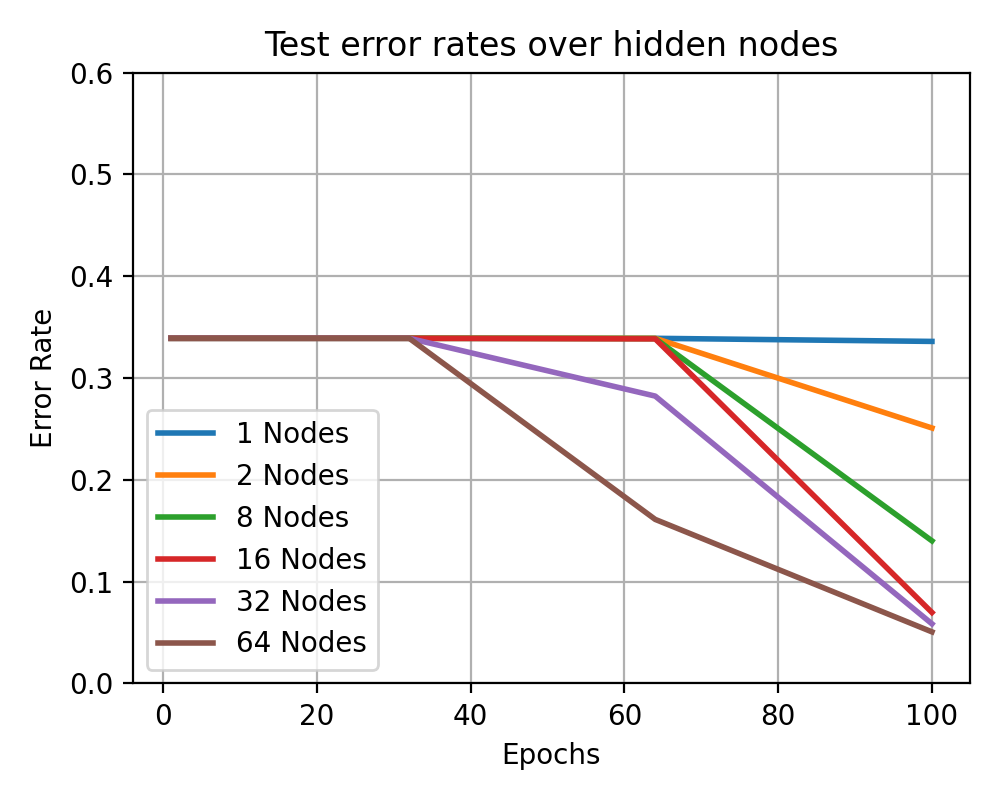

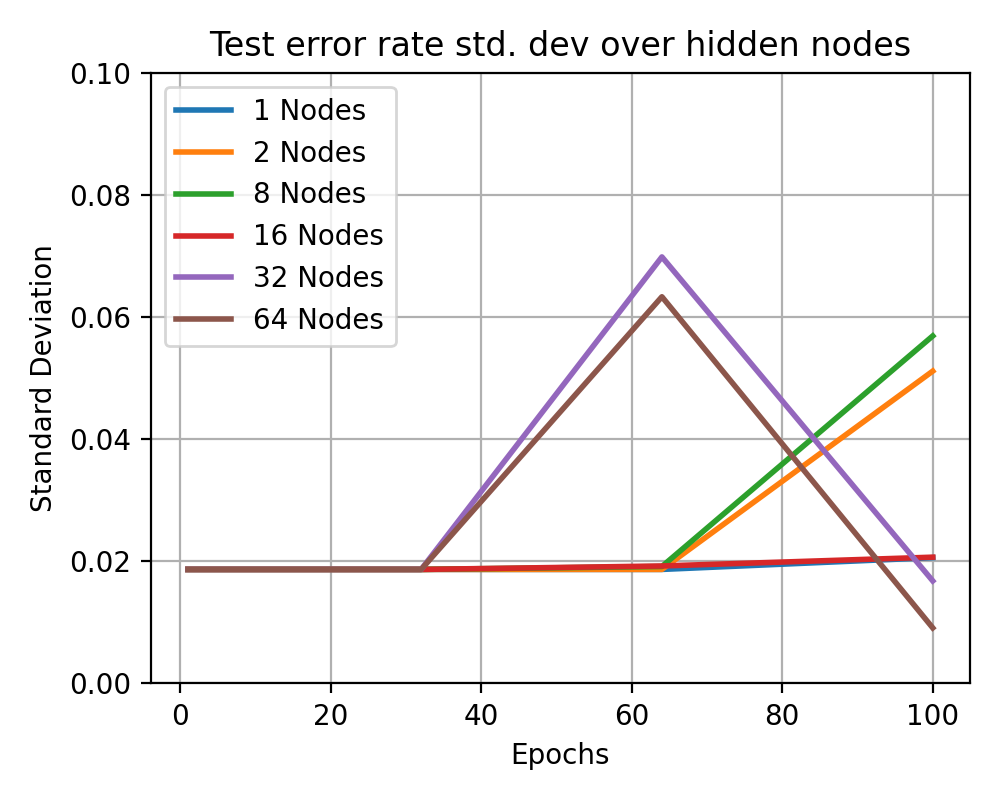

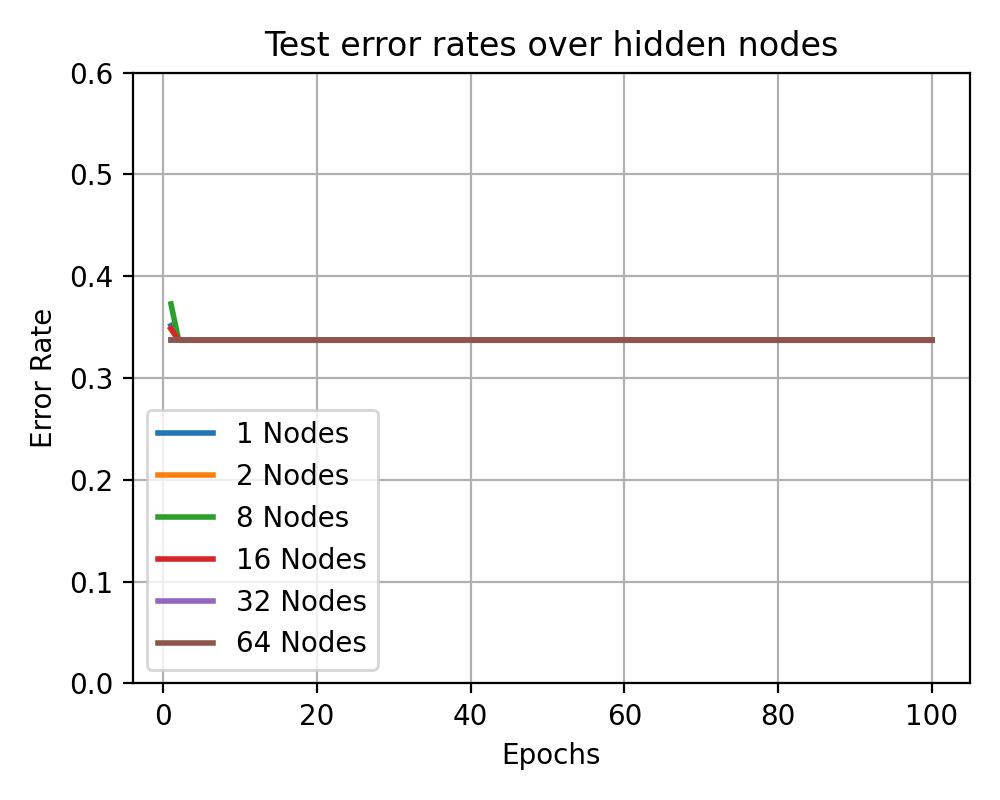

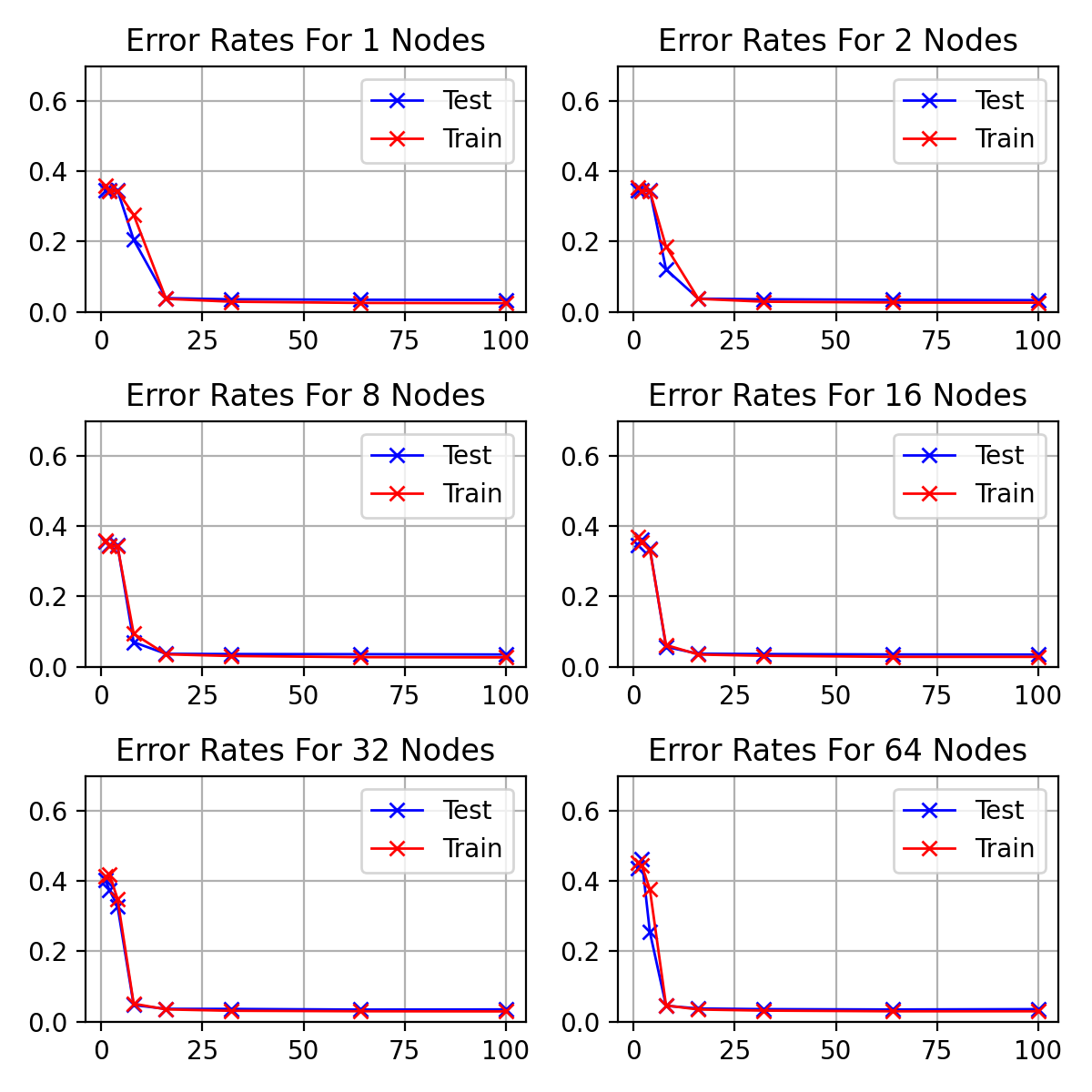

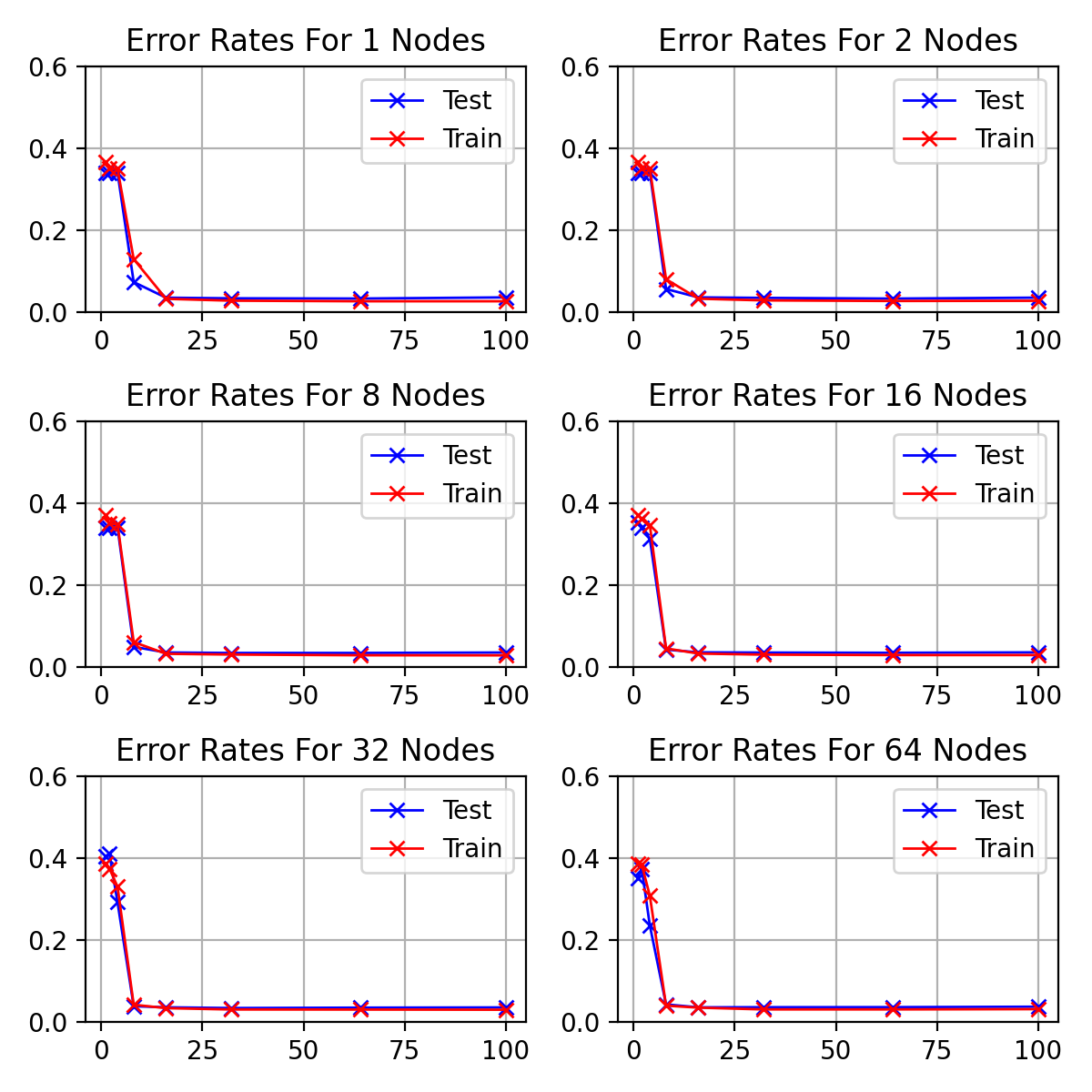

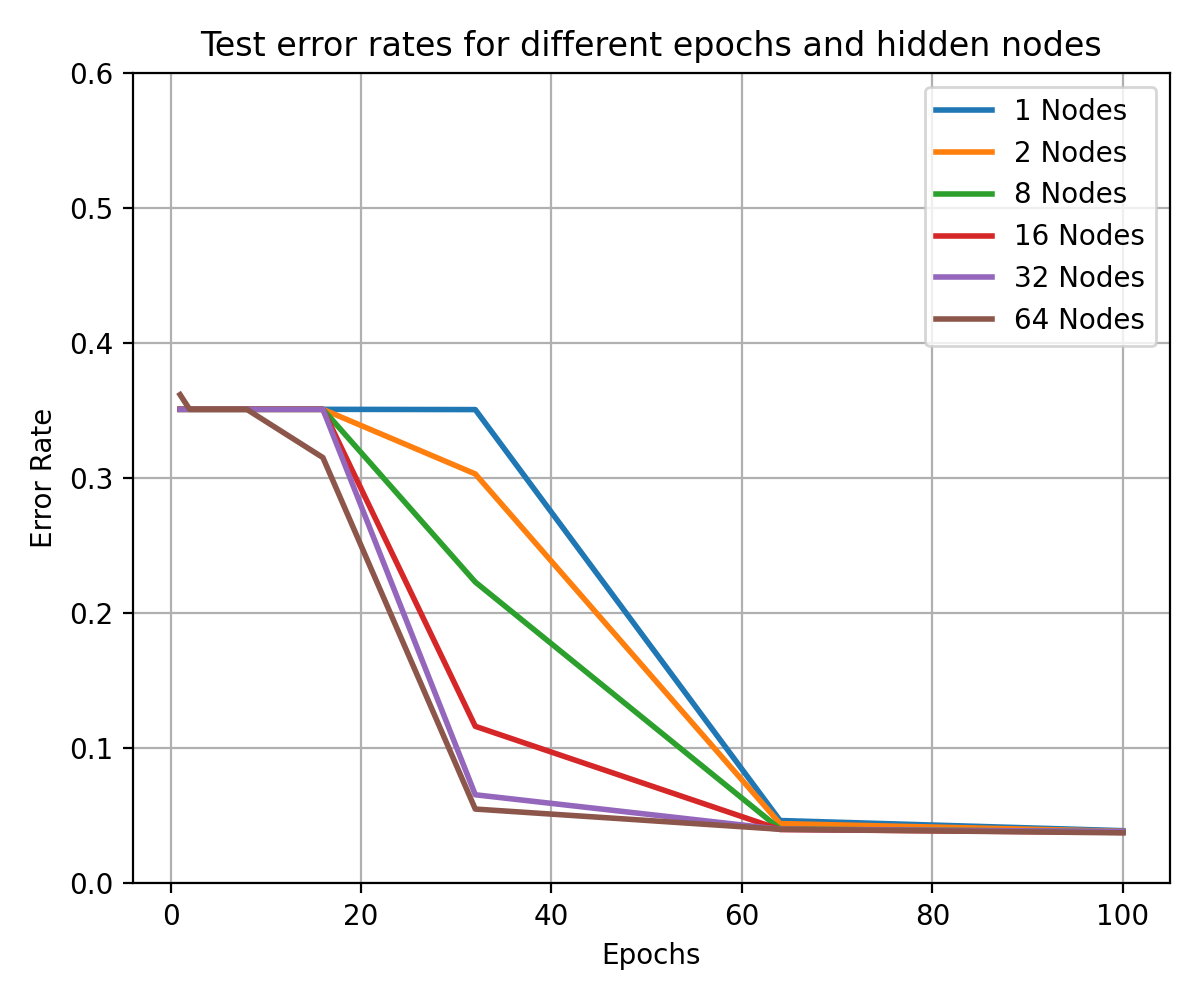

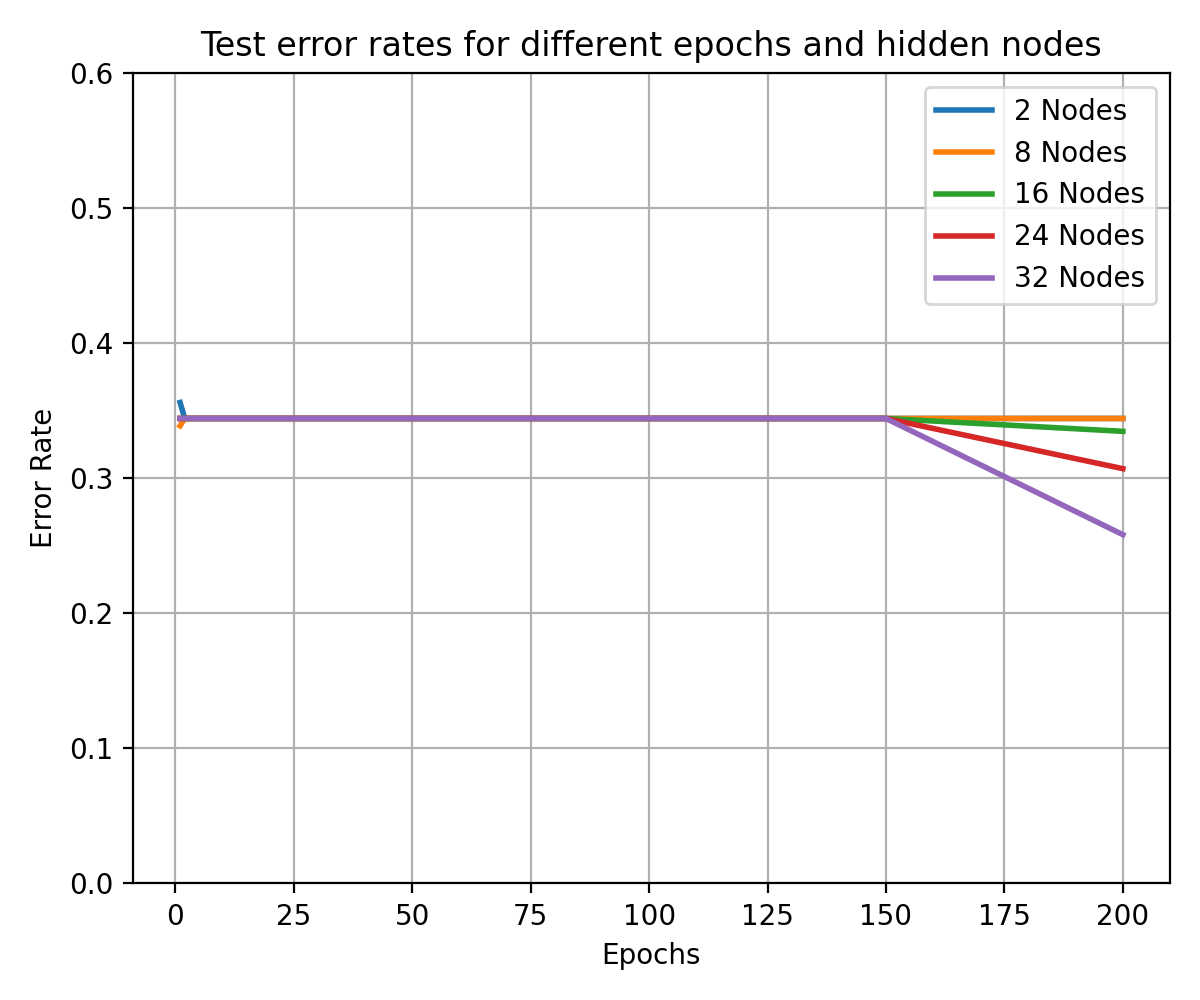

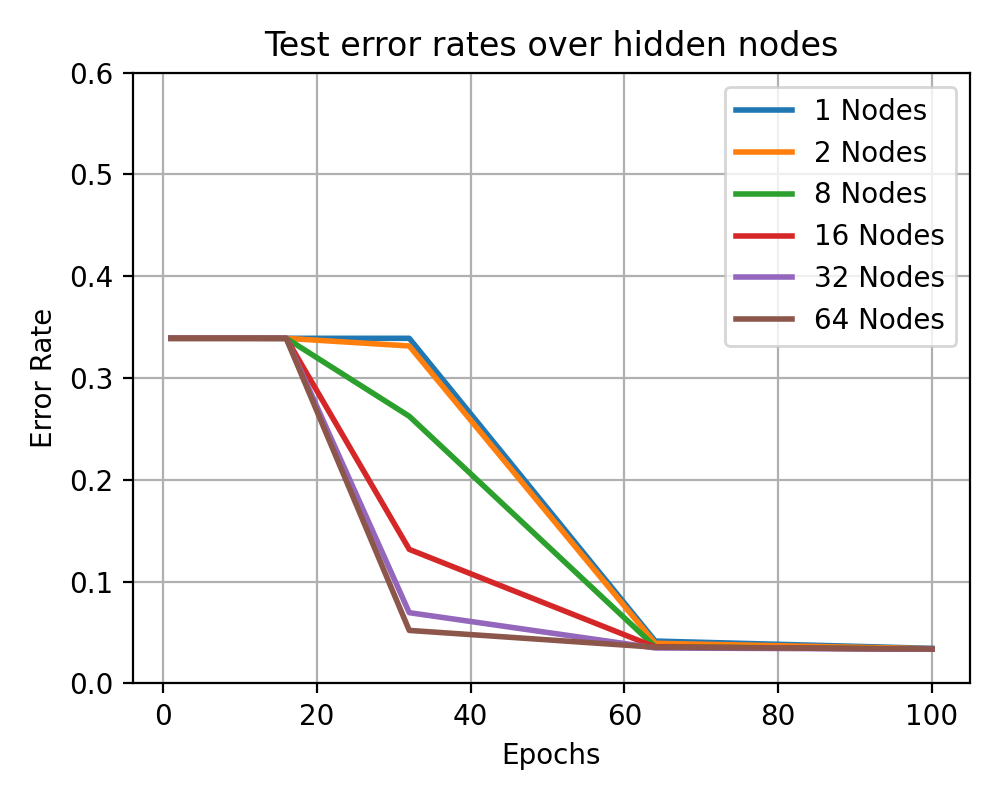

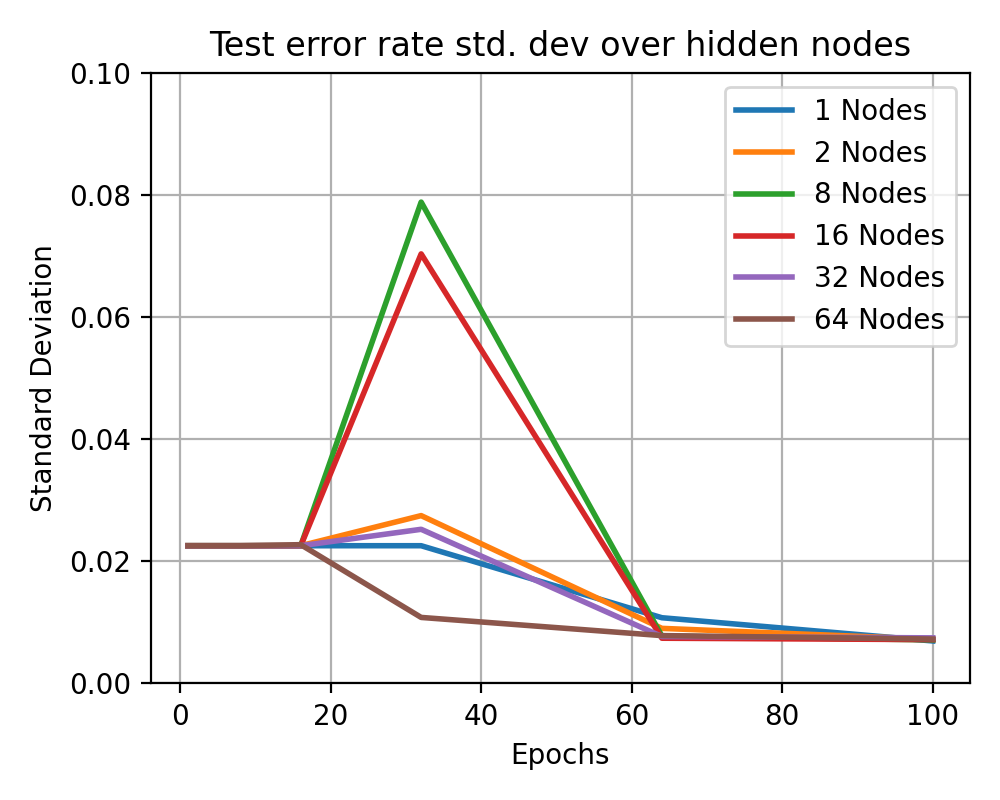

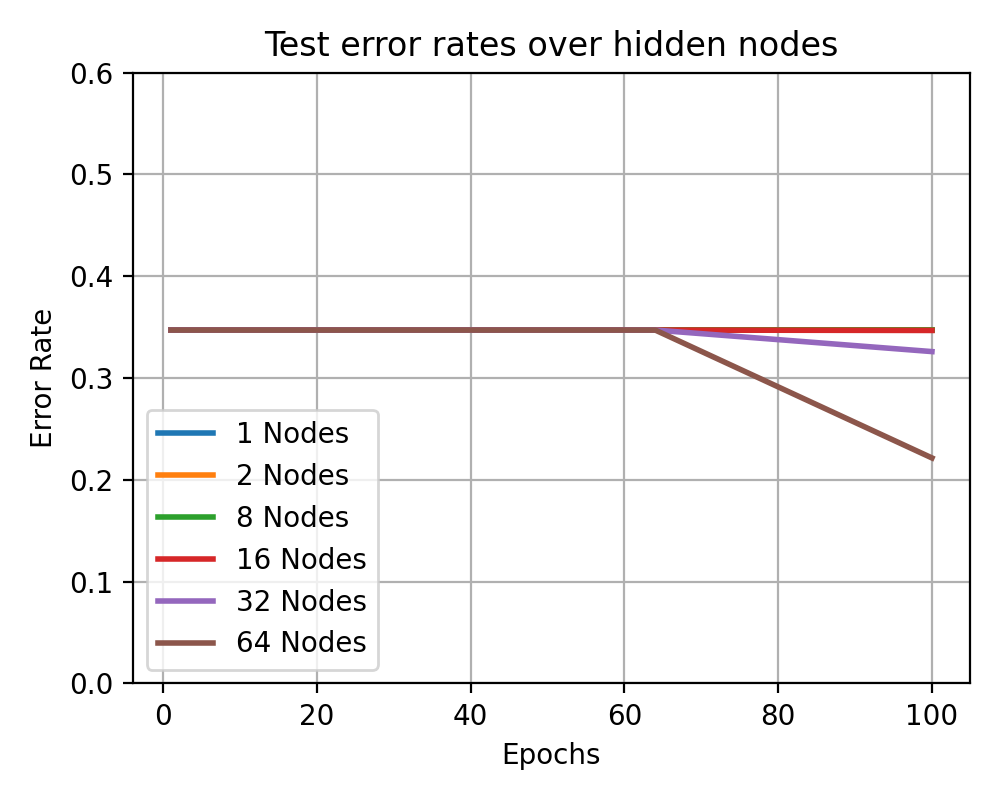

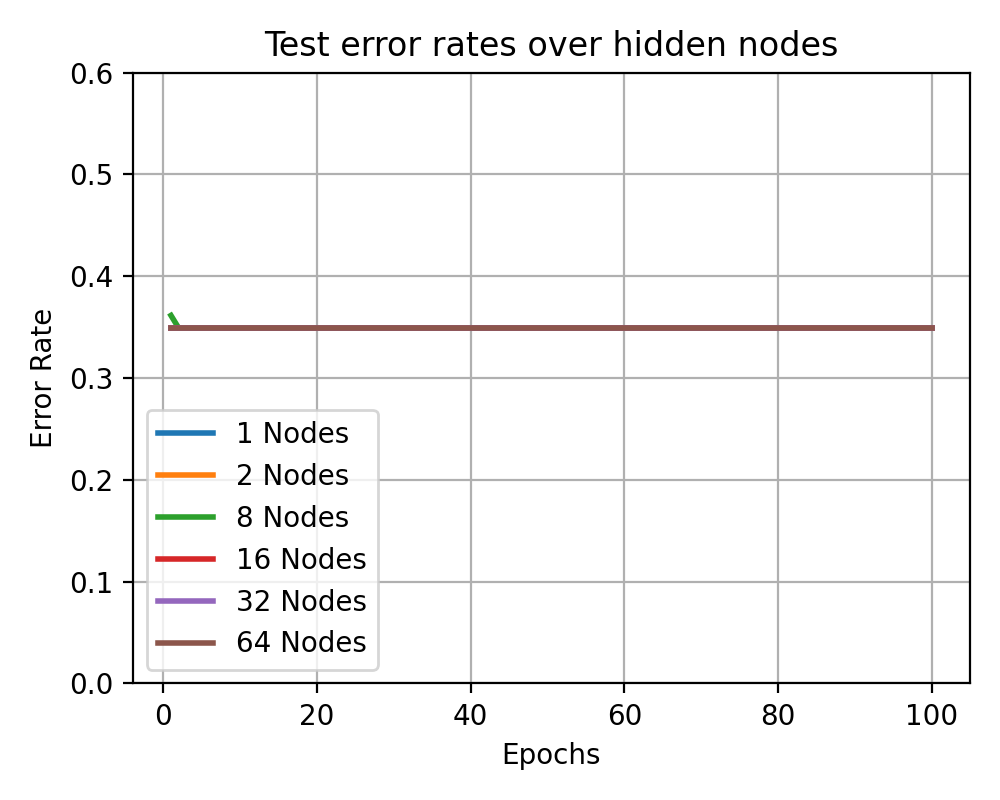

# ### Test Error Rate Curves

|

||||

|

||||

# In[175]:

|

||||

# In[313]:

|

||||

|

||||

|

||||

# fig = plt.figure(figsize=(7, 5))

|

||||

fig = plt.figure()

|

||||

fig = plt.figure(figsize=(6, 5))

|

||||

# fig = plt.figure()

|

||||

fig.set_dpi(fig_dpi)

|

||||

|

||||

for idx, layer in enumerate(mean_param_accuracy[0, :, :]):

|

||||

# plt.errorbar(epochs, 1- layer, yerr=std_param_accuracy[idx], label=f'{hidden_nodes[idx]} Nodes')

|

||||

for idx, (layer, std) in enumerate(zip(mean_param_accuracy[0, :, :], std_param_accuracy[0, :, :])):

|

||||

# plt.errorbar(multi_param_epochs, 1 - layer, yerr=std, capsize=4, label=f'{multi_param_nodes[idx]} Nodes')

|

||||

plt.plot(multi_param_epochs, 1 - layer, '-', label=f'{multi_param_nodes[idx]} Nodes', lw=2)

|

||||

|

||||

plt.legend()

|

||||

@ -473,19 +500,19 @@ plt.grid()

|

||||

plt.title(f"Test error rates for different epochs and hidden nodes")

|

||||

plt.xlabel("Epochs")

|

||||

plt.ylabel("Error Rate")

|

||||

plt.ylim(0)

|

||||

plt.ylim(0, 0.6)

|

||||

|

||||

plt.tight_layout()

|

||||

# plt.savefig(f'graphs/{exp1_testname}-error-rate-curves.png')

|

||||

plt.savefig(f'graphs/{exp1_testname}-error-rate-curves.png')

|

||||

plt.show()

|

||||

|

||||

|

||||

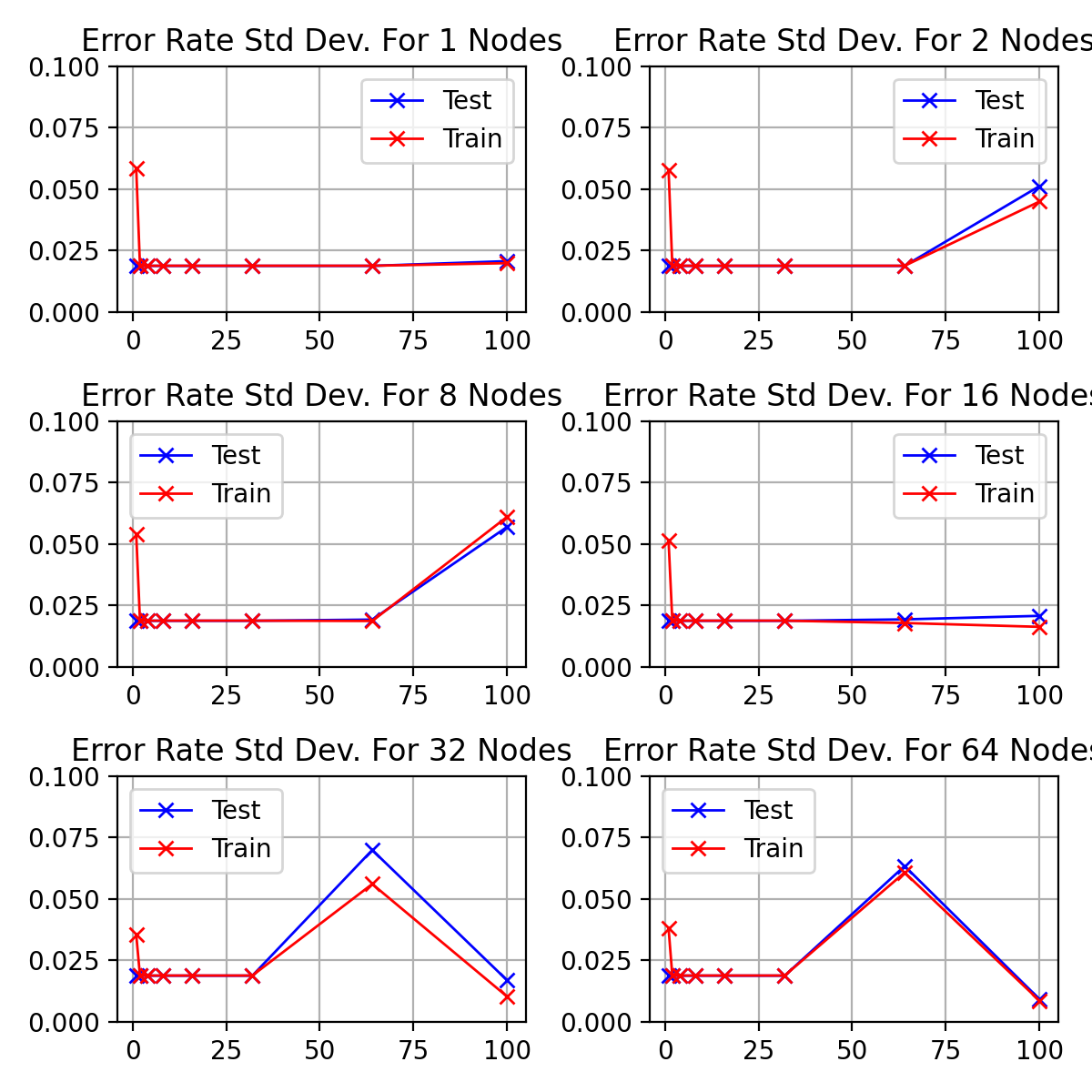

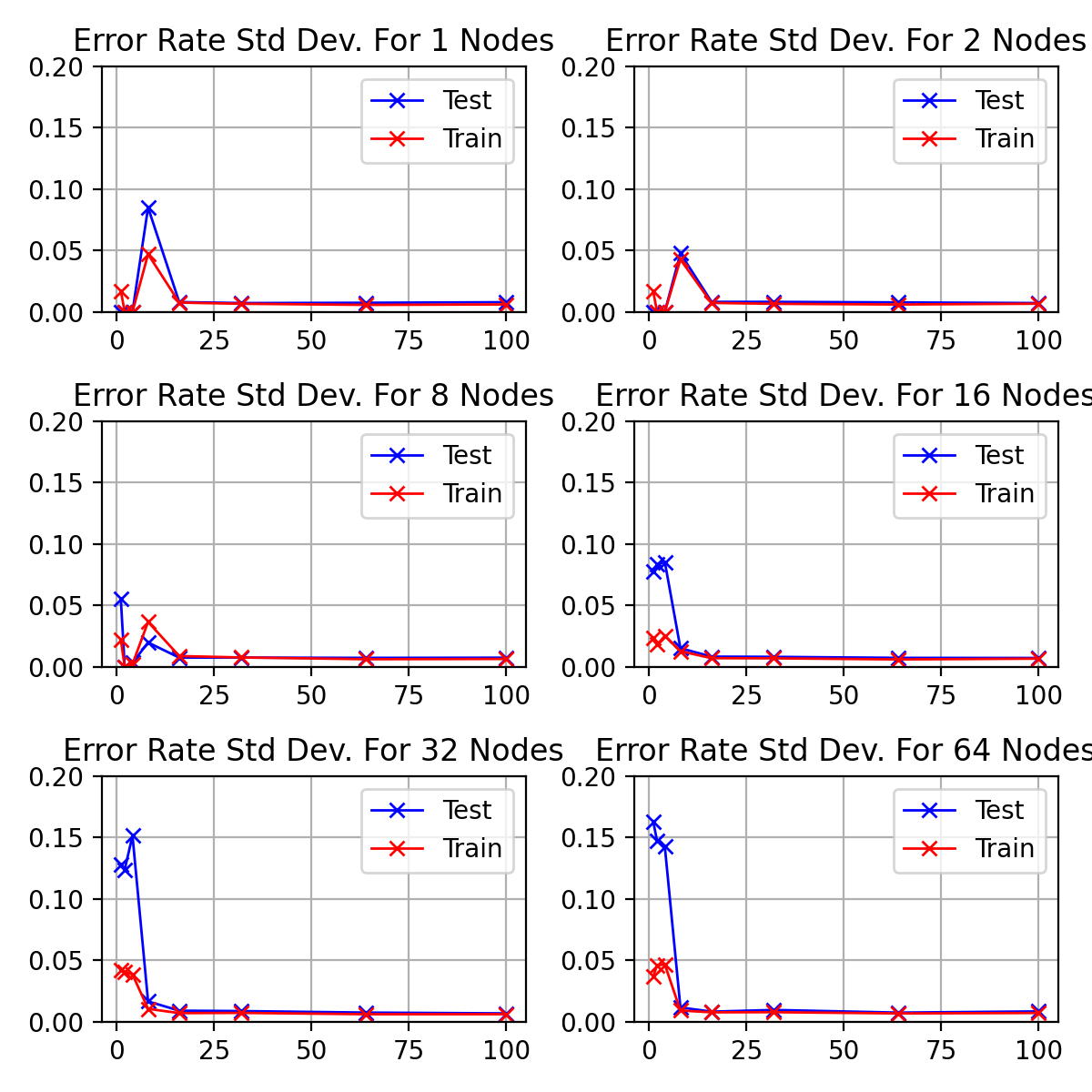

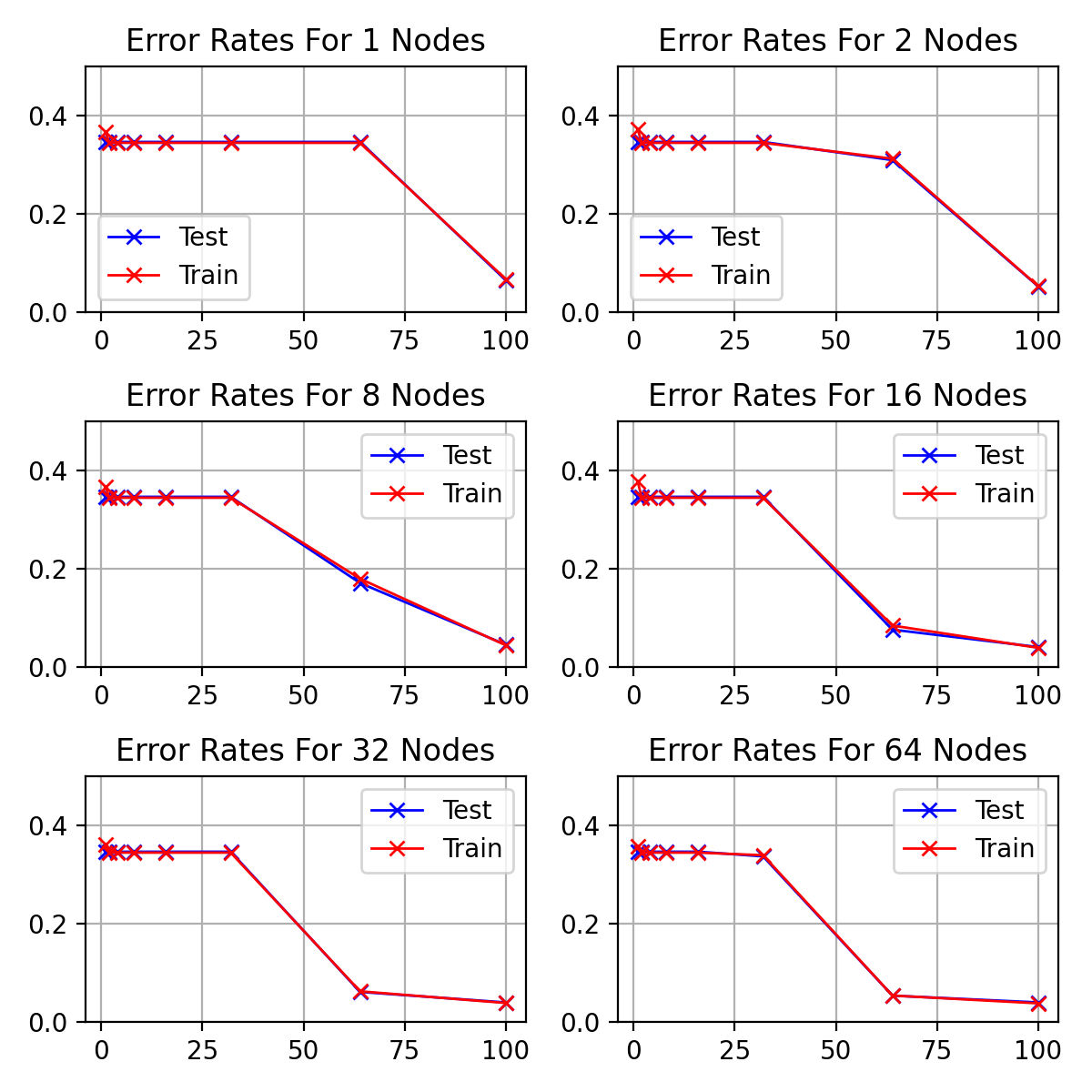

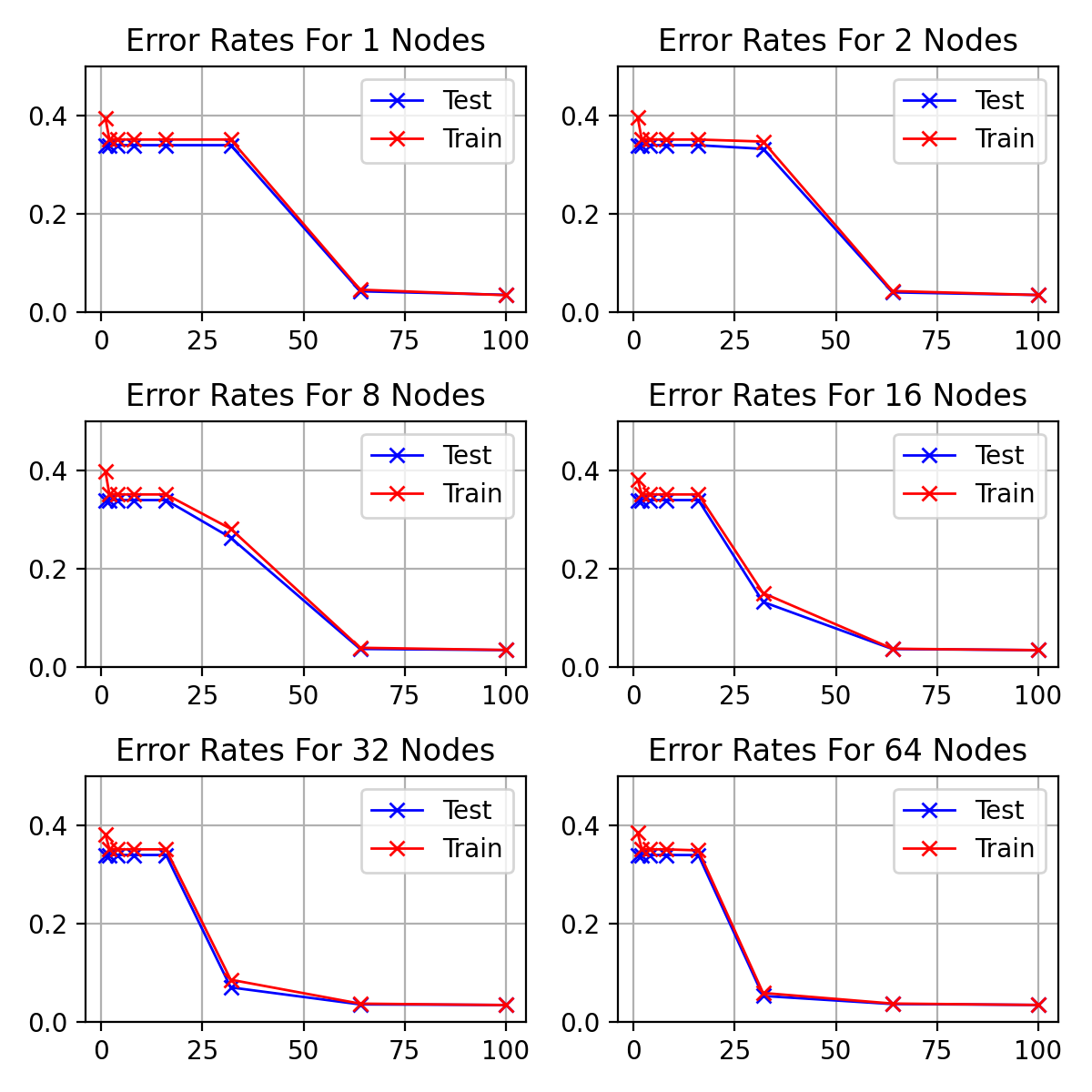

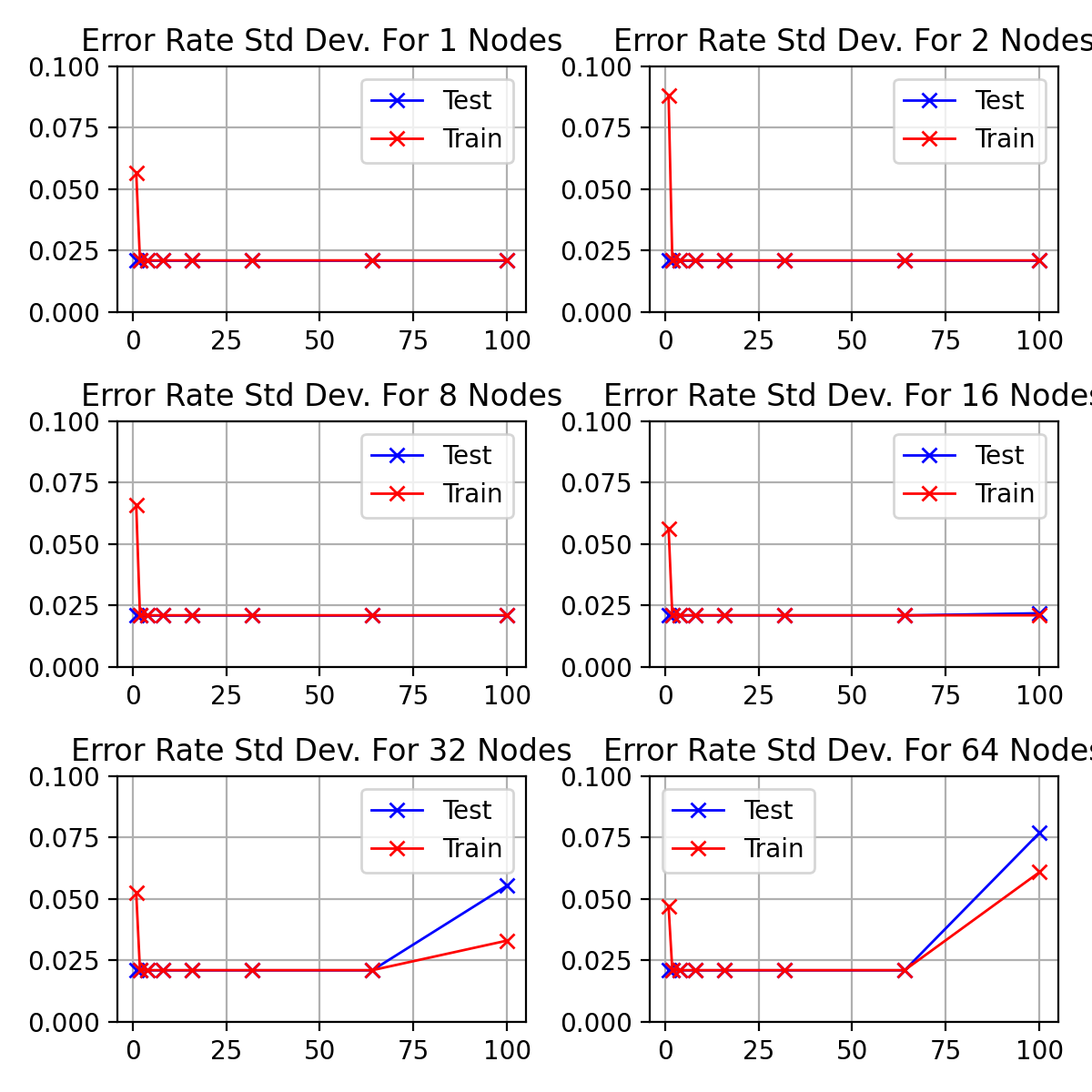

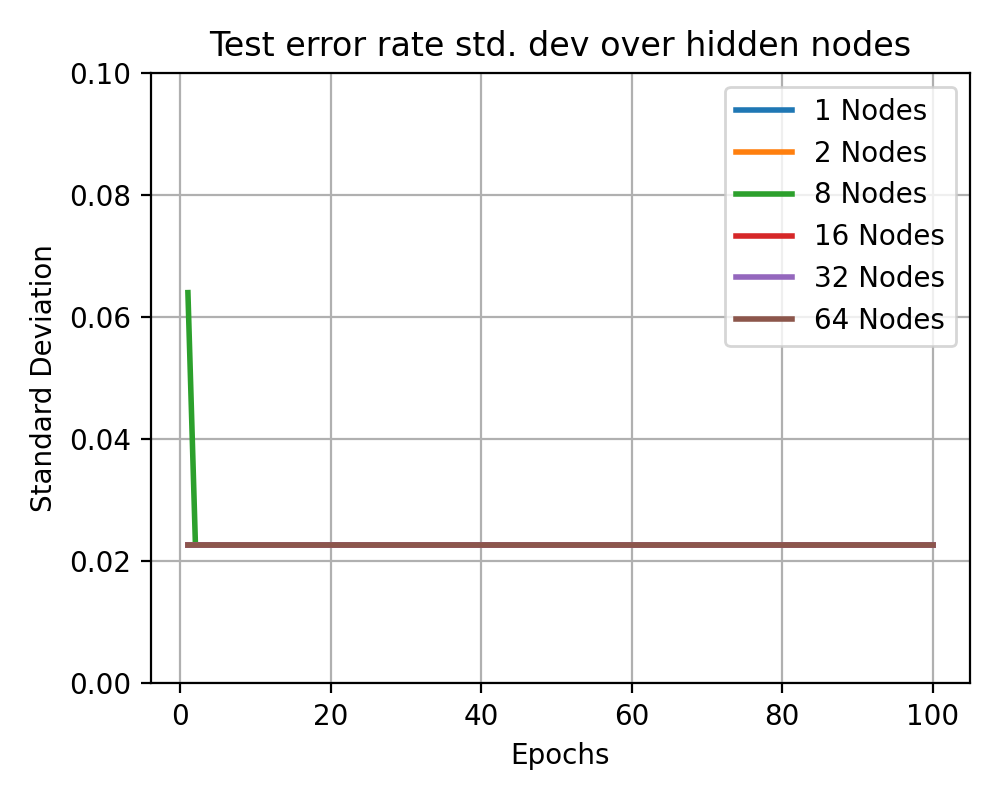

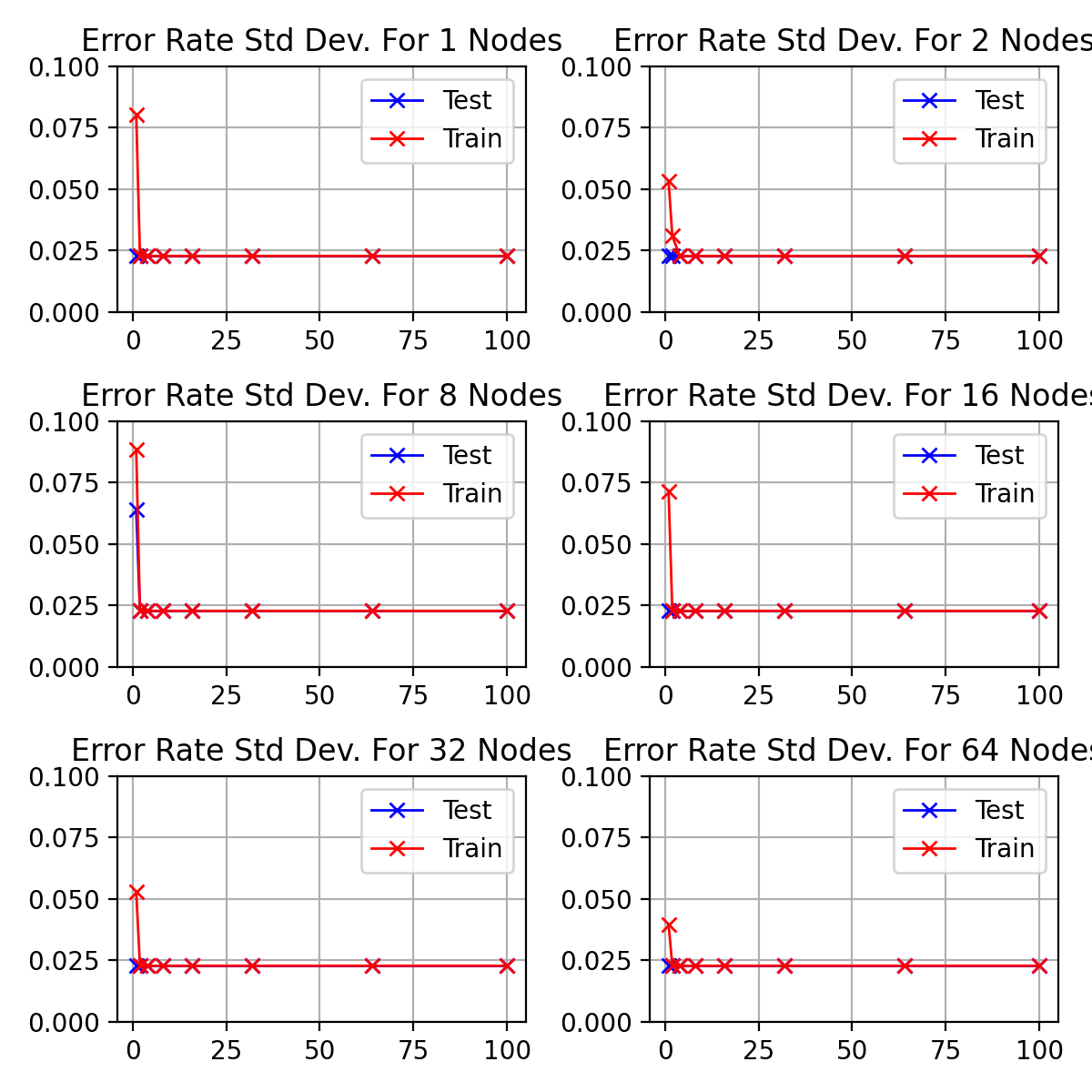

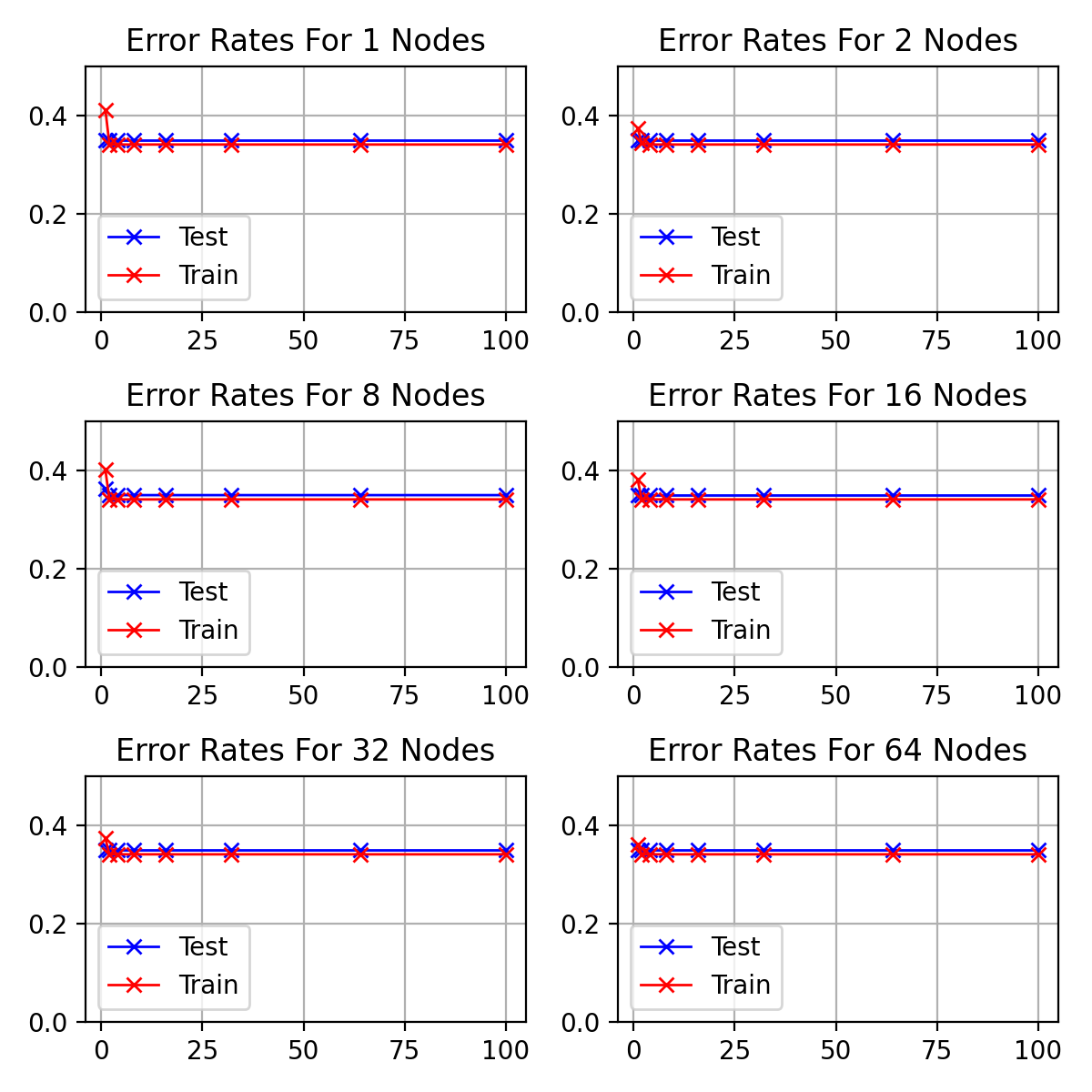

# ### Test/Train Error Over Nodes

|

||||

|

||||

# In[169]:

|

||||

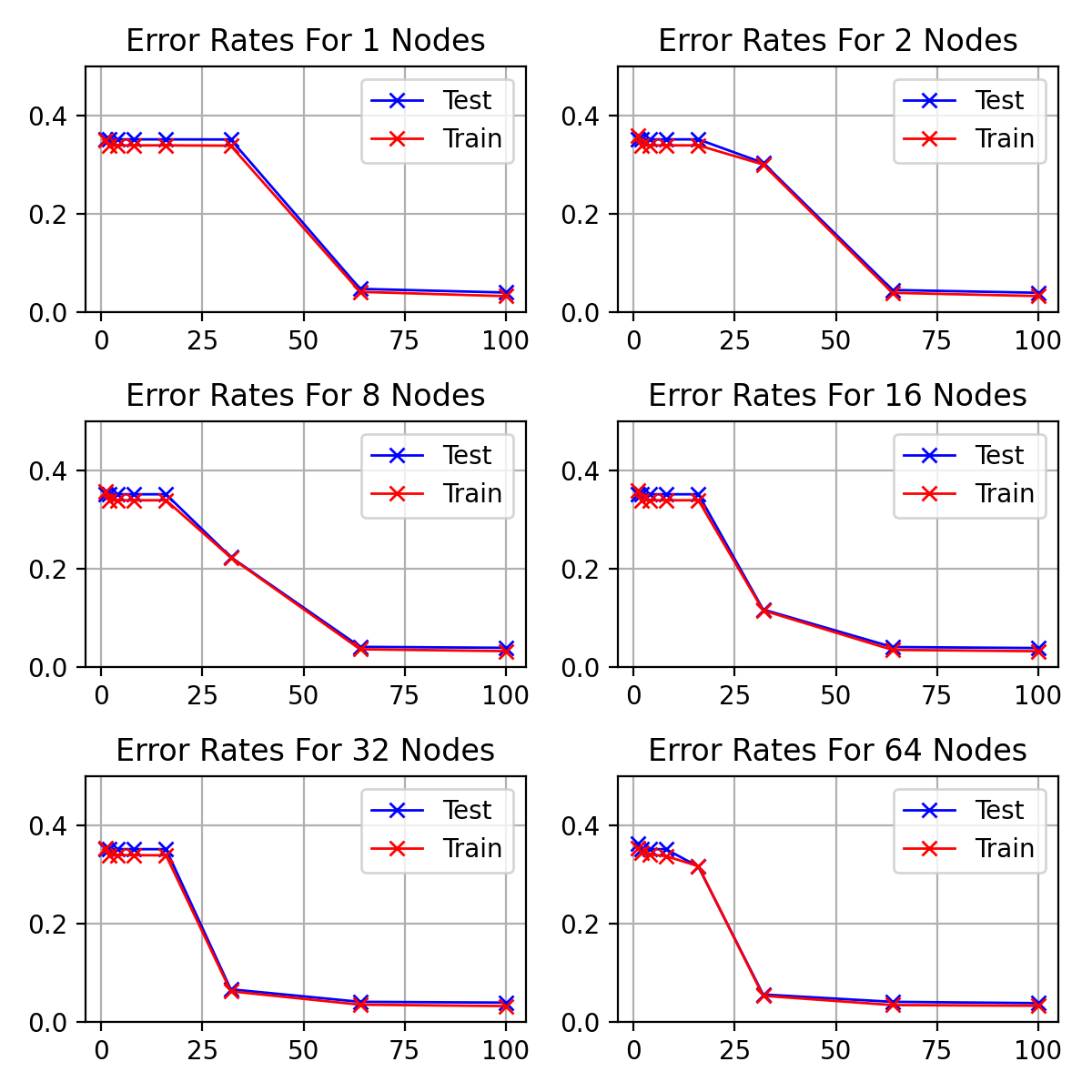

# In[314]:

|

||||

|

||||

|

||||

fig, axes = plt.subplots(math.ceil(len(multi_param_nodes) / 2), 2, figsize=(8, 8*math.ceil(len(multi_param_nodes) / 2)/3))

|

||||

fig, axes = plt.subplots(math.ceil(len(multi_param_nodes) / 2), 2, figsize=(6, 6*math.ceil(len(multi_param_nodes) / 2)/3))

|

||||

fig.set_dpi(fig_dpi)

|

||||

|

||||

for idx, (nodes, ax) in enumerate(zip(multi_param_nodes, axes.flatten())):

|

||||

@ -499,13 +526,13 @@ for idx, (nodes, ax) in enumerate(zip(multi_param_nodes, axes.flatten())):

|

||||

ax.grid()

|

||||

|

||||

fig.tight_layout()

|

||||

# fig.savefig(f'graphs/{exp1_testname}-test-train-error-rate.png')

|

||||

fig.savefig(f'graphs/{exp1_testname}-test-train-error-rate.png')

|

||||

|

||||

|

||||

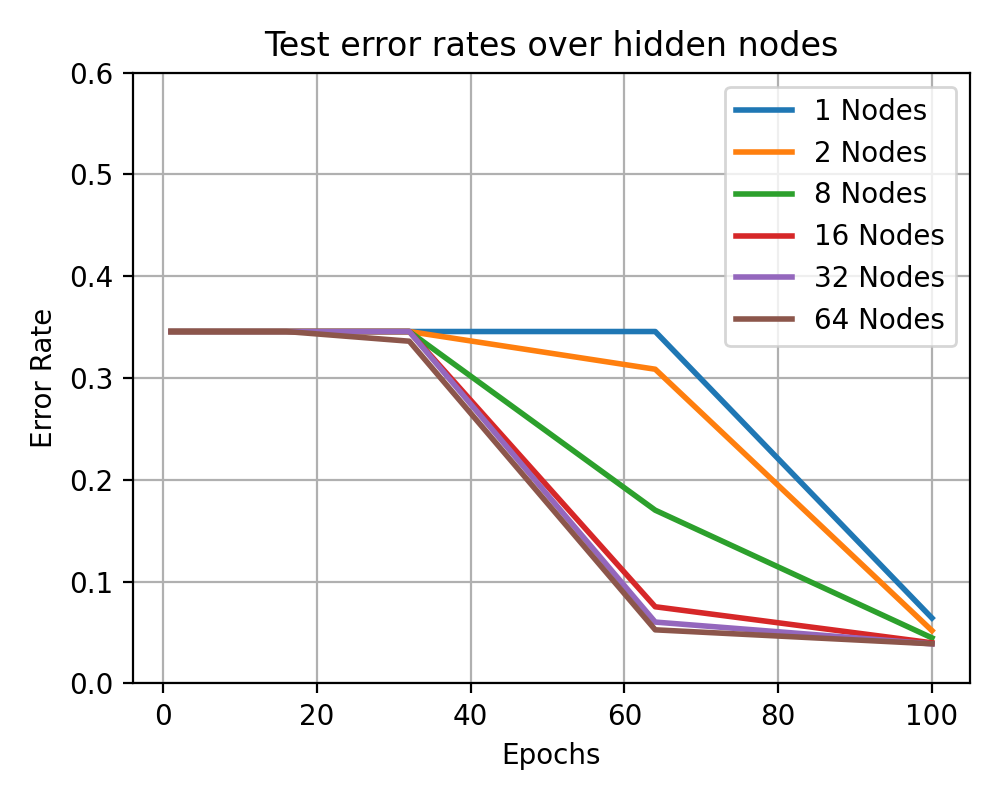

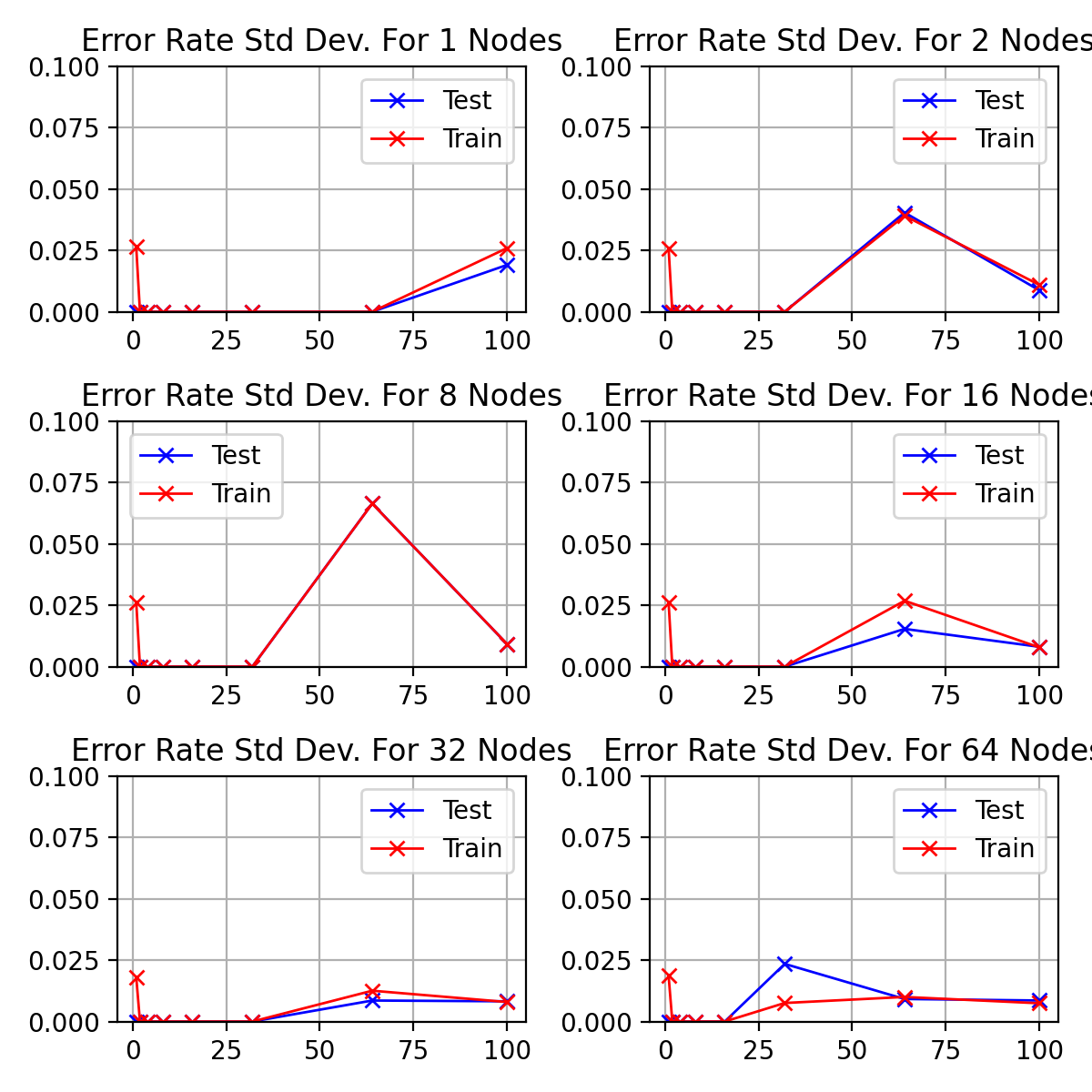

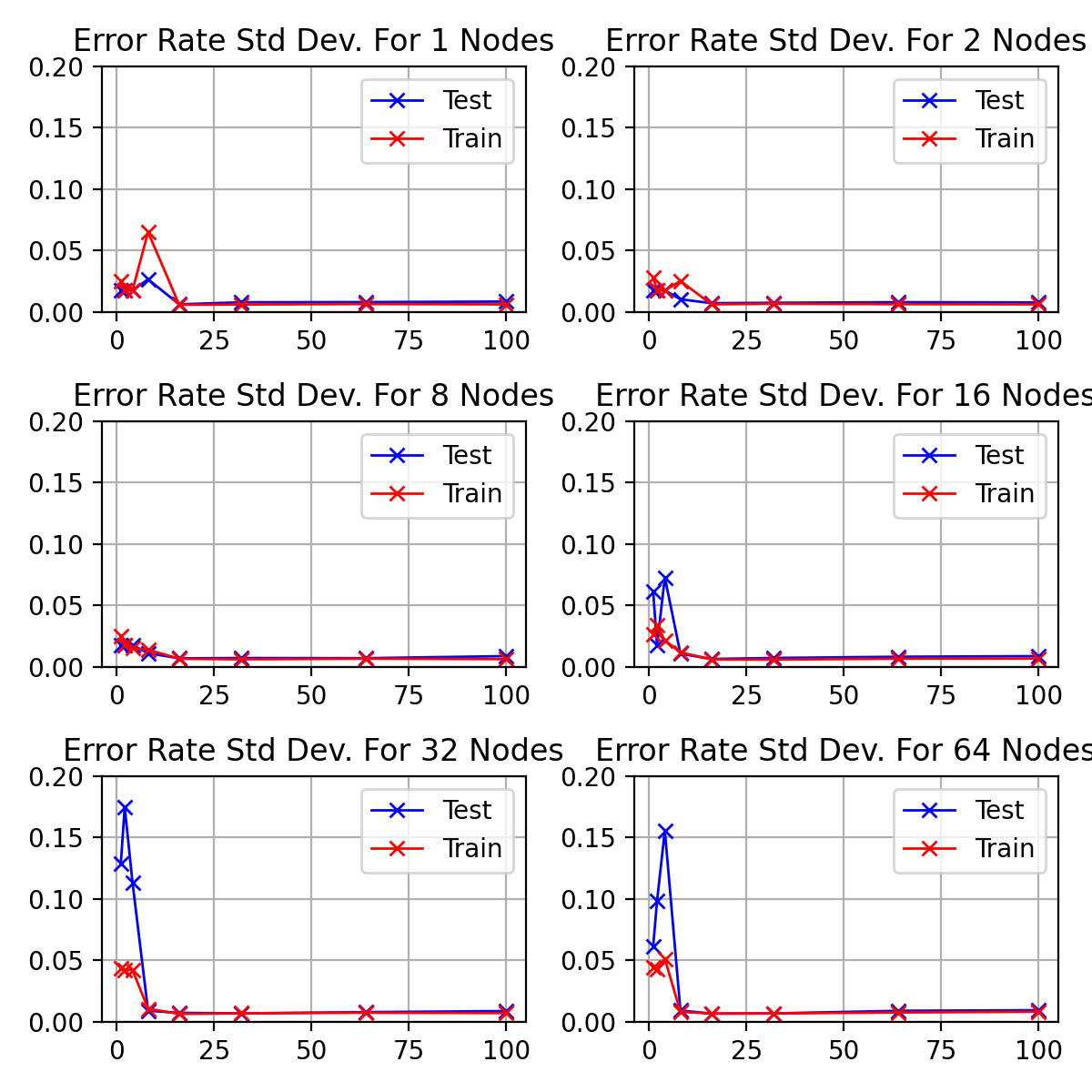

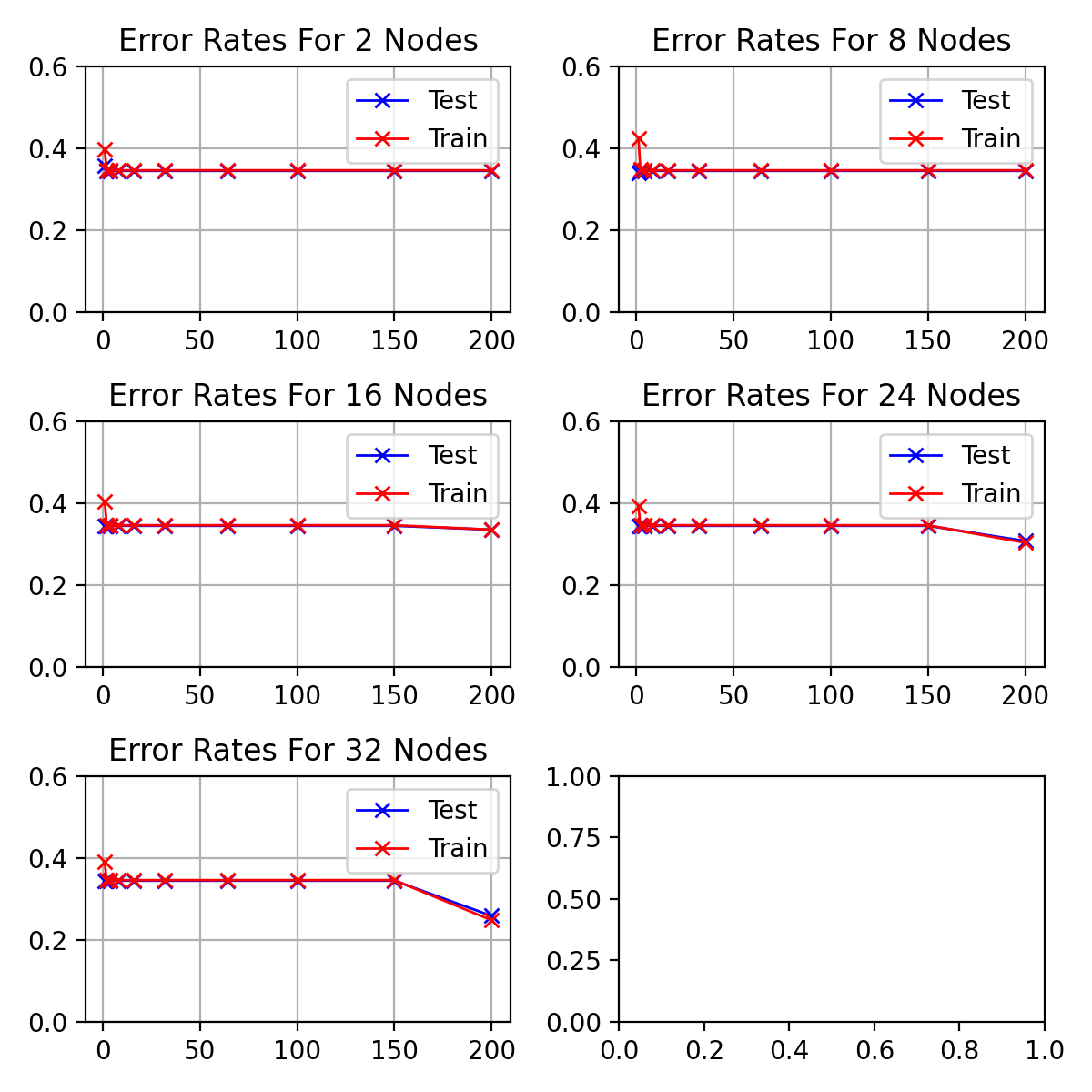

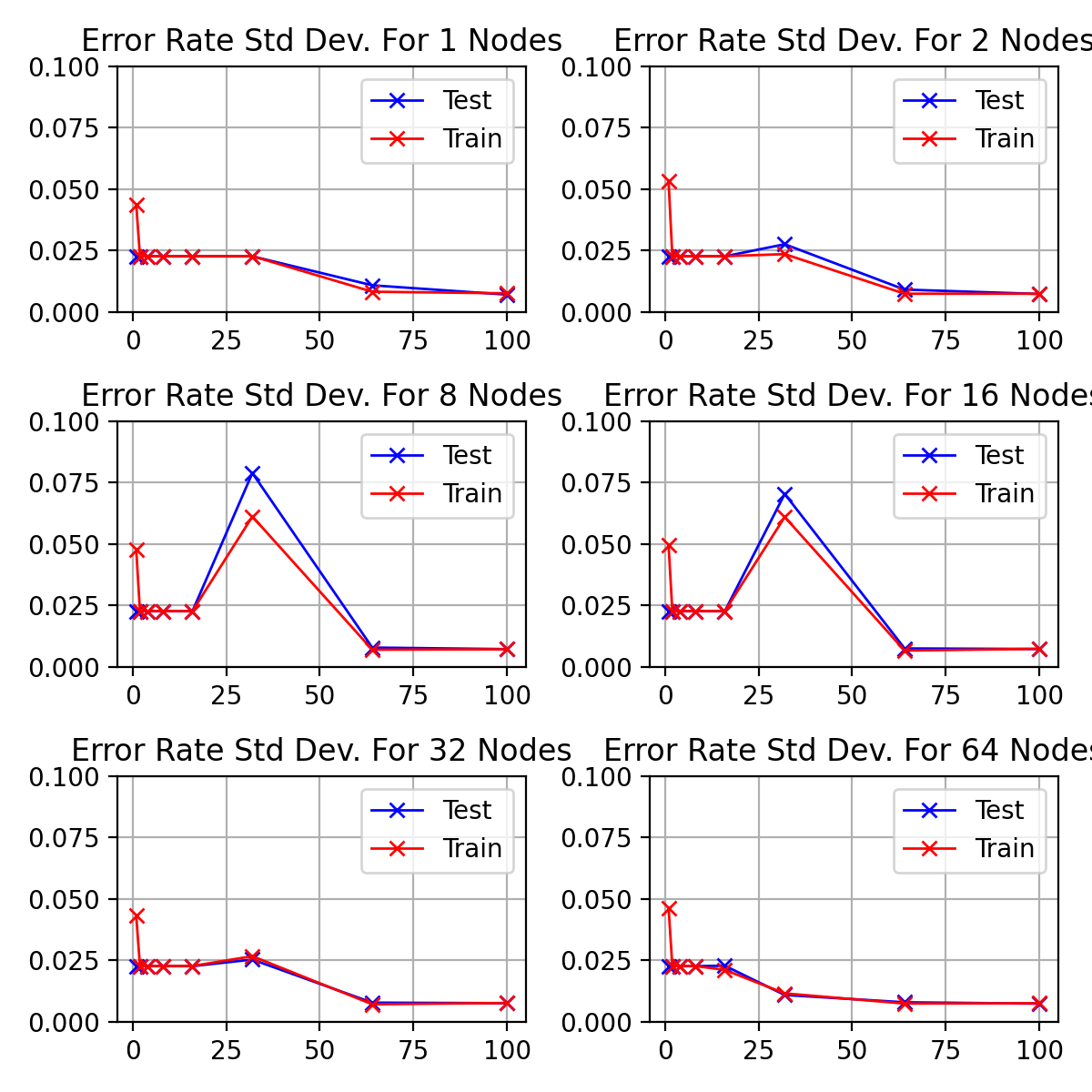

# In[170]:

|

||||

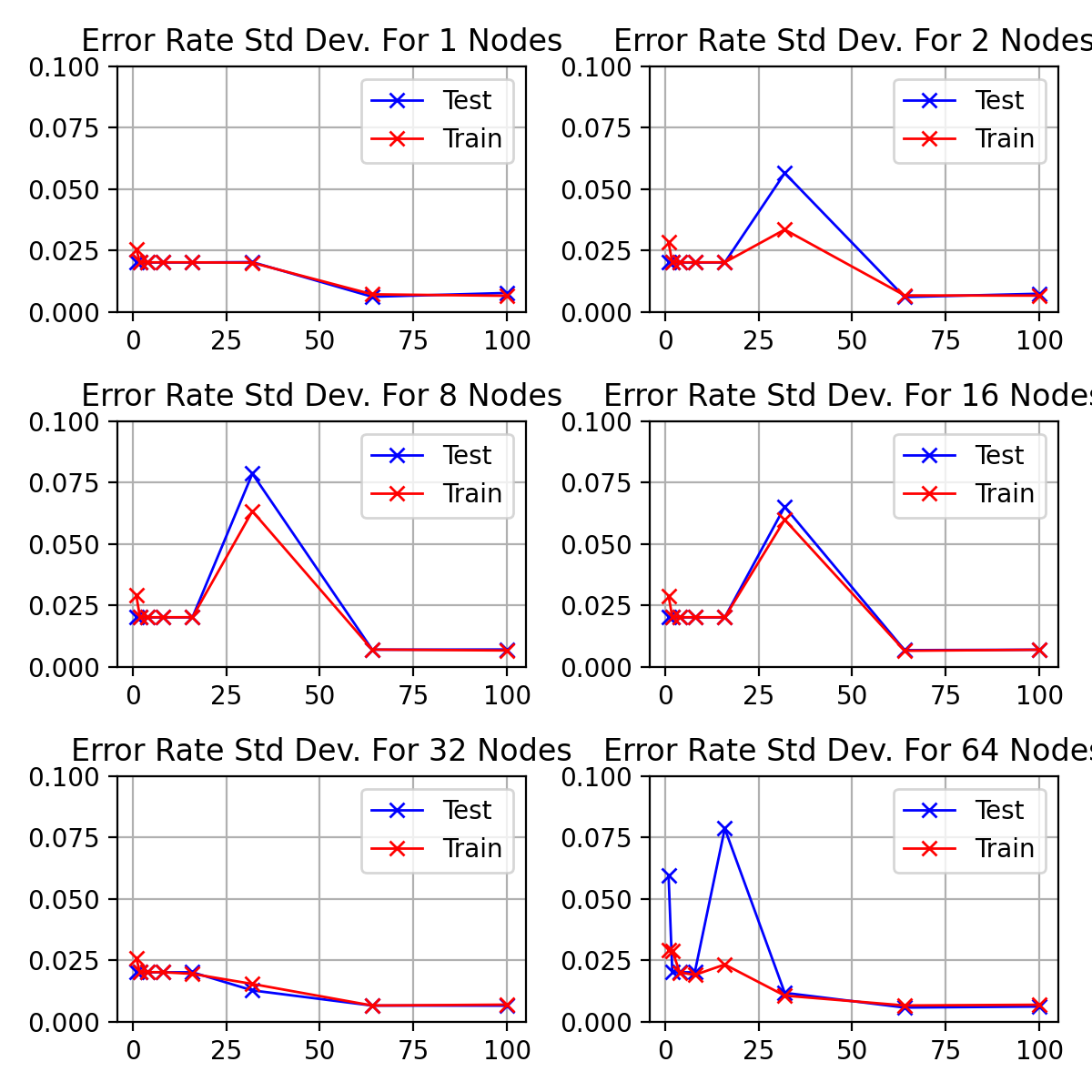

# In[315]:

|

||||

|

||||

|

||||

fig, axes = plt.subplots(math.ceil(len(multi_param_nodes) / 2), 2, figsize=(8, 8*math.ceil(len(multi_param_nodes) / 2)/3))

|

||||

fig, axes = plt.subplots(math.ceil(len(multi_param_nodes) / 2), 2, figsize=(6, 6*math.ceil(len(multi_param_nodes) / 2)/3))

|

||||

fig.set_dpi(fig_dpi)

|

||||

|

||||

for idx, (nodes, ax) in enumerate(zip(multi_param_nodes, axes.flatten())):

|

||||

@ -517,7 +544,7 @@ for idx, (nodes, ax) in enumerate(zip(multi_param_nodes, axes.flatten())):

|

||||

ax.grid()

|

||||

|

||||

fig.tight_layout()

|

||||

# fig.savefig(f'graphs/{exp1_testname}-test-train-error-rate-std.png')

|

||||

fig.savefig(f'graphs/{exp1_testname}-test-train-error-rate-std.png')

|

||||

|

||||

|

||||

# # Experiment 2

|

||||

@ -527,7 +554,7 @@ fig.tight_layout()

|

||||

# (Hint4: to implement majority vote you need to determine the predicted class labels -probably easier to implement yourself rather than use the ensemble matlab functions)

|

||||

#

|

||||

|

||||

# In[6]:

|

||||

# In[113]:

|

||||

|

||||

|

||||

num_models=[1, 3, 9, 15, 25]

|

||||

@ -558,20 +585,36 @@ def evaluate_ensemble_vote(hidden_nodes=16,

|

||||

for m in nmodels:

|

||||

if print_params:

|

||||

print(f"Models: {m}")

|

||||

|

||||

response = {"epochs": list(),

|

||||

"num_models": m}

|

||||

|

||||

###################

|

||||

## GET MODELS

|

||||

###################

|

||||

if isinstance(hidden_nodes, tuple): # for range of hidden nodes, calculate value per model

|

||||

if m == 1:

|

||||

models = [get_model(int(np.mean(hidden_nodes)), weight_init=weight_init)]

|

||||

response["nodes"] = [int(np.mean(hidden_nodes))]

|

||||

|

||||

else:

|

||||

models = [get_model(int(i), weight_init=weight_init)

|

||||

for i in np.linspace(*hidden_nodes, num=m)]

|

||||

response["nodes"] = [int(i) for i in np.linspace(*hidden_nodes, num=m)]

|

||||

|

||||

elif hidden_nodes == 'm':

|

||||

models = [get_model(i+1, weight_init=weight_init) for i in range(m)]

|

||||

response["nodes"] = [i+1 for i in range(m)]

|

||||

else: # not a range of epochs, just set to given value

|

||||

models = [get_model(hidden_nodes, weight_init=weight_init) for _ in range(m)]

|

||||

response["nodes"] = hidden_nodes

|

||||

|

||||

models = [get_model(hidden_nodes, weight_init=weight_init) for _ in range(m)]

|

||||

for model in models:

|

||||

model.compile(

|

||||

optimizer=optimizer(),

|

||||

loss=loss(),

|

||||

metrics=metrics

|

||||

)

|

||||

|

||||

|

||||

|

||||

response = {"nodes": hidden_nodes,

|

||||

"epochs": list(),

|

||||

"num_models": m}

|

||||

)

|

||||

|

||||

if tboard:

|

||||

if callbacks is not None:

|

||||

@ -584,13 +627,13 @@ def evaluate_ensemble_vote(hidden_nodes=16,

|

||||

###################

|

||||

histories = list()

|

||||

for idx, model in enumerate(models):

|

||||

if isinstance(epochs, tuple):

|

||||

if isinstance(epochs, tuple): # for range of epochs, calculate value per model

|

||||

if m == 1:

|

||||

e = (epochs[0] + epochs[1]) / 2 # average, not lower bound if single model

|

||||

e = np.mean(epochs) # average, not lower bound if single model

|

||||

else:

|

||||

e = np.linspace(epochs[0], epochs[1], num=m)[idx]

|

||||

e = np.linspace(*epochs, num=m)[idx]

|

||||

e = int(e)

|

||||

else:

|

||||

else: # not a range of epochs, just set to given value

|

||||

e = epochs

|

||||

|

||||

# print(m, e) # debug

|

||||

@ -669,15 +712,19 @@ def evaluate_ensemble_vote(hidden_nodes=16,

|

||||

# ## Single Iteration

|

||||

# Run a single iteration of ensemble model investigations

|

||||

|

||||

# In[11]:

|

||||

# In[224]:

|

||||

|

||||

|

||||

single_ensem_results = list()

|

||||

for test in evaluate_ensemble_vote(epochs=(5, 300), optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=0.02)):

|

||||

# for test in evaluate_ensemble_vote(epochs=(5, 300), optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=0.02)):

|

||||

for test in evaluate_ensemble_vote(hidden_nodes=(1, 400),

|

||||

epochs=20,

|

||||

optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=0.02)):

|

||||

single_ensem_results.append(test)

|

||||

print(test["nodes"], test["epochs"])

|

||||

|

||||

|

||||

# In[16]:

|

||||

# In[225]:

|

||||

|

||||

|

||||

fig = plt.figure(figsize=(8, 5))

|

||||

@ -685,15 +732,15 @@ fig.set_dpi(fig_dpi)

|

||||

|

||||

ensem_x = [i["num_models"] for i in single_ensem_results]

|

||||

|

||||

plt.plot(ensem_x, [i["accuracy"] for i in single_ensem_results], 'x-', label='Ensemble Accuracy')

|

||||

plt.plot(ensem_x, [i["individual_accuracy"] for i in single_ensem_results], 'x-', label='Individual Accuracy')

|

||||

plt.plot(ensem_x, [i["agreement"] for i in single_ensem_results], 'x-', label='Agreement')

|

||||

plt.plot(ensem_x, 1 - np.array([i["accuracy"] for i in single_ensem_results]), 'x-', label='Ensemble Test')

|

||||

plt.plot(ensem_x, 1 - np.array([i["individual_accuracy"] for i in single_ensem_results]), 'x-', label='Individual Test')

|

||||

plt.plot(ensem_x, 1 - np.array([i["agreement"] for i in single_ensem_results]), 'x-', label='Disagreement')

|

||||

|

||||

plt.title("Test Accuracy for Horizontal Model Ensembles")

|

||||

plt.ylim(0, 1)

|

||||

plt.title("Test Error Rates for Horizontal Model Ensembles")

|

||||

plt.ylim(0)

|

||||

plt.grid()

|

||||

plt.legend()

|

||||

plt.ylabel("Accuracy")

|

||||

plt.ylabel("Error Rate")

|

||||

plt.xlabel("Number of Models")

|

||||

plt.show()

|

||||

|

||||

@ -710,24 +757,58 @@ plt.show()

|

||||

#

|

||||

# ### Pickle Results

|

||||

#

|

||||

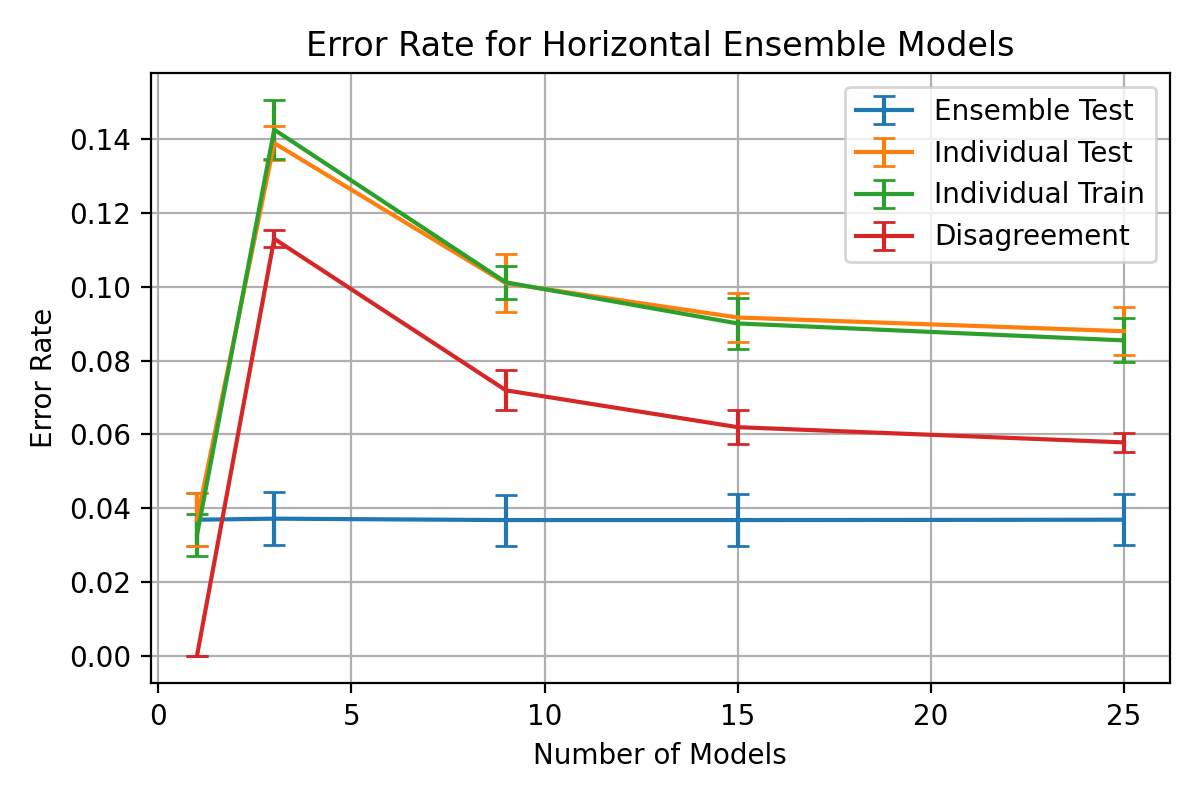

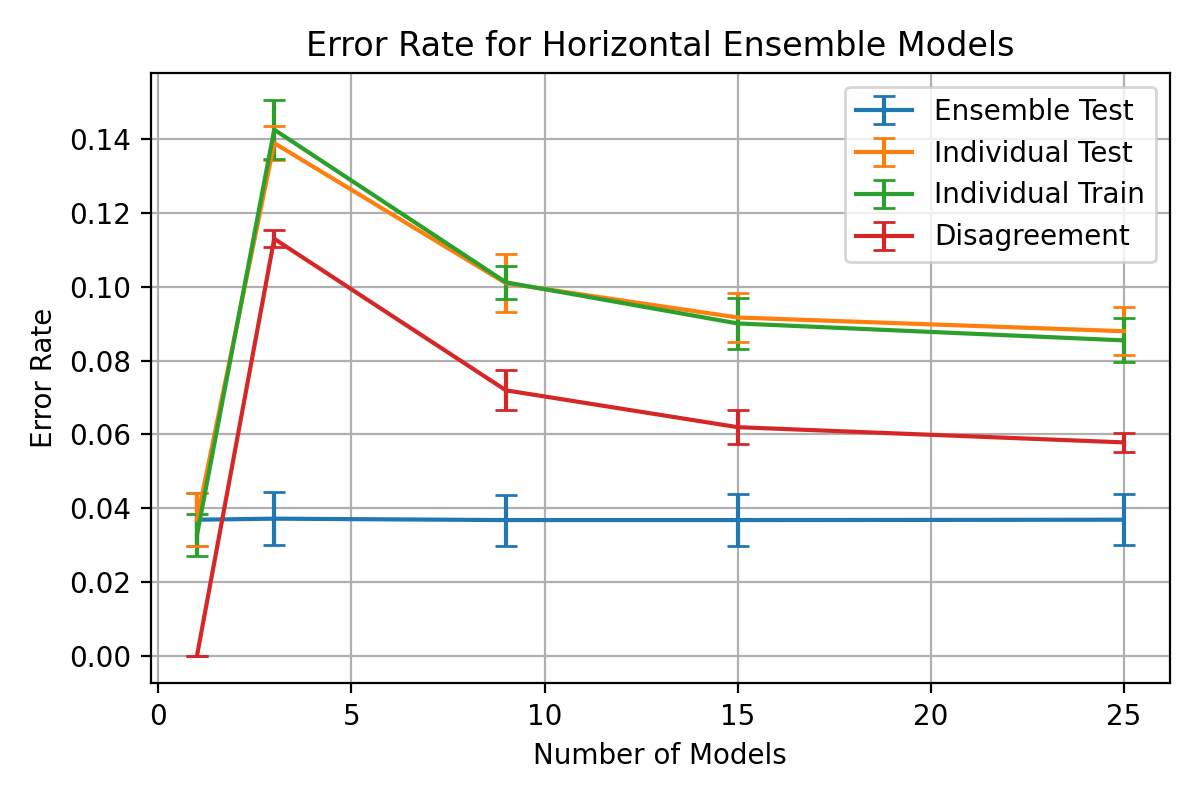

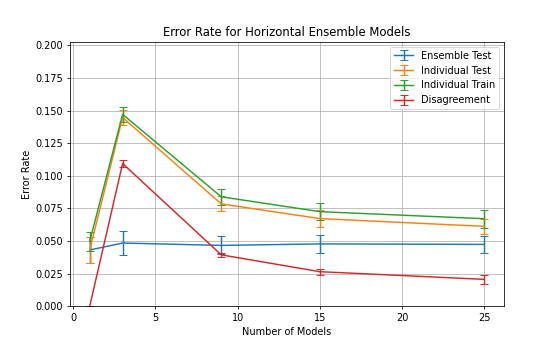

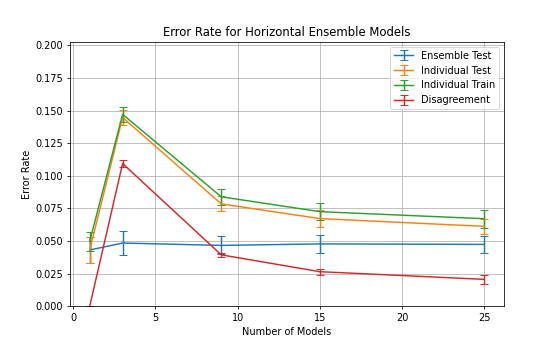

# | test | learning rate | momentum | batch size | hidden nodes | epochs | models |

|

||||

# | --- | --- | --- | --- | --- | --- | --- |

|

||||

# |3|0.06|0.05|35|16|1 - 300|1, 3, 9, 15, 25|

|

||||

# | test | learning rate | momentum | batch size | hidden nodes | epochs | models | stratify |

|

||||

# | --- | --- | --- | --- | --- | --- | --- | --- |

|

||||

# |3|0.06|0.05|35|16|1 - 300|1, 3, 9, 15, 25| |

|

||||

# |4|0.06|0.05|35|1 - 50|50|1, 3, 9, 15, 25| |

|

||||

# |5|0.06|0.05|35|1 - 300|50|1, 3, 9, 15, 25| |

|

||||

# |6|0.001|0.01|35|1 - 400|50|1, 3, 9, 15, 25| |

|

||||

# |7|0.01|0.01|35|1 - 400|30 - 150|1, 3, 9, 15, 25| |

|

||||

# |8|0.03|0.01|35|1 - 400|5 - 100|1, 3, 9, 15, 25| |

|

||||

# |9|0.1|0.01|35|1 - 400|20|1, 3, 9, 15, 25| |

|

||||

# |10|0.15|0.01|35|1 - 400|20|1, 3, 9, 15, 25, 35, 45| |

|

||||

# |11|0.15|0.01|35|1 - 400|10|1, 3, 9, 15, 25, 35, 45| |

|

||||

# |12|0.02|0.01|35|m|50|1, 3, 9, 15, 25, 35, 45| |

|

||||

# |13|0.01 exp 0.98, 1|0.01|35|1 - 200|50|1, 3, 9, 15, 25, 35, 45| n |

|

||||

# |14|0.01|0.01|35|1 - 200|50|1, 3, 9, 15, 25, 35, 45| n |

|

||||

# |15|0.01|0.9|35|50 - 100|50|1, 3, 5, 7, 9, 15, 25, 35, 45| n |

|

||||

# |16|0.01|0.1|35|50 - 100|50|1, 3, 5, 7, 9, 15, 25, 35, 45| n |

|

||||

# |17|0.1|0.1|35|50 - 100|50 - 100|1, 3, 5, 7, 9, 15, 25, 35, 45| n |

|

||||

|

||||

# In[24]:

|

||||

# In[335]:

|

||||

|

||||

|

||||

batch_size=35

|

||||

test_size=0.5

|

||||

epochs=50

|

||||

lr_schedule = tf.keras.optimizers.schedules.ExponentialDecay(0.01,

|

||||

decay_steps=1,

|

||||

decay_rate=0.98)

|

||||

|

||||

plt.plot(range(epochs+1), [lr_schedule(i) for i in range(epochs+1)])

|

||||

plt.grid()

|

||||

plt.ylim(0)

|

||||

plt.xlabel('Epochs')

|

||||

plt.ylabel('Learning Rate')

|

||||

plt.show()

|

||||

|

||||

|

||||

# In[357]:

|

||||

|

||||

|

||||

multi_ensem_results = list()

|

||||

multi_ensem_iterations = 2

|

||||

multi_ensem_iterations = 30

|

||||

for i in range(multi_ensem_iterations):

|

||||

print(f"Iteration {i+1}/{multi_ensem_iterations}")

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5, stratify=labels)

|

||||

multi_ensem_results.append(list(evaluate_ensemble_vote(epochs=(1, 100),

|

||||

hidden_nodes=16,

|

||||

nmodels=[1, 3, 7, 11, 15],

|

||||

optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=0.05, momentum=0.01),

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=test_size,

|

||||

# stratify=labels

|

||||

)

|

||||

multi_ensem_results.append(list(evaluate_ensemble_vote(epochs=(50, 100),

|

||||

hidden_nodes=(50, 100),

|

||||

nmodels=[1, 3, 5, 7, 9, 15, 25, 35, 45],

|

||||

optimizer=lambda: tf.keras.optimizers.SGD(learning_rate=0.1, momentum=0.1),

|

||||

weight_init=lambda: 'random_uniform',

|

||||

batch_size=35,

|

||||

batch_size=batch_size,

|

||||

dtrain=data_train,

|

||||

dtest=data_test,

|

||||

ltrain=labels_train,

|

||||

@ -748,7 +829,17 @@ for i in range(multi_ensem_iterations):

|

||||

# 2. Individual Accuracy

|

||||

# 3. Agreement

|

||||

|

||||

# In[25]:

|

||||

# In[322]:

|

||||

|

||||

|

||||

def test_tensor_data(test):

|

||||

return [test["accuracy"],

|

||||

np.mean([i["accuracy"][-1] for i in test["history"]]), # avg train acc

|

||||

test["individual_accuracy"],

|

||||

test["agreement"]]

|

||||

|

||||

|

||||

# In[362]:

|

||||

|

||||

|

||||

multi_ensem_models = sorted(list({i["num_models"] for i in multi_ensem_results[0]}))

|

||||

@ -759,10 +850,7 @@ for iter_idx, iteration in enumerate(multi_ensem_results):

|

||||

for single_test in iteration:

|

||||

|

||||

ensem_models_idx = multi_ensem_models.index(single_test['num_models'])

|

||||

accuracy_ensem_tensor[iter_idx, :, ensem_models_idx] = [single_test["accuracy"],

|

||||

np.mean([i["accuracy"][-1] for i in single_test["history"]]),

|

||||

single_test["individual_accuracy"],

|

||||

single_test["agreement"]]

|

||||

accuracy_ensem_tensor[iter_idx, :, ensem_models_idx] = test_tensor_data(single_test)

|

||||

|

||||

mean_ensem_accuracy = np.mean(accuracy_ensem_tensor, axis=0)

|

||||

std_ensem_accuracy = np.std(accuracy_ensem_tensor, axis=0)

|

||||

@ -778,18 +866,26 @@ print(f'Momentum: {multi_ensem_results[0][0]["optimizer"]["momentum"]:.3}')

|

||||

# #### Export/Import Test Sets

|

||||

#

|

||||

# Export mean and standard deviations for retrieval and visualisation

|

||||

pickle.dump(multi_ensem_results, open("result.p", "wb"))

|

||||

# In[22]:

|

||||

|

||||

# In[358]:

|

||||

|

||||

|

||||

multi_ensem_results = pickle.load(open("results/exp2-test3.p", "rb"))

|

||||

exp2_testname = 'exp2-test17'

|

||||

pickle.dump(multi_ensem_results, open(f"results/{exp2_testname}.p", "wb"))

|

||||

|

||||

|

||||

# In[349]:

|

||||

|

||||

|

||||

exp2_testname = 'exp2-test16'

|

||||

multi_ensem_results = pickle.load(open(f"results/{exp2_testname}.p", "rb"))

|

||||

|

||||

np.savetxt("exp2-mean.csv", mean_ensem_accuracy, delimiter=',')

|

||||

np.savetxt("exp2-std.csv", std_ensem_accuracy, delimiter=',')mean_ensem_accuracy = np.loadtxt("results/test1-exp2-mean.csv", delimiter=',')

|

||||

std_ensem_accuracy = np.loadtxt("results/test1-exp2-std.csv", delimiter=',')

|

||||

# ### Best Results

|

||||

|

||||

# In[26]:

|

||||

# In[363]:

|

||||

|

||||

|

||||

best_ensem_accuracy_idx = np.unravel_index(np.argmax(mean_ensem_accuracy[0, :]), mean_ensem_accuracy.shape)

|

||||

@ -801,10 +897,10 @@ print(f'Models: {best_ensem_accuracy_models}, {best_ensem_accuracy * 100:.3}% Ac

|

||||

|

||||

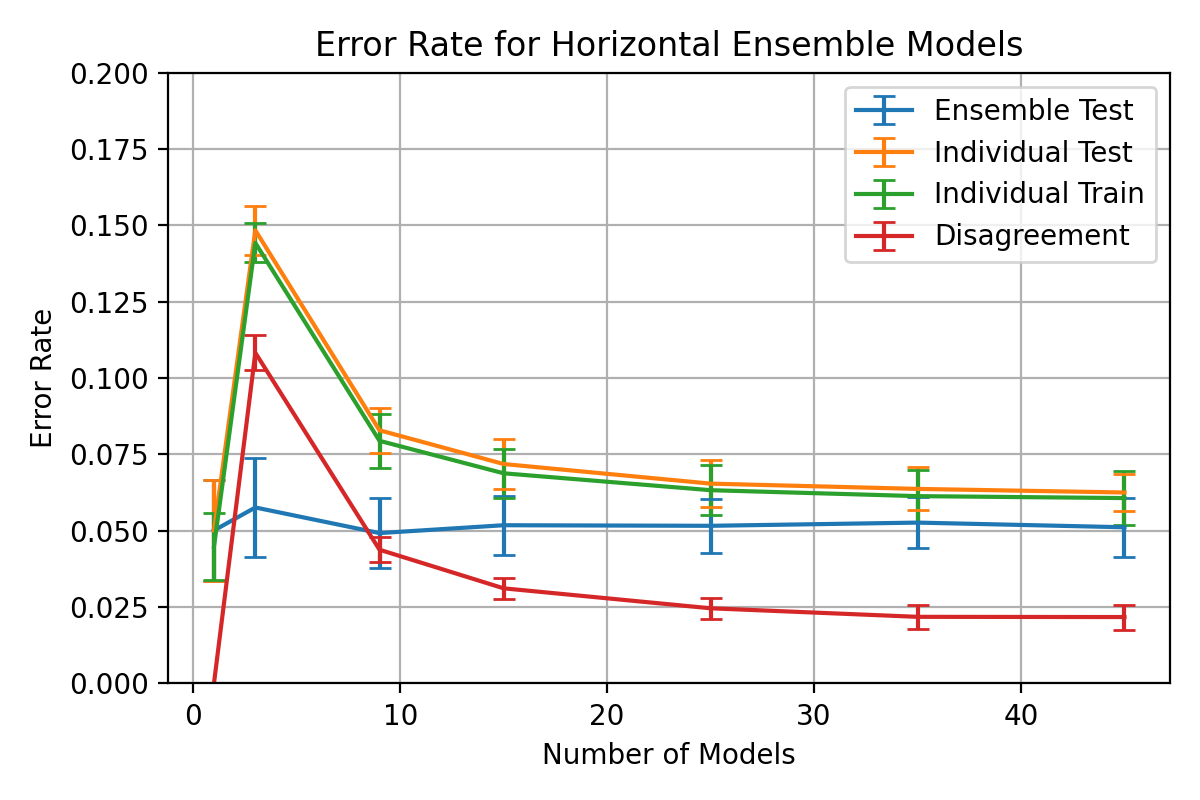

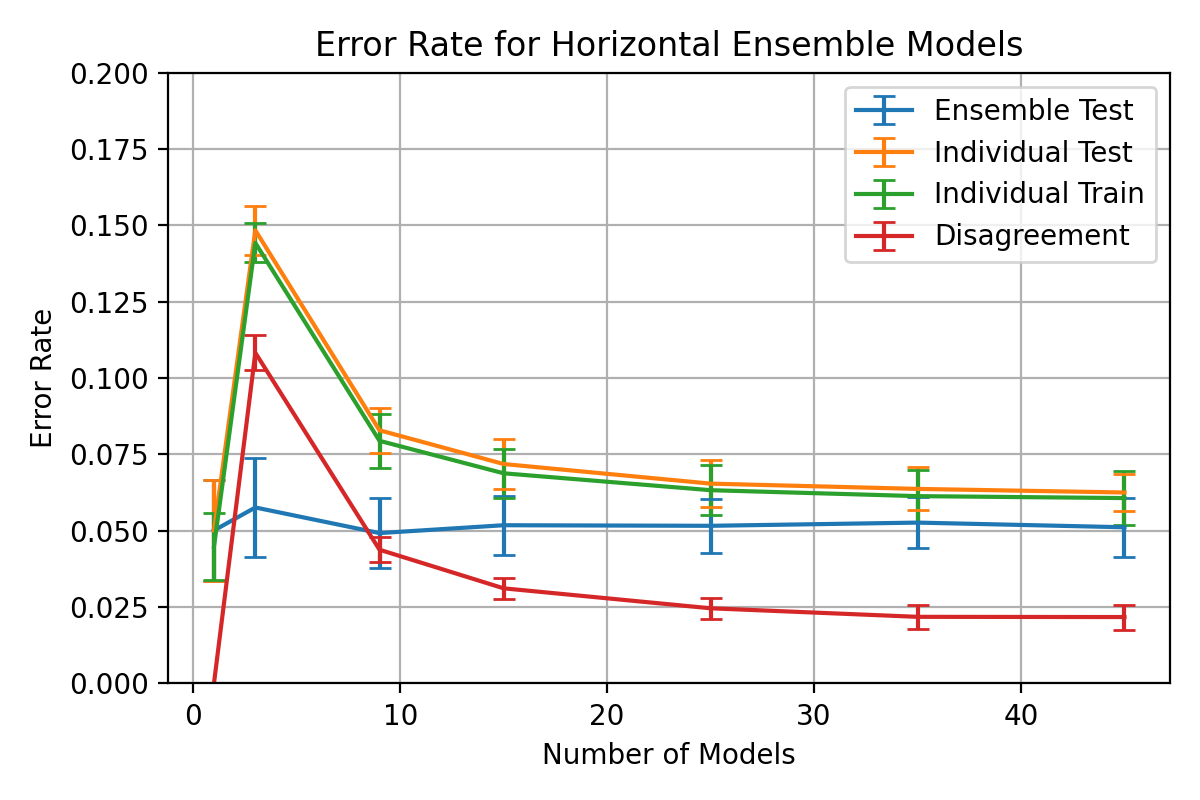

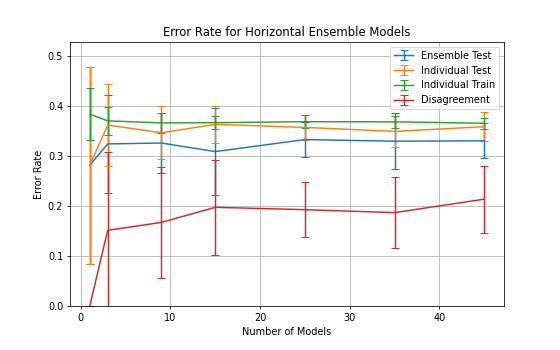

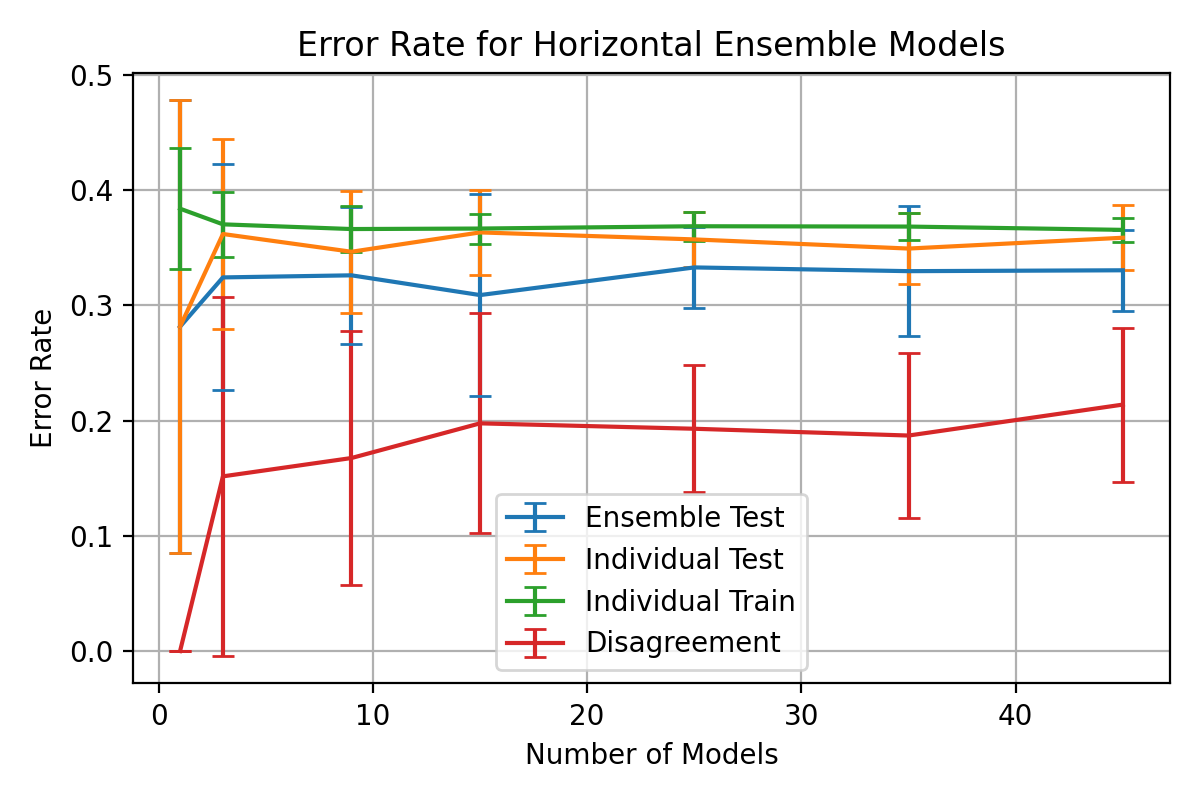

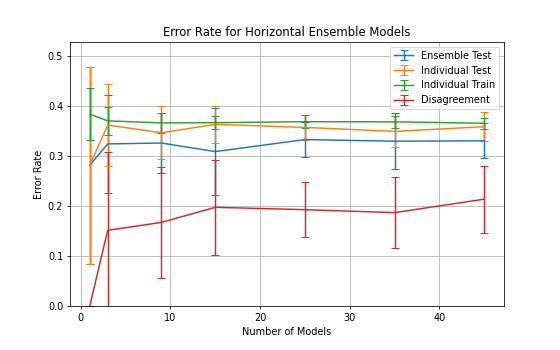

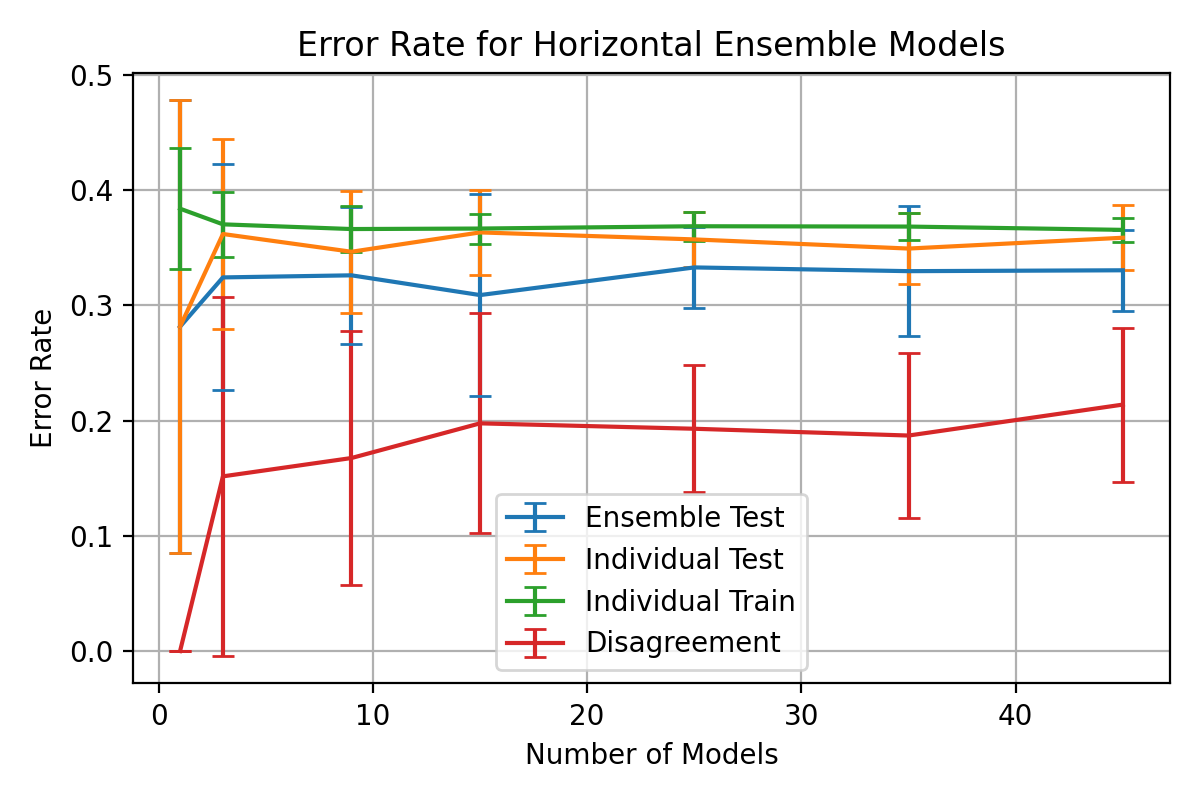

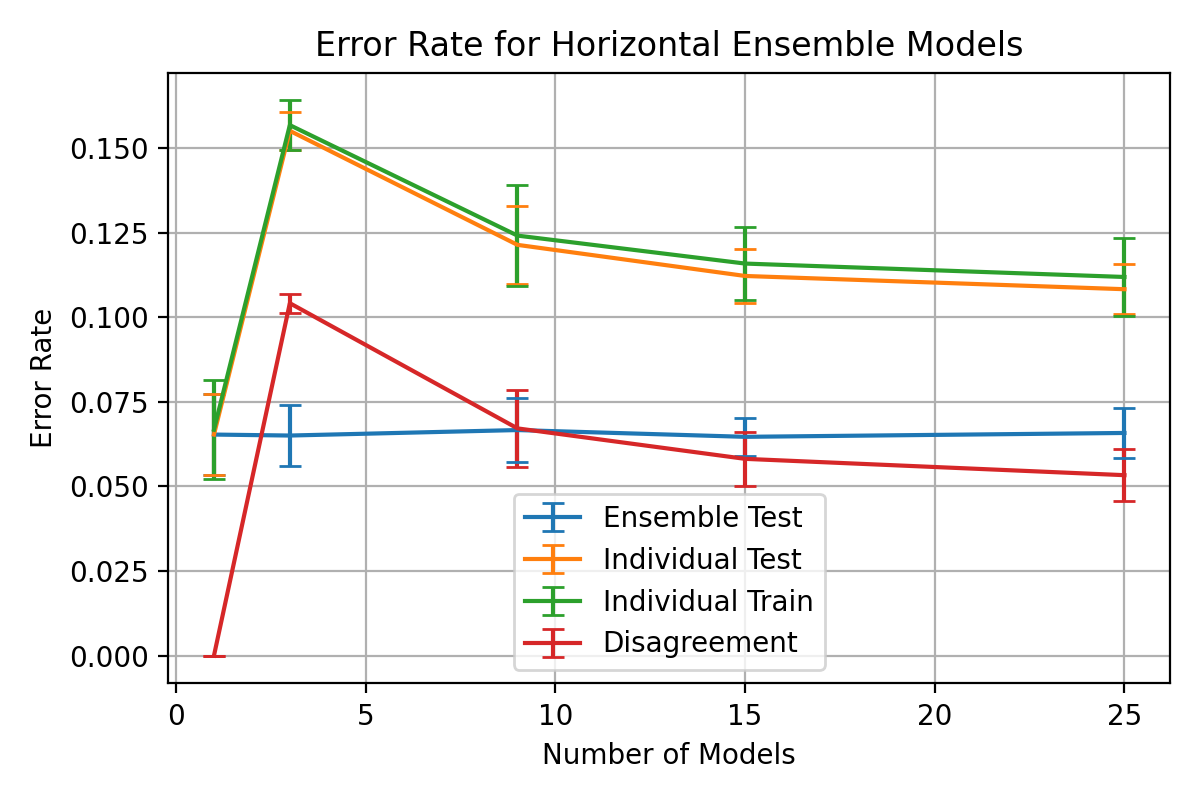

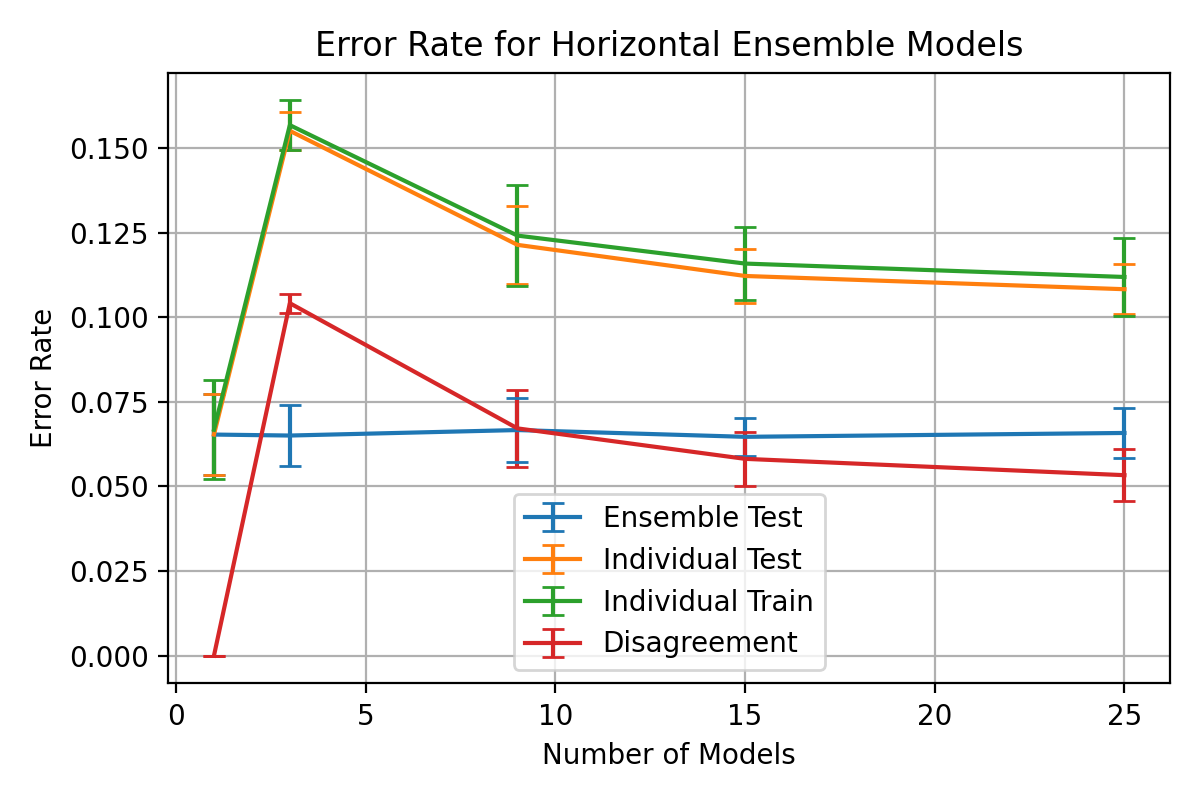

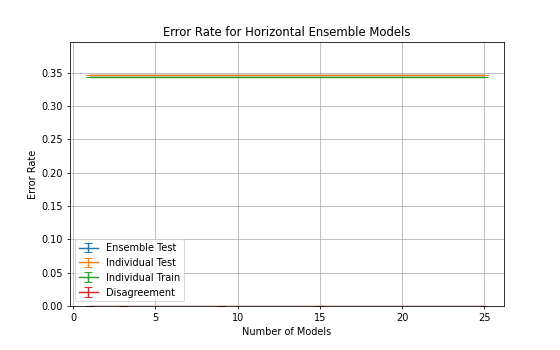

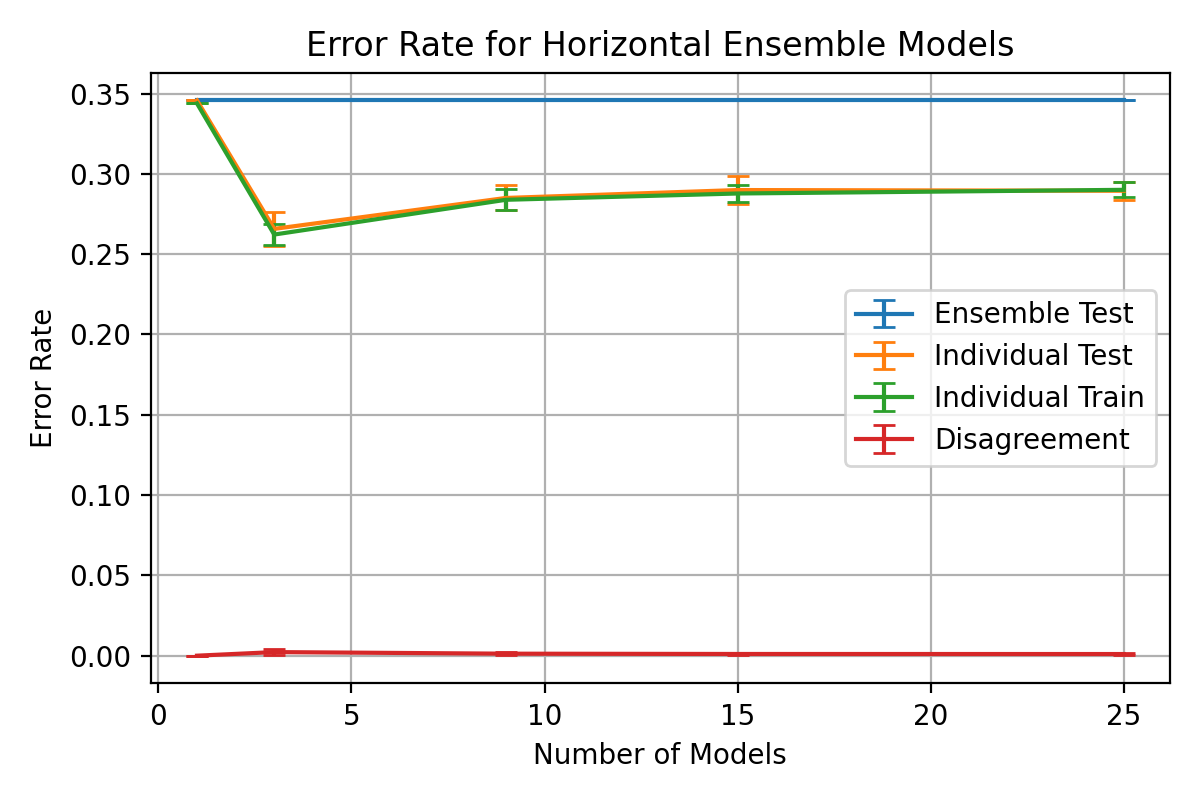

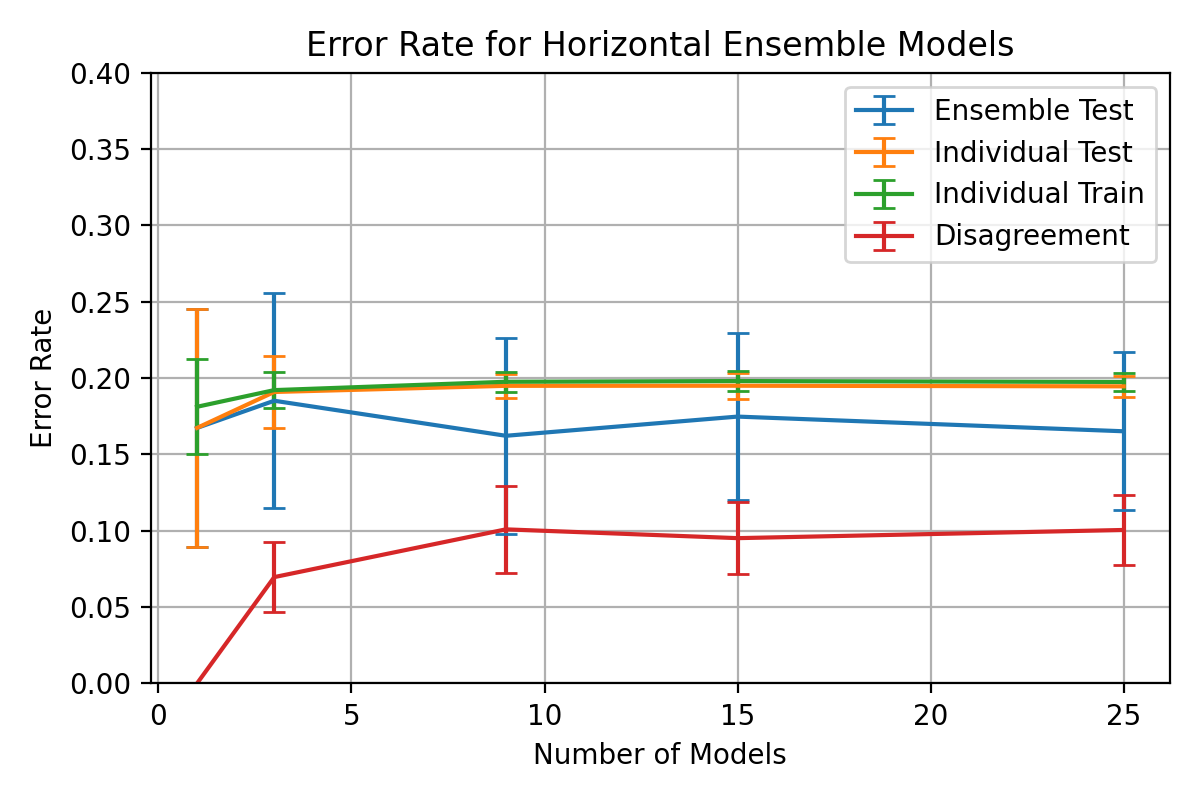

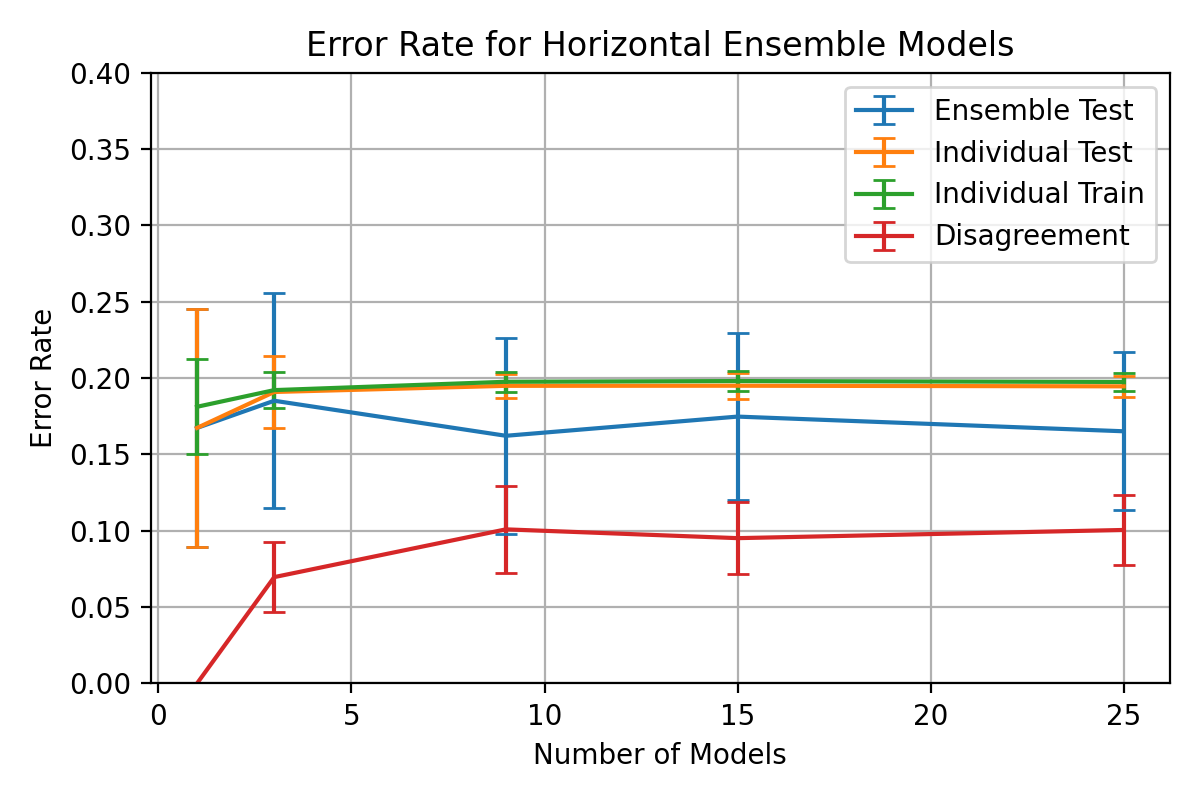

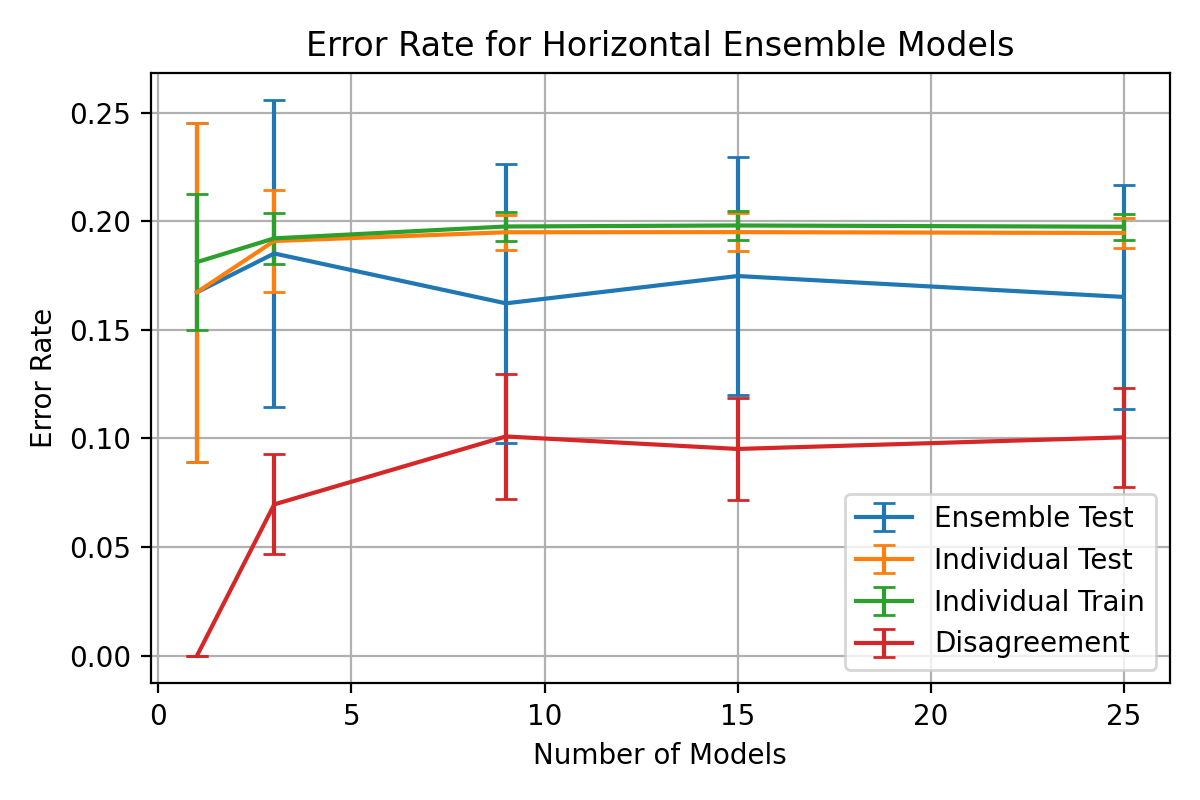

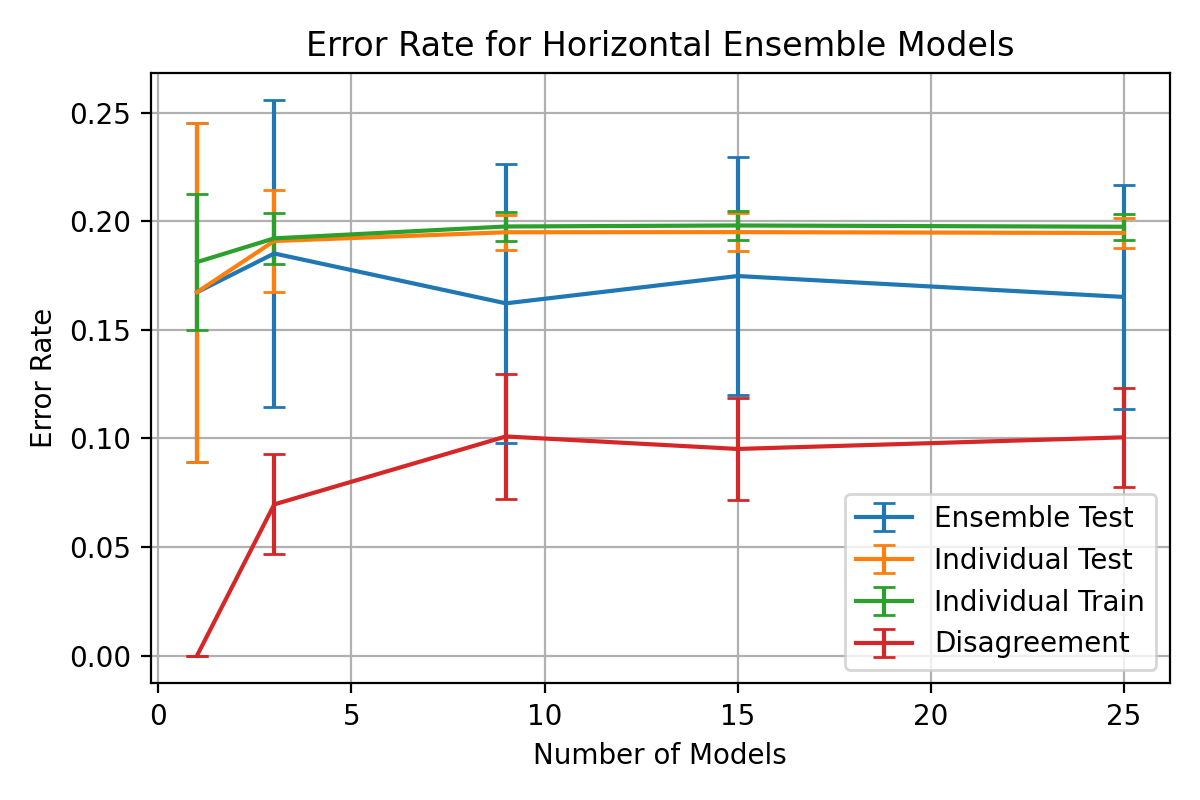

# ### Test/Train Error Over Model Numbers

|

||||

|

||||

# In[29]:

|

||||

# In[364]:

|

||||

|

||||

|

||||

fig = plt.figure(figsize=(8, 5))

|

||||

fig = plt.figure(figsize=(6, 4))

|

||||

fig.set_dpi(fig_dpi)

|

||||

|

||||

# plt.plot(multi_ensem_models, 1 - mean_ensem_accuracy[0, :], 'x-', label='Ensemble Test')

|

||||

@ -818,12 +914,16 @@ plt.errorbar(multi_ensem_models, 1 - mean_ensem_accuracy[1, :], yerr=std_ensem_a

|

||||

plt.errorbar(multi_ensem_models, 1 - mean_ensem_accuracy[3, :], yerr=std_ensem_accuracy[3, :], capsize=4, label='Disagreement')

|

||||

|

||||

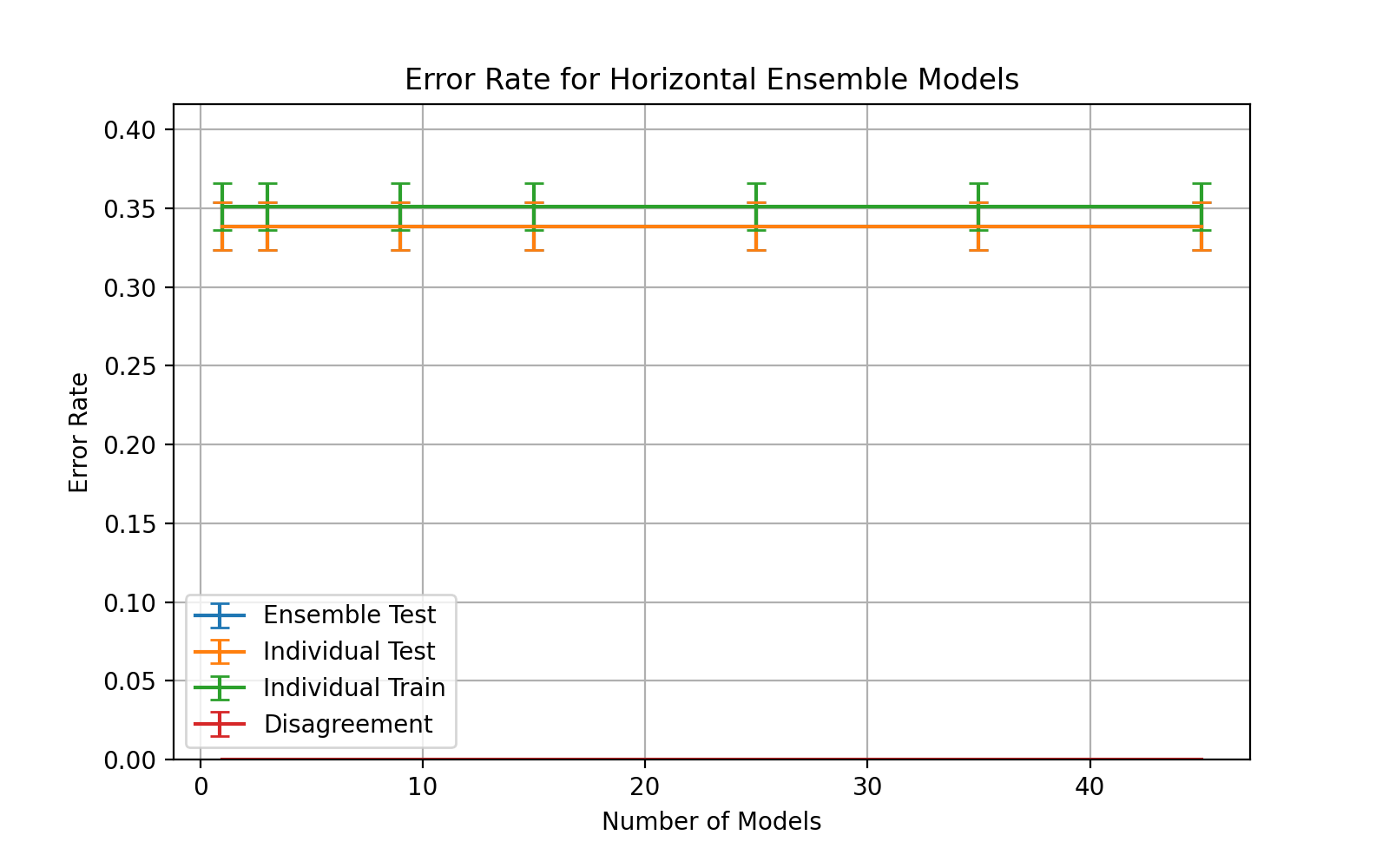

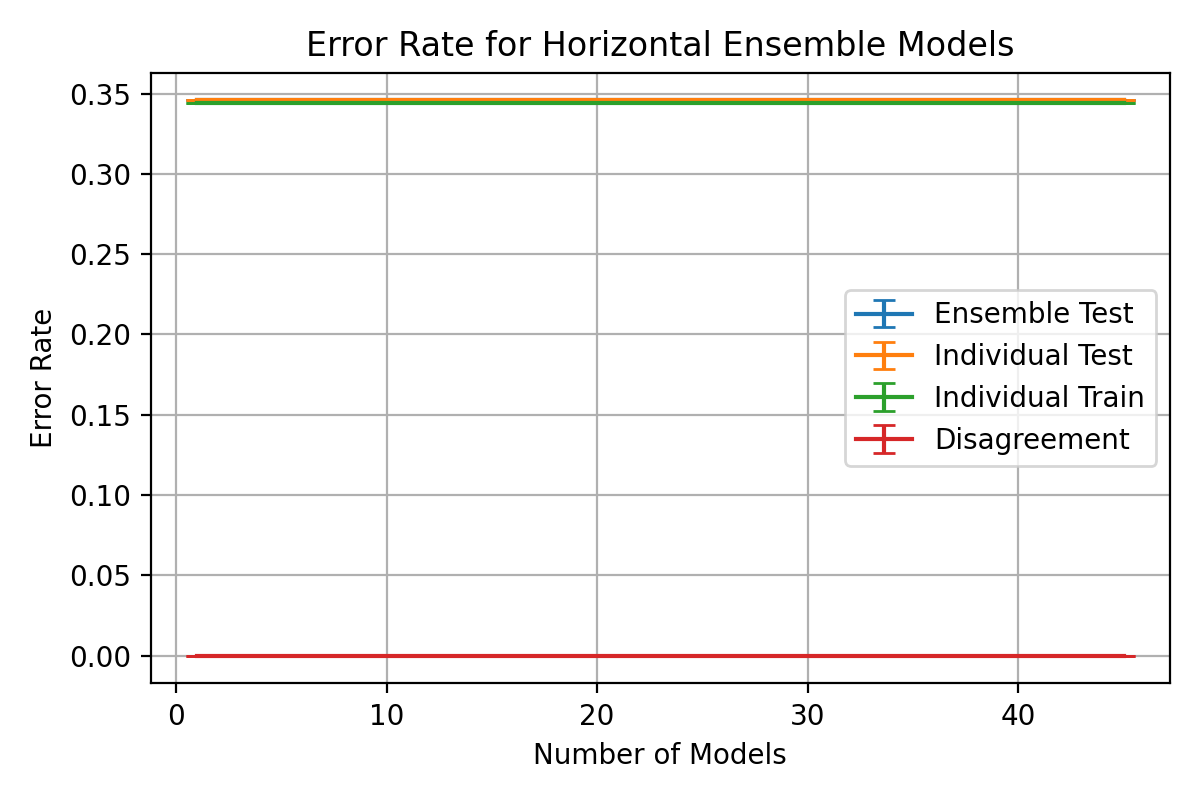

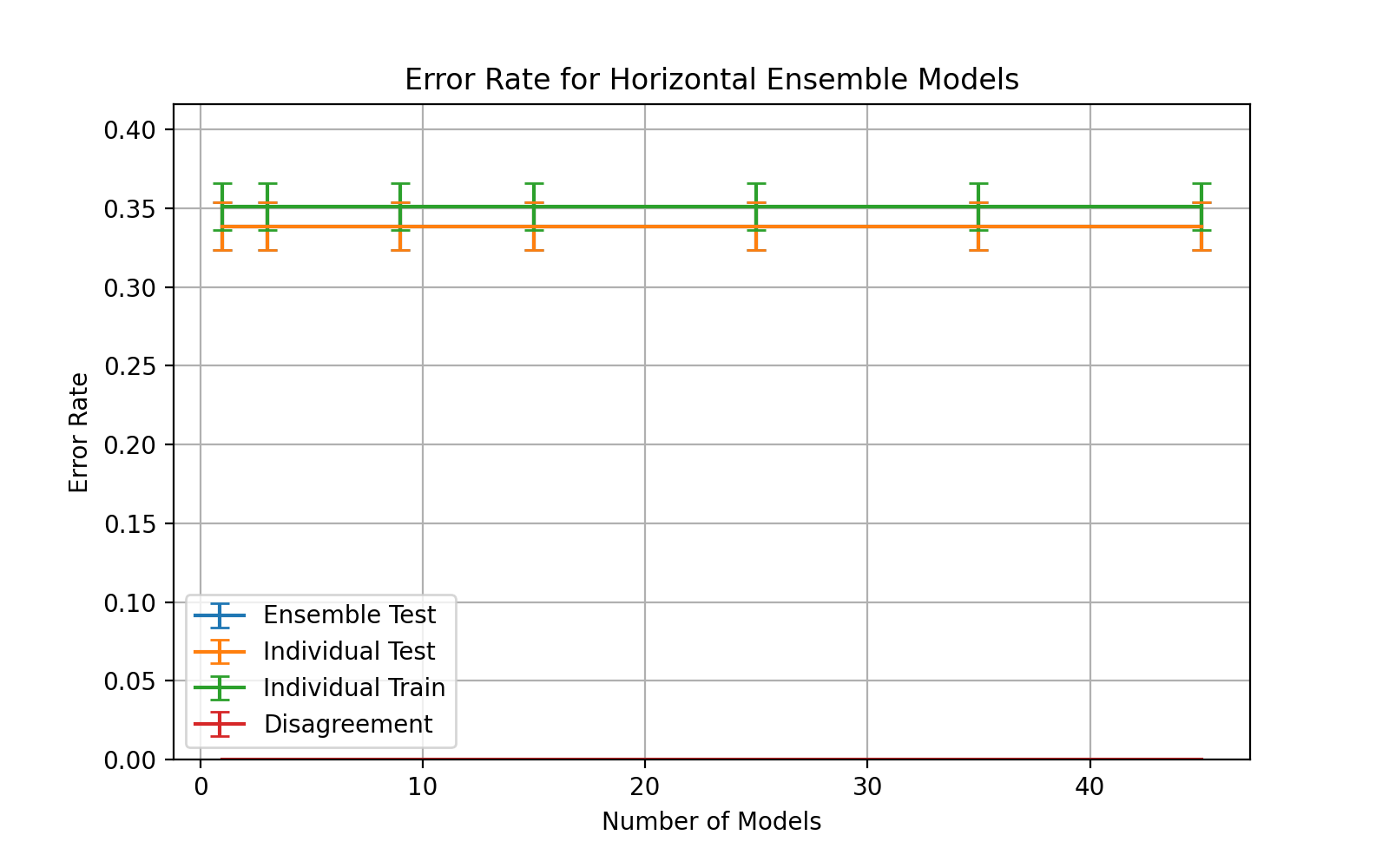

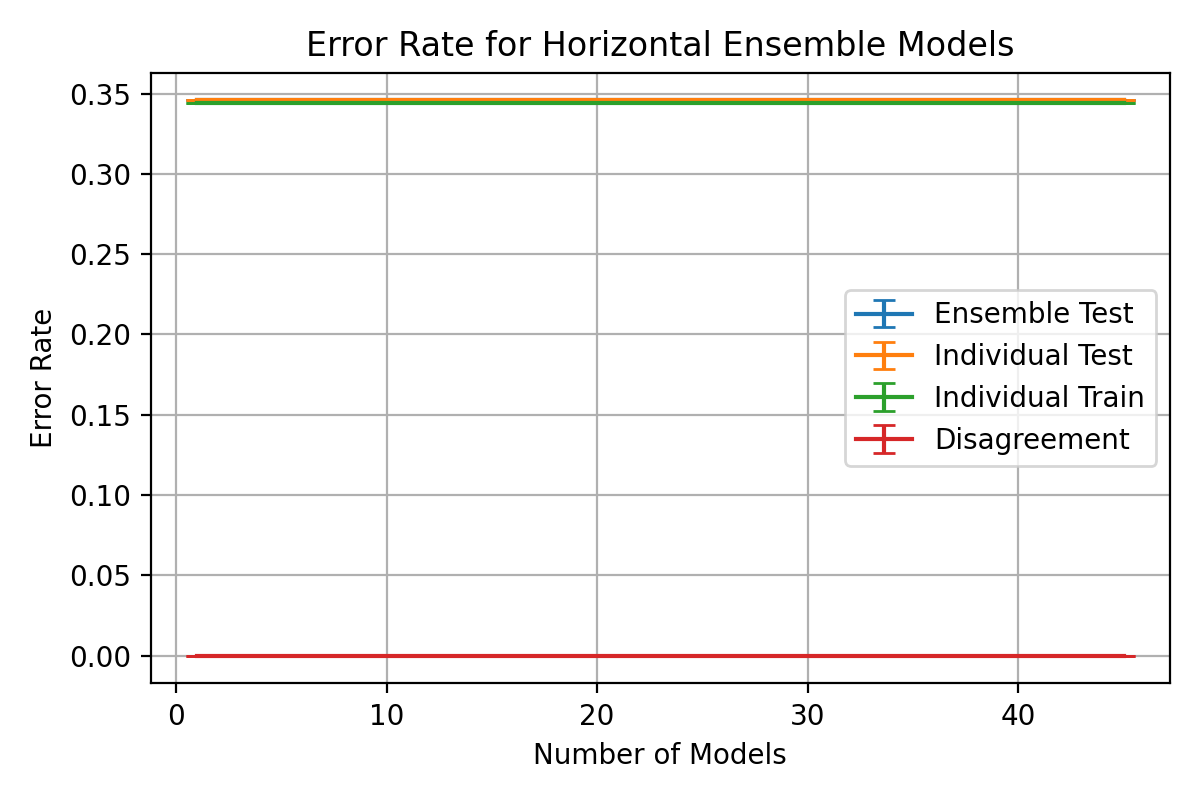

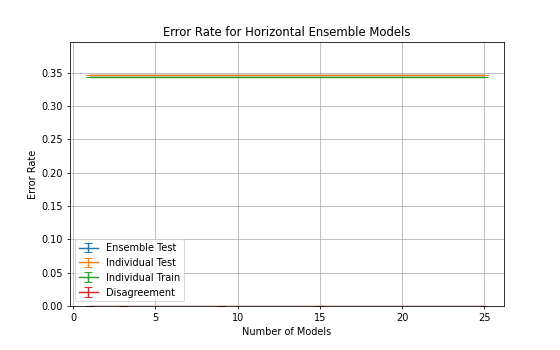

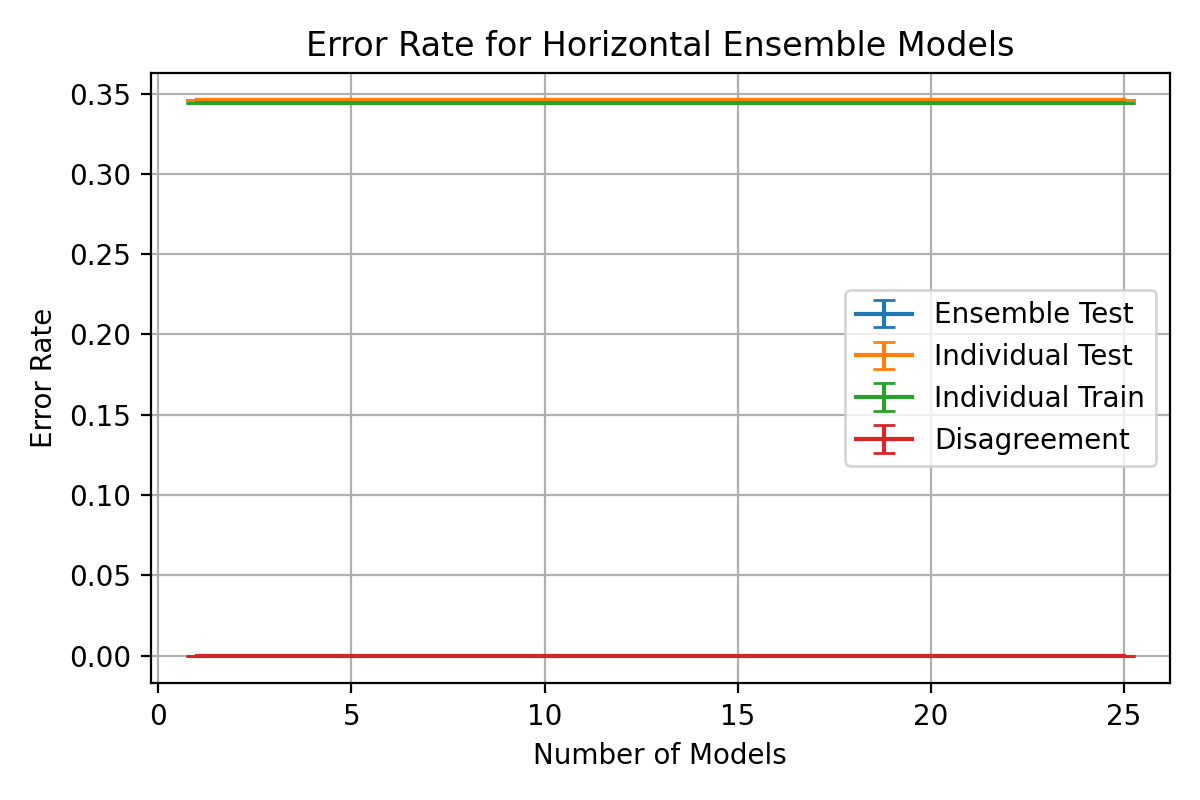

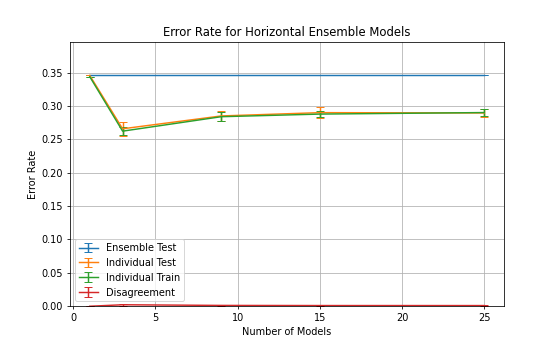

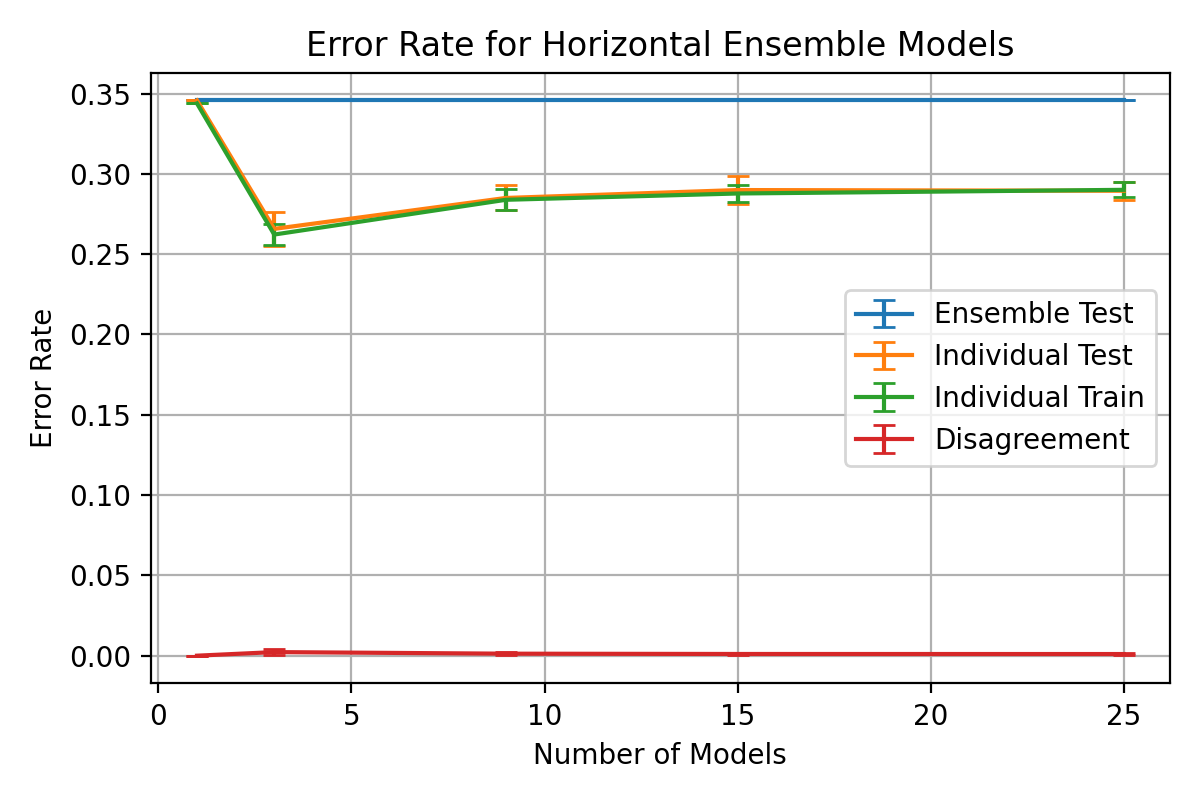

plt.title(f"Error Rate for Horizontal Ensemble Models")

|

||||

# plt.ylim(0, 1)

|

||||

plt.ylim(0, np.max(1 - mean_ensem_accuracy + std_ensem_accuracy) + 0.05)

|

||||

# plt.ylim(0, 0.2)

|

||||

# plt.ylim(0, np.max(1 - mean_ensem_accuracy + std_ensem_accuracy) + 0.05)

|

||||

plt.grid()

|

||||

plt.legend()

|

||||

plt.xlabel("Number of Models")

|

||||

plt.ylabel("Error Rate")

|

||||

|

||||

plt.tight_layout()

|

||||

plt.savefig(f'graphs/{exp2_testname}-error-rate-curves.png')

|

||||

|

||||

plt.show()

|

||||

|

||||

|

||||

@ -831,7 +931,7 @@ plt.show()

|

||||

#

|

||||

# Repeat Exp 2) for cancer dataset with two different optimisers of your choice e.g. 'trainlm' and 'trainrp'. Comment and discuss the result and decide which is more appropriate training algorithm for the problem. In your discussion, include in your description a detailed account of how the training algorithms (optimisations) work.

|

||||

|

||||

# In[7]:

|

||||

# In[127]:

|

||||

|

||||

|

||||

def evaluate_optimisers(optimizers=[(lambda: 'sgd', 'sgd'),

|

||||

@ -868,17 +968,25 @@ for test in evaluate_optimisers(epochs=(5, 300), nmodels=[1, 3, 5]):

|

||||

#

|

||||

# ### Pickle Results

|

||||

#

|

||||

# | test | optim1 | optim2 | optim3 | batch size | hidden nodes | epochs | models |

|

||||

# | --- | --- | --- | --- | --- | --- | --- | --- |

|

||||

# | test | optim1 | optim2 | optim3 | lr | momentum | epsilon | batch size | hidden nodes | epochs | models | stratified |

|

||||

# | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

|

||||

# | 1 | SGD | Adam | RMSprop | 0.1 | 0.0 | 1e7 | 35 | 16 | 1 - 100 | 1, 3, 9, 15, 25 | y |

|

||||

# | 2 | SGD | Adam | RMSprop | 0.05 | 0.01 | 1e7 | 35 | 16 | 1 - 100 | 1, 3, 9, 15, 25 | y |

|

||||

# | 3 | SGD | Adam | RMSprop | 0.1 | 0.01 | 1e7 | 35 | 1 - 400 | 20 | 1, 3, 9, 15, 25, 35, 45 | y |

|

||||

# | 4 | SGD | Adam | RMSprop | 0.075 | 0.01 | 1e7 | 35 | 1 - 400 | 20 | 1, 3, 9, 15, 25, 35, 45 | y |

|

||||

# | 5 | SGD | Adam | RMSprop | 0.05 | 0.01 | 1e7 | 35 | 1 - 400 | 20 | 1, 3, 9, 15, 25, 35, 45 | n |

|

||||

# | 6 | SGD | Adam | RMSprop | 0.02 | 0.01 | 1e7 | 35 | m | 50 | 1, 3, 9, 15, 25, 35, 45 | n |

|

||||

# | 7 | SGD | Adam | RMSprop | 0.1 | 0.9 | 1e-8 | 35 | 1 - 400 | 50 - 100 | 1, 3, 5, 7, 9, 15, 25 | n |

|

||||

# | 8 | SGD | Adam | RMSprop | 0.05 | 0.9 | 1e-8 | 35 | 1 - 400 | 50 - 100 | 1, 3, 5, 7, 9, 15, 25 | n |

|

||||

|

||||

# In[36]:

|

||||

# In[27]:

|

||||

|

||||

|

||||

multi_optim_results = list()

|

||||

multi_optim_iterations = 5

|

||||

multi_optim_iterations = 30

|

||||

|

||||

multi_optim_lr = 0.01

|

||||

multi_optim_mom = 0.0

|

||||

multi_optim_lr = 0.05

|

||||

multi_optim_mom = 0.01

|

||||

multi_optim_eps = 1e-07

|

||||

multi_optims = [(lambda: tf_optim.SGD(learning_rate=multi_optim_lr,

|

||||

momentum=multi_optim_mom), 'sgd'),

|

||||

@ -890,10 +998,12 @@ multi_optims = [(lambda: tf_optim.SGD(learning_rate=multi_optim_lr,

|

||||

|

||||

for i in range(multi_optim_iterations):

|

||||

print(f"Iteration {i+1}/{multi_optim_iterations}")

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5, stratify=labels)

|

||||

multi_optim_results.append(list(evaluate_optimisers(epochs=(1, 100),

|

||||

hidden_nodes=16,

|

||||

nmodels=[1, 3, 5, 7],

|

||||

data_train, data_test, labels_train, labels_test = train_test_split(data, labels, test_size=0.5,

|

||||

# stratify=labels

|

||||

)

|

||||

multi_optim_results.append(list(evaluate_optimisers(epochs=(50, 100),

|

||||

hidden_nodes=(1, 400),

|

||||

nmodels=[1, 3, 9, 15, 25],

|

||||

optimizers=multi_optims,

|

||||

weight_init=lambda: 'random_uniform',

|

||||

batch_size=35,

|

||||

@ -917,7 +1027,7 @@ for i in range(multi_optim_iterations):

|

||||

# 2. Individual Accuracy

|

||||

# 3. Agreement

|

||||

|

||||

# In[37]:

|

||||

# In[467]:

|

||||

|

||||

|

||||

multi_optim_results_dict = dict() # indexed by optimiser name

|

||||

@ -950,10 +1060,8 @@ for optim, optim_results in multi_optim_results_dict.items():

|

||||

for single_test in iteration:

|

||||

|

||||

optim_models_idx = multi_optim_models.index(single_test['num_models'])

|

||||

accuracy_optim_tensor[iter_idx, :, optim_models_idx] = [single_test["accuracy"],

|

||||

np.mean([i["accuracy"][-1] for i in single_test["history"]]),

|

||||

single_test["individual_accuracy"],

|

||||

single_test["agreement"]]

|

||||

accuracy_optim_tensor[iter_idx, :, optim_models_idx] = test_tensor_data(single_test)

|

||||

|

||||

optim_tensors[optim] = {

|

||||

"accuracy": accuracy_optim_tensor,

|

||||

"mean": np.mean(accuracy_optim_tensor, axis=0),

|

||||

@ -970,10 +1078,23 @@ print(f'Loss: {multi_optim_results[0][0][0]["loss"]}')

|

||||

# #### Export/Import Test Sets

|

||||

#

|

||||

# Export mean and standard deviations for retrieval and visualisation

|

||||

pickle.dump(multi_optim_results, open("result.p", "wb"))multi_optim_results = pickle.load(open("results/exp3-test1.p", "rb"))

|

||||

|

||||

# In[28]:

|

||||

|

||||

|

||||

pickle.dump(multi_optim_results, open("results/exp3-test5.p", "wb"))

|

||||

|

||||

|

||||

# In[466]:

|

||||

|

||||

|

||||

exp3_testname = 'exp3-test8'

|

||||

multi_optim_results = pickle.load(open(f"results/{exp3_testname}.p", "rb"))

|

||||

|

||||

|

||||

# ### Best Results

|

||||

|

||||

# In[38]:

|

||||

# In[468]:

|

||||

|

||||

|

||||

for optim, optim_results in optim_tensors.items():

|

||||

@ -986,10 +1107,10 @@ for optim, optim_results in optim_tensors.items():

|

||||

|

||||

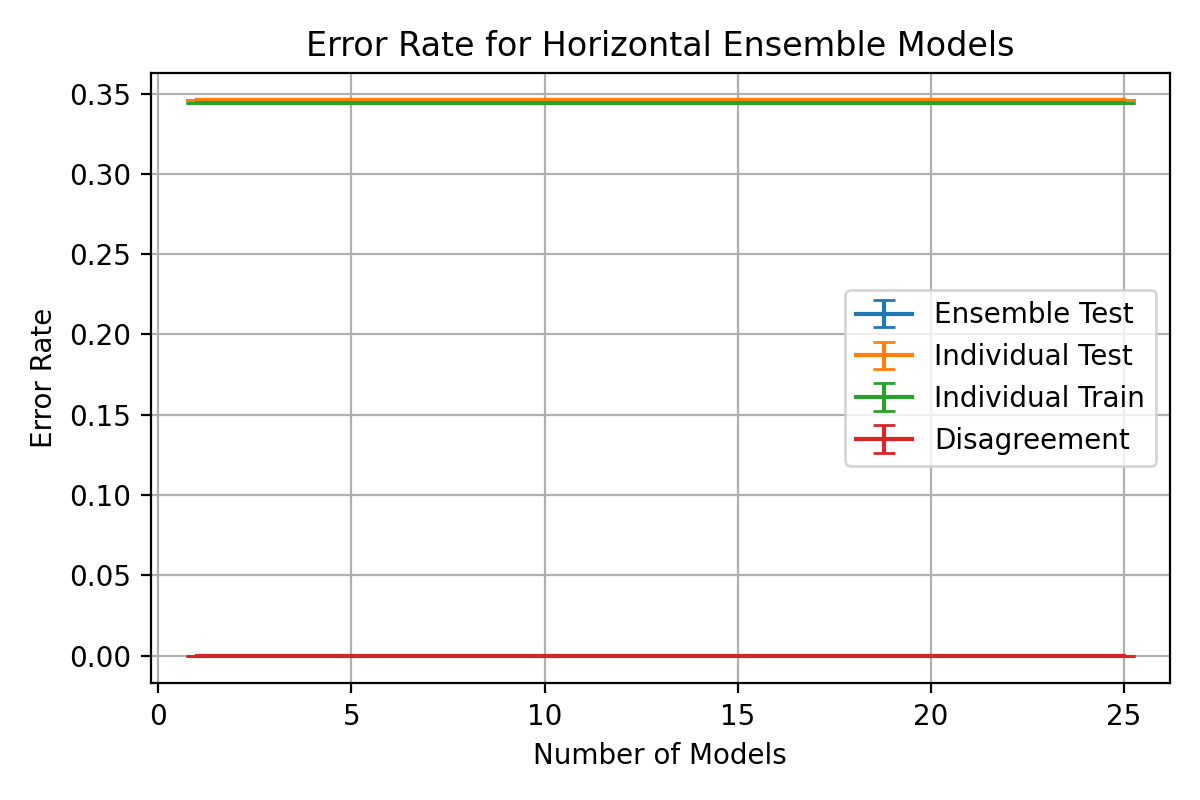

# ### Optimiser Error Rates

|

||||

|

||||

# In[40]:

|

||||

# In[469]:

|

||||

|

||||

|

||||

fig, axes = plt.subplots(1, 3, figsize=(24, 5))

|

||||

fig, axes = plt.subplots(1, 3, figsize=(12, 3))

|

||||

fig.set_dpi(fig_dpi)

|

||||

|

||||

for idx, ((optimiser_name, tensors_dict), ax) in enumerate(zip(optim_tensors.items(), axes.flatten())):

|

||||

@ -1004,13 +1125,21 @@ for idx, ((optimiser_name, tensors_dict), ax) in enumerate(zip(optim_tensors.ite

|

||||

# ax.errorbar(multi_optim_models, 1 - tensors_dict["mean"][3, :], yerr=tensors_dict["std"][3, :], capsize=4, label='Disagreement')

|

||||

|

||||

ax.set_title(f"{optimiser_name} Error Rate for Ensemble Models")

|

||||

# ax.set_ylim(0, 1)

|

||||

ax.set_ylim(0, np.max([np.max(1 - i["mean"] + i["std"]) for i in optim_tensors.values()]) + 0.03)

|

||||

ax.set_ylim(0, 0.1)

|

||||

# ax.set_ylim(0, np.max([np.max(1 - i["mean"] + i["std"]) for i in optim_tensors.values()]) + 0.03)

|

||||

ax.grid()

|

||||

# if idx > 0:

|

||||

ax.legend()

|

||||

ax.set_xlabel("Number of Models")

|

||||

ax.set_ylabel("Error Rate")

|

||||

|

||||

# axes[0].set_ylim(0, 0.4)

|

||||

axes[1].legend()

|

||||

axes[2].legend()

|

||||

|

||||

plt.tight_layout()

|

||||

plt.savefig(f'graphs/{exp3_testname}-error-rate-curves.png')

|

||||

|

||||

plt.show()

|

||||

|

||||

|

||||

|

||||

@ -5,7 +5,7 @@ description = ""

|

||||

authors = ["andy <andy@sarsoo.xyz>"]

|

||||

|

||||

[tool.poetry.dependencies]

|

||||

python = "3.8"

|

||||

python = "~3.8"

|

||||

tensorflow = "^2.4.1"

|

||||

pandas = "^1.2.4"

|

||||

matplotlib = "^3.4.1"

|

||||

|

||||

@ -52,3 +52,58 @@

|

||||

year = {2012}

|

||||

}

|

||||

|

||||

@misc{tf.keras.optimizers.SGD,

|

||||

author = {TensorFlow},

|

||||

title = {tf.keras.optimizers.SGD - Documentation},

|

||||

url = {https://www.tensorflow.org/api_docs/python/tf/keras/optimizers/SGD},

|

||||

urldate = {2021-05-02},

|

||||

year = {2019}

|

||||

}

|

||||

|

||||

@misc{paperspace-mom-rmsprop-adam,

|

||||

author = {Kathuria, Ayoosh},

|

||||

title = {Intro to optimization in deep learning: Momentum, RMSProp and Adam},

|

||||

url = {https://blog.paperspace.com/intro-to-optimization-momentum-rmsprop-adam},

|

||||

urldate = {2021-05-02},

|

||||

year = {2018}

|

||||

}

|

||||

|

||||

@misc{understanding-rmsprop,

|

||||

author = {Bushaev, Vitaly},

|

||||

month = sep,

|

||||

organization = {Towards Data Science},

|

||||

title = {Understanding RMSprop — faster neural network learning},

|

||||

url = {https://towardsdatascience.com/understanding-rmsprop-faster-neural-network-learning-62e116fcf29a},

|

||||

urldate = {2021-05-02},

|

||||

year = {2018}

|

||||

}

|

||||

|

||||

@misc{rmsprop-hinton,

|

||||

author = {Tieleman, T. and Hinton, G.},

|

||||

howpublished = {COURSERA: Neural Networks for Machine Learning},

|

||||

title = {Lecture 6.5—RmsProp: Divide the gradient by a running average of its recent magnitude},

|

||||

url = {https://www.cs.toronto.edu/~tijmen/csc321/slides/lecture_slides_lec6.pdf},

|

||||

urldate = {2021-05-02},

|

||||

year = {2012}

|

||||

}

|

||||

|

||||

@article{adam-paper,

|

||||

author = {Kingma, Diederik and Ba, Jimmy},

|

||||

journal = {International Conference on Learning Representations},

|

||||

month = {12},

|

||||

title = {Adam: A Method for Stochastic Optimization},

|

||||

url = {https://www.researchgate.net/publication/269935079_Adam_A_Method_for_Stochastic_Optimization},

|

||||

urldate = {2021-05-02},

|

||||

year = {2014}

|

||||

}

|

||||

|

||||

@misc{tds-adam,

|

||||

author = {Bushaev, Vitaly},

|

||||

month = oct,

|

||||

organization = {Towards Data Science},

|

||||

title = {Adam — latest trends in deep learning optimization.},

|

||||

url = {https://towardsdatascience.com/adam-latest-trends-in-deep-learning-optimization-6be9a291375c},

|

||||

urldate = {2021-05-02},

|

||||

year = {2018}

|

||||

}

|

||||

|

||||

|

||||